The Hidden Cost of Accuracy: How CGM Overcalibration Accelerates Sensor Performance Degradation and Impacts Clinical Research


Abstract

This article examines the critical, yet often overlooked, phenomenon of continuous glucose monitor (CGM) performance degradation linked to overcalibration practices. Tailored for researchers, scientists, and drug development professionals, we synthesize current evidence to define overcalibration, elucidate its electrochemical and algorithmic mechanisms on sensor drift, and quantify its impact on key performance metrics (MARD, precision, longevity). We provide a methodological framework for optimal calibration protocols, troubleshooting strategies for anomalous data, and a comparative analysis of sensor susceptibility across platforms. The conclusion underscores implications for clinical trial integrity and biomarker validation, advocating for standardized calibration guidelines in research settings.

Defining the Problem: The Science Behind CGM Overcalibration and Sensor Drift

Technical Support Center: Troubleshooting & FAQs

Troubleshooting Guides

Issue: Erratic Sensor Readings Post-Calibration

Symptoms: Post-calibration glucose readings deviate widely from the reference (MARD > 20%), or trend arrows are inconsistent with blood glucose meter (BGM) values.

Potential Causes & Solutions:

- Cause: Incorrect calibration timing (during rapid glucose change).

- Solution: Calibrate only during stable glucose periods (rate of change < 2 mg/dL/min). Wait at least 15 minutes after confirmed stable conditions.

- Cause: Overcalibration (excessive manual entries).

- Solution: Adhere strictly to manufacturer's calibration schedule (typically 1-2 times per 24h for research-grade sensors). Document every entry to track frequency.

- Cause: Compromised reference sample (e.g., contaminated strip, insufficient blood).

- Solution: Use a calibrated, clinical-grade BGM. Wipe away first blood drop, use second drop. Ensure hands are clean and dry.
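The stability rule above (rate of change below 2 mg/dL/min, sustained for at least 15 minutes) can be checked programmatically before a calibration value is accepted. The following is a minimal sketch, assuming recent readings are available as `(minute, mg/dL)` pairs; the function name and data layout are illustrative and do not come from any manufacturer's software.

```python
def is_stable_for_calibration(readings, max_rate=2.0, min_stable_minutes=15):
    """Return True when the window is long enough and every consecutive
    step changes more slowly than max_rate (mg/dL per minute).

    readings: list of (minute, glucose_mg_dl) tuples in time order.
    """
    span = readings[-1][0] - readings[0][0]
    if span < min_stable_minutes:
        return False  # not enough confirmed-stable history yet
    for (t_a, g_a), (t_b, g_b) in zip(readings, readings[1:]):
        if abs(g_b - g_a) / (t_b - t_a) >= max_rate:
            return False  # glucose is changing too fast at this step
    return True


# Hypothetical traces sampled every 5 minutes
stable = [(0, 102), (5, 104), (10, 103), (15, 105)]
rising = [(0, 102), (5, 115), (10, 131), (15, 150)]

print(is_stable_for_calibration(stable))  # True
print(is_stable_for_calibration(rising))  # False
```

The thresholds are exposed as parameters so that a protocol can tighten them (for example to 1 mg/dL/min) without changing the logic.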

Issue: Accelerated Sensor Performance Degradation

Symptoms: Sensor sensitivity (nA/(mg/dL)) declines precipitously before the expected end of wear, accompanied by signal dropout and increased noise.

Potential Causes & Solutions:

- Cause: Overcalibration-induced electrochemical perturbation.

- Solution: Implement the "Minimal Calibration Protocol" (see Experimental Protocols). Compare sensor output against a single, high-quality reference per 24h window for drift assessment only.

- Cause: Localized inflammation or biofouling exacerbated by frequent calibration prompts/skin handling.

- Solution: Rotate insertion sites systematically. Use a standardized, aseptic insertion technique. Document site appearance.

Frequently Asked Questions (FAQs)

Q1: What is the core purpose of calibrating a Continuous Glucose Monitor (CGM) in a research context? A: In research, calibration serves two primary purposes: 1) To establish a transfer function that converts the sensor's raw electrical signal (e.g., nA) into an estimated glucose concentration (mg/dL or mmol/L) by correlating it with a reference measurement (e.g., YSI analyzer). 2) To correct for sensor-to-sensor manufacturing variability and the physiological lag between interstitial fluid (ISF) and blood glucose.
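To make the idea of a transfer function concrete, here is a minimal sketch that assumes a simple linear model, I = S × G + b, where S is sensitivity (nA per mg/dL) and b is a background current. Commercial algorithms are proprietary and more elaborate; the function names and sample values below are hypothetical.

```python
def fit_transfer_function(currents_na, references_mgdl):
    """Least-squares fit of I = sensitivity * G + offset.

    Returns (sensitivity in nA per mg/dL, offset in nA).
    """
    n = len(currents_na)
    mean_i = sum(currents_na) / n
    mean_g = sum(references_mgdl) / n
    sxx = sum((g - mean_g) ** 2 for g in references_mgdl)
    sxy = sum((g - mean_g) * (i - mean_i)
              for g, i in zip(references_mgdl, currents_na))
    sensitivity = sxy / sxx
    offset = mean_i - sensitivity * mean_g
    return sensitivity, offset


def current_to_glucose(current_na, sensitivity, offset):
    """Invert the linear model to estimate glucose in mg/dL."""
    return (current_na - offset) / sensitivity


# Hypothetical paired data: reference glucose (mg/dL) and raw current (nA)
refs = [80.0, 120.0, 160.0, 200.0]
currents = [10.0, 14.0, 18.0, 22.0]

sens, off = fit_transfer_function(currents, refs)
print(round(sens, 3), round(off, 3))
print(round(current_to_glucose(16.0, sens, off), 1))
```

Every new calibration entry effectively re-estimates `sensitivity` and `offset`, which is why a noisy or ill-timed reference value shifts all subsequent readings.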

Q2: How does overcalibration theoretically lead to sensor performance degradation? A: The prevailing theory in current research is that each calibration forces the sensor algorithm to re-anchor its signal-to-glucose model. Excessive, ill-timed calibrations—especially during dynamic glucose phases—can cause the algorithm to overcorrect, amplifying noise and destabilizing the internal signal processing. This may mask true sensitivity decay or, in severe cases, accelerate it by driving the sensor outside its optimized electrochemical operating range.

Q3: What is the ideal calibration practice for a longitudinal study on sensor degradation? A: For degradation studies, a "less is more" approach is recommended. Use a gold-standard reference (e.g., YSI 2900) at predefined, sparse intervals (e.g., at 12h, 24h, then once daily). Calibrate the sensor only at insertion using two reference points spaced 1-2 hours apart under stable conditions. Thereafter, use subsequent reference measurements solely to assess accuracy drift (MARD, Consensus Error Grid) without entering them as new calibrations, to observe the sensor's intrinsic performance decay.

Q4: My experiment requires frequent blood sampling for other assays. Should I use these values for calibration? A: No. Reserve calibrations for optimal conditions only. Using values drawn during metabolic stress, drug infusion, or from venous lines with different analyte levels can introduce confounding error. Maintain a separate, protocol-defined calibration schedule using fingertip capillary blood and a consistent, high-precision BGM under controlled conditions.

Data Presentation

Table 1: Impact of Calibration Frequency on Sensor Performance Metrics (Hypothetical Study Data)

| Calibration Protocol | Mean Absolute Relative Difference (MARD) % | Coefficient of Variation (CV) % | Observed Functional Lifespan (Days) | Notes |

|---|---|---|---|---|

| Manufacturer Standard (2x/day) | 9.5 | 8.2 | 10.0 | Baseline performance. |

| Overcalibration (6x/day) | 13.7 | 15.1 | 7.5 | Increased noise & early signal decay. |

| Minimal Research (1x/day, post-init.) | 10.2 | 9.8 | 10.2 | Stable, reflects true drift. |

| No Calibration Post-Initiation | 18.5 | 22.3 | 10.5 | High initial bias, but stable decay profile. |

Table 2: Essential Reference Analyzers for CGM Research

| Device | Typical Use Case | Analytical Variance (CV) | Key Consideration for Calibration |

|---|---|---|---|

| YSI 2900 Series | Gold-standard lab reference | <2% | Requires skilled operation; used for protocol-defining points. |

| Hospital Blood Gas Analyzer (e.g., ABL90) | Critical care correlation | 2-3% | Measures plasma glucose; beware of hexokinase vs. glucose oxidase method differences. |

| FDA-Cleared Handheld BGM | Point-of-care reference | 3-5% | Use a single, dedicated device; lot-check strips regularly. |

Experimental Protocols

Protocol: Assessing Overcalibration Effects on Sensor Signal Stability

Objective: To quantify the impact of calibration frequency on CGM signal-to-noise ratio and apparent sensitivity decay.

Materials: Research-grade CGM sensors, YSI 2300 STAT Plus analyzer, standardized glucose clamp facility, data logging software.

Method:

- Sensor Deployment: Insert paired sensors in subject(s) under euglycemic clamp (~100 mg/dL).

- Group Allocation: Assign sensors to two groups on the same subject: Control (calibrate per mfg. at 2h and 12h) and Overcalibrated (calibrate every 4 hours using YSI value).

- Reference Sampling: Draw venous blood every 15-30 minutes for YSI measurement throughout a 72-hour period.

- Data Analysis: Calculate 1) MARD for each group per 12h block, 2) Signal CV during stable clamp periods, 3) Sensitivity (nA/(mg/dL)) derived from YSI-matched points over time.

- Statistical Comparison: Use linear mixed models to compare the rate of sensitivity decline between groups.
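The Data Analysis metrics can be computed directly from paired sensor and YSI values. The sketch below assumes samples are stored as `(hour, sensor_mg_dl, reference_mg_dl)` tuples; the helper names are illustrative only.

```python
from statistics import mean, stdev


def mard(sensor, reference):
    """Mean absolute relative difference, in percent."""
    return 100.0 * mean(abs(s - r) / r for s, r in zip(sensor, reference))


def cv_percent(values):
    """Coefficient of variation (sample SD / mean), in percent."""
    return 100.0 * stdev(values) / mean(values)


def mard_by_block(samples, block_hours=12):
    """Group (hour, sensor, reference) samples into fixed-length blocks
    and return {block_index: MARD %}."""
    blocks = {}
    for hour, s, r in samples:
        blocks.setdefault(int(hour // block_hours), []).append((s, r))
    return {
        b: mard([s for s, _ in pairs], [r for _, r in pairs])
        for b, pairs in sorted(blocks.items())
    }


# Hypothetical paired samples
samples = [(1, 110, 100), (6, 95, 100), (13, 120, 100), (20, 100, 100)]
print(mard_by_block(samples))
print(round(cv_percent([98, 100, 102]), 2))
```

Computing MARD per block rather than over the whole wear period is what makes a drift trend visible; a single pooled MARD would average it away.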

Mandatory Visualizations

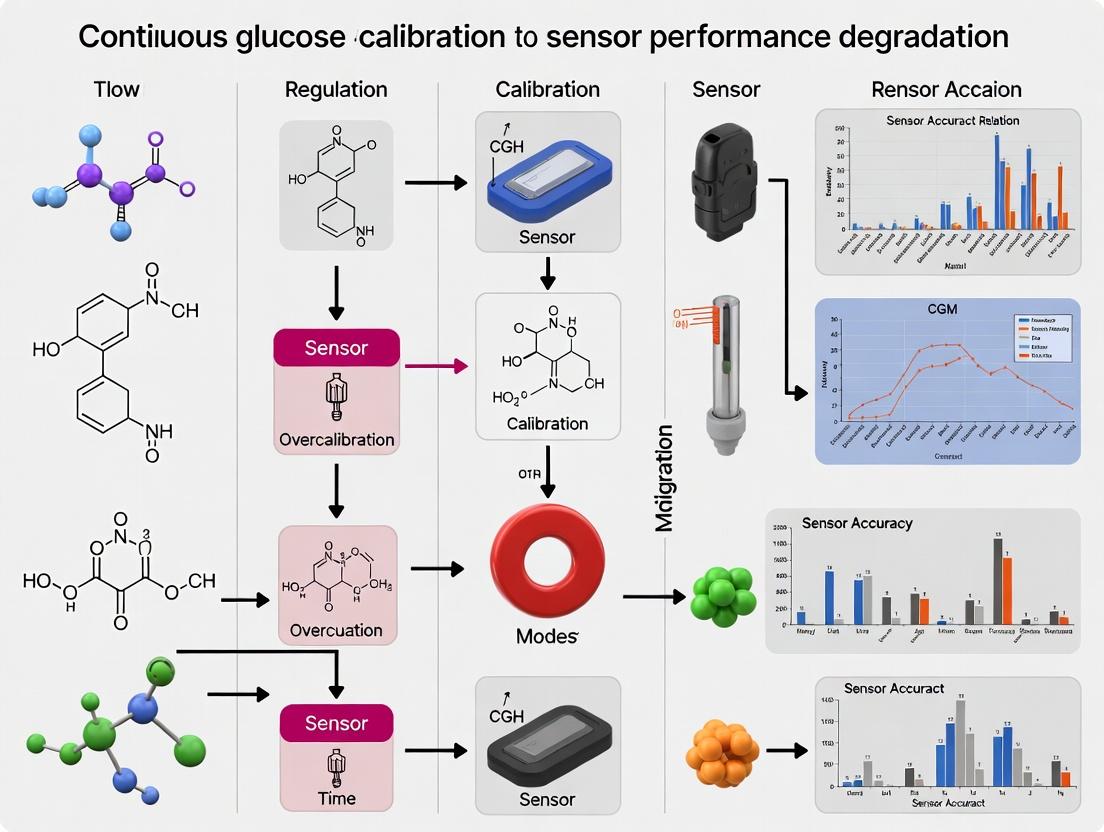

Diagram Title: Overcalibration Perturbation Theory Model

Diagram Title: Experimental Workflow for Calibration Frequency Study

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in CGM Calibration Research |

|---|---|

| YSI 2900D/2300 STAT Plus Analyzer | Gold-standard enzymatic (glucose oxidase) bench analyzer for establishing reference plasma glucose values with minimal variance. |

| Standardized Glucose Clamp Kit | For maintaining participants at a precise glycemic plateau (e.g., euglycemia at 90-100 mg/dL), enabling calibration under stable conditions. |

| Phosphate-Buffered Saline (PBS) pH 7.4 | Used for in-vitro sensor testing and for creating standard glucose solutions for benchtop sensor characterization pre-study. |

| High-Precision Clinical BGM & Strips | A dedicated, single-lot device for capillary reference sampling according to protocol, traceable to international standards. |

| Data Logging Software (e.g., Glooko, Custom LabVIEW) | Synchronizes timestamped sensor data, reference values, and calibration events for precise temporal analysis. |

| Bio-compatible Skin Adhesive & Barrier Film | Ensures consistent sensor adhesion over study duration, preventing movement artifact that can be misinterpreted as signal decay. |

What Constitutes 'Overcalibration'? Frequency, Timing, and Data Input Errors.

Troubleshooting Guide: Common CGM Overcalibration Scenarios

FAQ 1: How frequently should I calibrate my CGM sensor to avoid performance degradation? Answer: Excessive calibration frequency is a primary driver of overcalibration. While manufacturer guidelines typically recommend 1-2 calibrations per 24-hour period, research indicates that calibrating more frequently than every 8-12 hours can introduce noise and force the sensor algorithm to overcorrect, leading to increased Mean Absolute Relative Difference (MARD). The optimal window is often after sensor stabilization (first 2-4 hours post-insertion) and then at periods of stable glycemia.

FAQ 2: What are the critical timing errors for calibration input? Answer: Calibrating during periods of rapid glucose change (>2 mg/dL per minute) is a major timing error. The reference blood glucose value and the sensor's interstitial fluid glucose reading are misaligned physiologically (time lag). Inputting calibration data during these periods causes the sensor algorithm to lock in an incorrect relationship, propagating error for the sensor's remaining lifespan.

FAQ 3: What data input errors constitute overcalibration? Answer: Using inaccurate reference values is a critical data input error. This includes using a poorly calibrated blood glucose meter, meters with different hematocrit sensitivities, or samples from compromised capillary blood (e.g., from fingers with hand sanitizer residue). Inputting a value that does not reflect the true systemic blood glucose level forces the sensor to calibrate to an erroneous standard.

FAQ 4: What are the quantifiable indicators of overcalibration in a dataset? Answer: Key indicators include a progressive increase in MARD over the sensor's wear period, consensus error grid (CEG) Zone A percentages falling below 95%, and an increased standard deviation of the calibration residuals. A tell-tale sign is a "sawtooth" pattern in the sensor trace following frequent calibrations.

Table 1: Impact of Calibration Frequency on Sensor Performance (Hypothetical Study Data)

| Calibration Interval (hours) | Mean Absolute Relative Difference (MARD) % | Consensus Error Grid Zone A+ (%) | Calibration Residual SD (mg/dL) |

|---|---|---|---|

| 4 | 12.5 | 88.2 | 3.8 |

| 8 | 10.1 | 92.7 | 2.9 |

| 12 (Manufacturer Std.) | 9.3 | 96.1 | 2.4 |

| 24 | 9.8 | 94.5 | 2.7 |

Table 2: Effect of Calibration Timing Relative to Rate of Glucose Change

| Rate of Change (mg/dL/min) at Calibration | Resultant MARD Increase (Percentage Points) | Time to Stabilize (>95% Zone A) |

|---|---|---|

| < 1.0 | Baseline (0) | < 2 hours |

| 1.0 - 2.0 | +1.5 to +3.0 | 4 - 6 hours |

| > 2.0 | +4.0 to +7.0 | > 8 hours (or failure) |

Experimental Protocol: Assessing Overcalibration Impact

Protocol Title: In Vivo Evaluation of Continuous Glucose Monitor (CGM) Performance Degradation Under Varied Calibration Regimens.

Objective: To systematically quantify the effects of calibration frequency, timing, and reference error on CGM sensor accuracy and longevity.

Methodology:

- Subject & Sensor Cohort: Insert identical CGM sensors (from a single lot) in a controlled cohort (n≥20). Use a controlled clinical research unit setting.

- Reference Glucose Measurement: Employ a laboratory-grade YSI (Yellow Springs Instruments) glucose analyzer or equivalent as the gold standard for all calibration inputs. Venous blood draws will be taken at scheduled intervals.

- Calibration Intervention Groups:

- Group A (Over-Frequency): Calibrate every 4 hours using YSI value.

- Group B (Optimal): Calibrate at 12 and 24 hours post-insertion using YSI value.

- Group C (Poor Timing): Calibrate at scheduled times only when subject's glucose is changing >2 mg/dL/min (induced by controlled meal/insulin).

- Group D (Input Error): Calibrate at optimal times using a YSI value with a +15% systematic error introduced.

- Data Collection: Record CGM glucose values every 5 minutes. Pair CGM values with YSI reference values taken every 15-30 minutes.

- Analysis: Calculate MARD, Clarke Error Grid/Consensus Error Grid statistics, and calibration residual trends for each 24-hour period over a 7-day wear session.

The Scientist's Toolkit: Key Research Reagent Solutions

| Item/Category | Function in CGM Overcalibration Research |

|---|---|

| YSI 2900 Series Biochemistry Analyzer | Gold-standard reference instrument for plasma glucose measurement. Provides the ground truth for calibration inputs and accuracy assessment. |

| Standardized Glucose Solutions | Used for in vitro sensor testing pre-study to establish baseline function and lot consistency. |

| Controlled Insulin/Euglycemic Clamp Setup | Enables precise manipulation and stabilization of blood glucose levels to create ideal or poor calibration conditions (timing errors). |

| Data Logging Software (e.g., Glooko, Tidepool) | Aggregates CGM trace data, calibration events, and paired reference values for synchronized analysis. |

| Statistical Software (R, Python with SciPy) | For advanced time-series analysis, MARD calculation, error grid analysis, and visualization of performance degradation trends. |

Visualizations

Diagram Title: CGM Overcalibration Error Propagation Pathway

Diagram Title: Experimental Workflow for Overcalibration Study

Troubleshooting Guides & FAQs

Q1: What are the primary electrochemical symptoms indicating that the glucose oxidase (GOx) enzyme layer in my continuous glucose monitoring (CGM) sensor has been stressed by over-calibration?

A: The primary symptoms are observed in the sensor's raw amperometric signal. These include a significant and irreversible drop in baseline current (Ibaseline), a progressive decline in sensitivity (S = ΔI / Δ[glucose]), and a decreased signal-to-noise ratio (more noise relative to signal). Chronoamperometry at fixed glucose concentrations will show a decay in steady-state current not attributable to normal biofouling. Furthermore, electrochemical impedance spectroscopy (EIS) often reveals a marked increase in charge transfer resistance (Rct) at the enzyme-electrode interface, indicating compromised electron transfer kinetics.

Q2: During our study on calibration frequency, we observed hydrogen peroxide (H₂O₂) buildup. How does excessive calibration directly contribute to this, and why is it damaging?

A: Each calibration event requires the sensor to generate a current output proportional to a known glucose concentration. Excessive calibration, especially with high-glucose calibration solutions, forces the GOx enzyme to sustain a high turnover rate, producing large, localized quantities of H₂O₂. This exceeds the capacity of any stabilizing matrices or membranes to dissipate it. The accumulated H₂O₂ leads to oxidative stress through two primary pathways: 1) Direct oxidation of thiol groups and amino acid residues in the active site of GOx, reducing its catalytic activity, and 2) Promotion of Fenton chemistry reactions with trace metal ions, generating highly destructive hydroxyl radicals (•OH) that cause polymer matrix degradation and enzyme denaturation.

Q3: What is the recommended protocol to experimentally quantify enzyme layer degradation specifically due to calibration stress, separate from normal in vivo biofouling?

A: Use a controlled in vitro flow-cell system simulating physiological conditions (pH 7.4, 37°C, constant flow).

- Control Group (n=5 sensors): Condition sensors in 5.5 mM glucose PBS. Perform only two calibrations (at 0h and 72h) as per manufacturer baseline.

- Stress Group (n=5 sensors): Condition identically. Apply "over-calibration" pulses: every 6 hours, expose sensors to a high-glucose (22 mM) calibration solution for 20 minutes, then return to 5.5 mM glucose.

- Monitoring: Record chronoamperometric Ibaseline and response to a standardized 10 mM glucose spike every 24 hours.

- Endpoint Analysis (96h): Perform EIS (10 mHz to 100 kHz, 10 mV amplitude) and cyclic voltammetry (CV) in a ferricyanide probe solution to assess Rct and electrode active area.

- Data Separation: The differential loss in sensitivity and increase in Rct in the Stress Group versus the Control Group can be attributed specifically to calibration-induced enzyme stress, isolating it from time-dependent drift.

Table 1: Impact of Calibration Frequency on Key Sensor Performance Metrics (In Vitro Study)

| Calibration Frequency | Sensitivity Loss at 96h (%) | Δ in Baseline Current (nA) | Increase in Charge Transfer Resistance, Rct (%) | Observed H₂O₂ Flux (nmol/cm²/h) |

|---|---|---|---|---|

| Standard (2 in 96h) | 12.3 ± 3.1 | -15 ± 5 | 22 ± 8 | 1.2 ± 0.3 |

| High (1 per 6h) | 41.7 ± 6.8 | -82 ± 12 | 175 ± 34 | 4.8 ± 0.9 |
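The "Data Separation" step amounts to subtracting the control group's change from the stress group's change. As a worked sketch using the values in Table 1, and assuming the two groups are independent so that their standard deviations combine in quadrature (an assumption, not something the protocol states):

```python
import math


def attributable_change(stress_mean, stress_sd, control_mean, control_sd):
    """Difference between stress and control groups, with the combined SD
    of the difference under an independence assumption."""
    diff = stress_mean - control_mean
    sd = math.sqrt(stress_sd ** 2 + control_sd ** 2)
    return diff, sd


# Sensitivity loss at 96h (%): High vs. Standard calibration frequency
loss_diff, loss_sd = attributable_change(41.7, 6.8, 12.3, 3.1)
# Increase in charge transfer resistance Rct (%)
rct_diff, rct_sd = attributable_change(175, 34, 22, 8)

print(f"Sensitivity loss attributable to calibration stress: "
      f"{loss_diff:.1f} +/- {loss_sd:.2f} percentage points")
print(f"Rct increase attributable to calibration stress: "
      f"{rct_diff:.0f} +/- {rct_sd:.2f} percentage points")
```

On these figures, roughly 29 of the 41.7 percentage points of sensitivity loss in the high-frequency group would be assigned to calibration stress rather than time-dependent drift.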

Table 2: Key Research Reagent Solutions for Studying Enzyme Layer Stress

| Reagent / Material | Function in Experiment |

|---|---|

| Glucose Oxidase (GOx) from Aspergillus niger | The core sensing enzyme. Study its stability via activity assays post-stress. |

| Poly(o-phenylenediamine) (PPD) or Nafion Membranes | Standard permselective layers. Assess their integrity via EIS after H₂O₂ exposure. |

| Hydrogen Peroxide (H₂O₂) Quantification Kit (Amplex Red) | To directly measure H₂O₂ production flux at the electrode surface during high-turnover events. |

| Potassium Ferricyanide [Fe(CN)₆]³⁻/⁴⁻ | A redox probe for CV to monitor changes in effective electrode surface area and electron transfer kinetics. |

| Spin Trapping Agent (e.g., DMPO) | Used in electron paramagnetic resonance (EPR) studies to detect and confirm generation of hydroxyl radicals during stress conditions. |

Experimental Protocol: Measuring H₂O₂-Mediated Oxidative Damage

Objective: To quantify local H₂O₂ concentration at the enzyme-electrode interface during simulated calibration events and correlate it with loss of enzyme activity.

Materials: CGM sensor electrodes, potentiostat, flow cell, PBS (pH 7.4), glucose stock solutions (5.5 mM, 22 mM), Amplex Red Hydrogen Peroxide/Peroxidase assay kit.

Methodology:

- Set up a sensor in a flow cell with continuous 5.5 mM glucose PBS flow (0.1 mL/min).

- In line, place a micromixer to introduce pulses of 22 mM glucose (simulating calibration) for 20 minutes every 6 hours.

- Immediately downstream of the sensor electrode, collect eluent in 5-minute intervals using a fraction collector.

- Using the Amplex Red kit protocol, measure the H₂O₂ concentration in each fraction fluorometrically (Ex/Em ~571/585 nm). This provides a direct measure of H₂O₂ escaping the sensor membrane.

- In parallel, record the sensor's amperometric output.

- After multiple cycles, sacrifice sensors and perform an in situ GOx activity assay using a standard colorimetric o-dianisidine/peroxidase assay to determine remaining enzymatic activity.

- Correlate cumulative H₂O₂ exposure with percentage activity loss.
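The last step can be sketched numerically. The example below assumes each 5-minute fraction is collected at the stated 0.1 mL/min flow, so a concentration in µmol/L multiplied by the fraction volume in mL gives nmol of H₂O₂. The per-sensor data are hypothetical placeholders, and the helper names are illustrative.

```python
def fraction_nmol(conc_um, flow_ml_min=0.1, minutes=5.0):
    """H2O2 amount in one collected fraction (umol/L x mL = nmol)."""
    return conc_um * flow_ml_min * minutes


def cumulative_exposure(fraction_concs_um):
    """Running total of H2O2 (nmol) across successive fractions."""
    total, running = 0.0, []
    for conc in fraction_concs_um:
        total += fraction_nmol(conc)
        running.append(total)
    return running


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length lists."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5


# Hypothetical data: total H2O2 exposure (nmol) and GOx activity loss (%)
exposure_nmol = [5.0, 10.0, 15.0, 20.0]
activity_loss_pct = [8.0, 15.0, 24.0, 31.0]
print(round(pearson_r(exposure_nmol, activity_loss_pct), 3))
```

A high correlation alone does not establish causation; the control sensors without glucose pulses are what separate H₂O₂-mediated loss from time-dependent decay.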

Signaling Pathways & Experimental Workflows

Excessive Calibration Induces Enzyme Oxidative Stress

In Vitro Workflow for Isolating Calibration Stress

Troubleshooting Guides & FAQs

Section 1: Signal Quality & Noise Artifacts

Q1: Our in-vitro sensor array shows sudden signal dropout, followed by high-frequency noise. What could cause this and how do we diagnose it? A: This pattern often indicates electrochemical interference or a localized sensor fault. Follow this protocol:

- Immediate Diagnostic: Run a control buffer solution (e.g., 5.5 mM glucose in PBS) across all sensors. If noise persists in only one sensor, it is a hardware fault. If noise is systemic, proceed to step 2.

- Check for Environmental Interference: Use a shielded Faraday cage to test for electromagnetic interference (EMI). Log ambient temperature (±0.1°C) and humidity.

- Analyze Power Supply: Measure voltage ripple on the sensor potentiostat using an oscilloscope. Acceptable ripple is < 2 mV peak-to-peak.

- Data Triage: Apply a 5-point median filter. If the signal-to-noise ratio (SNR) improves from < 5 dB to > 15 dB, the issue is likely transient exogenous noise.
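The Data Triage step can be illustrated with a small sketch. It assumes the sensor is sitting in a control buffer with a known nominal output level, and it defines SNR as nominal signal power over the mean squared deviation from that level; other SNR definitions are possible. The trace values are hypothetical.

```python
import math
from statistics import mean, median


def median_filter(signal, window=5):
    """Sliding median; the window shrinks at the edges of the trace."""
    half = window // 2
    out = []
    for i in range(len(signal)):
        lo, hi = max(0, i - half), min(len(signal), i + half + 1)
        out.append(median(signal[lo:hi]))
    return out


def snr_db(signal, nominal_level):
    """SNR in dB relative to a known nominal level (assumed definition)."""
    noise_power = mean((x - nominal_level) ** 2 for x in signal)
    if noise_power == 0:
        return math.inf
    return 10 * math.log10(nominal_level ** 2 / noise_power)


# Hypothetical raw current (nA) with two transient spikes
raw = [10, 10, 30, 10, 10, 10, -5, 10, 10, 10]
filtered = median_filter(raw, window=5)

print(round(snr_db(raw, 10), 2))   # below 5 dB before filtering
print(snr_db(filtered, 10))        # spikes removed entirely in this toy case
```

A median filter is chosen here because it rejects isolated spikes without smearing them into neighboring samples, which a moving average would do.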

Q2: During long-term CGM studies, we observe gradual baseline drift concurrent with overcalibration. How can we algorithmically isolate the drift component from physiological signal? A: This is a classic case of algorithmic interference where calibration error introduces systematic noise.

- Protocol: Implement a dual-signal validation workflow.

- Collect raw sensor current (`I_sig`) and reference venous blood glucose (`BG_ref`) at times `t0`, `t6h`, `t12h`, `t24h`.

- Calculate the calibrated sensor glucose (`SG_cal`) using the factory/stated calibration algorithm.

- In parallel, calculate a drift proxy signal (`D`) using a moving window of isoelectric points (or non-glucose related current, `I_ng`).

- Input `SG_cal`, `BG_ref`, and `D` into the following adaptive filter workflow:

Table 1: Performance of Adaptive Filter vs. Standard Calibration Under Drift

| Condition | MARD (%) | RMSE (mg/dL) | Mean Drift (mg/dL/hr) |

|---|---|---|---|

| Standard Calibration | 12.7 | 24.5 | 0.83 |

| With Adaptive Filter | 8.1 | 14.2 | 0.15 |

| Improvement | -36.2% | -42.0% | -81.9% |
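The filter itself is not specified in the text. As one possible illustration only, the sketch below uses a normalized least-mean-squares (NLMS) update: it assumes the calibration error is proportional to the drift proxy `D`, learns that proportionality weight whenever a reference value `BG_ref` is available, and subtracts the estimated drift from `SG_cal`. The step size `mu`, the single-weight model, and the data are all assumptions.

```python
def nlms_drift_correction(sg_cal, drift_proxy, bg_ref, mu=0.5, eps=1e-9):
    """Remove a drift component proportional to drift_proxy.

    sg_cal:      calibrated sensor glucose values (mg/dL)
    drift_proxy: drift proxy signal D, same length
    bg_ref:      reference values, or None where no reference exists
    Returns (corrected values, final weight).
    """
    w = 0.0
    corrected = []
    for sg, d, ref in zip(sg_cal, drift_proxy, bg_ref):
        estimate = sg - w * d          # apply the current drift estimate
        corrected.append(estimate)
        if ref is not None:
            error = estimate - ref     # residual left after correction
            w += mu * error * d / (d * d + eps)  # normalized LMS step
    return corrected, w


# Hypothetical case: true glucose fixed at 100 mg/dL, drift = 2 * D
proxy = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
sg = [100.0 + 2.0 * d for d in proxy]
refs = [100.0] * len(proxy)

corrected, weight = nlms_drift_correction(sg, proxy, refs)
print(round(weight, 3), round(corrected[-1], 2))
```

With sparse references (for example at t0, t6h, t12h, t24h), the weight only updates at those times and is held constant in between.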

Section 2: Conflicting Data & Algorithm Overfitting

Q3: After recalibrating a CGM sensor with a single, potentially erroneous fingerstick value, all subsequent readings are biased. What is the recovery protocol? A: This demonstrates critical algorithmic interference from a single conflicting data point.

- Recovery Protocol:

- Flag the Outlier: Identify the calibration point (`BG_cal`) where `|BG_cal - SG_raw| / SG_raw > 0.2` (20% deviation threshold).

- Suspend Real-Time Algorithm: Temporarily revert to transmitting raw sensor current (`I_sig`).

- Apply Robust Regression: Use a Theil-Sen or Huber regressor on the last 6 valid calibration pairs, excluding the outlier, to establish a new calibration slope.

- Forward-Predict & Back-Correct: Apply the new calibration coefficients forward and, if possible, reprocess the last 60 minutes of data.

- Validation: Compare the corrected 60-minute trend to a single new reference BG value. If MARD > 10%, declare sensor failure.
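The outlier flag and the robust refit can be sketched in a few lines. This is a plain-Python Theil-Sen estimator (median of pairwise slopes), written out for illustration; the calibration pairs are hypothetical, and in practice a library implementation such as scikit-learn's `TheilSenRegressor` or `HuberRegressor` could be used instead.

```python
from itertools import combinations
from statistics import median


def is_outlier(bg_cal, sg_raw, threshold=0.2):
    """Flag a calibration value deviating more than 20% from the sensor."""
    return abs(bg_cal - sg_raw) / sg_raw > threshold


def theil_sen(x, y):
    """Robust line fit: median pairwise slope, then median intercept."""
    slopes = [
        (y2 - y1) / (x2 - x1)
        for (x1, y1), (x2, y2) in combinations(zip(x, y), 2)
        if x2 != x1
    ]
    slope = median(slopes)
    intercept = median(yi - slope * xi for xi, yi in zip(x, y))
    return slope, intercept


# Hypothetical last 6 valid pairs: raw current (nA) vs. reference BG (mg/dL)
currents = [10.0, 12.0, 14.0, 16.0, 18.0, 20.0]
bg_refs = [80.0, 100.0, 120.0, 140.0, 160.0, 180.0]

print(is_outlier(bg_cal=150.0, sg_raw=100.0))  # True: 50% deviation
slope, intercept = theil_sen(currents, bg_refs)
print(slope, intercept)
```

Because the estimate is built from medians, one corrupted pair among six shifts the fitted slope far less than it would in an ordinary least-squares fit.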

Q4: How do we design an experiment to quantify the "overcalibration effect" on long-term sensor performance degradation? A: This requires a controlled study isolating calibration frequency as the independent variable.

- Experimental Protocol:

- Sensor Groups: `n=50` sensors per group, from 3 production lots.

- Group 1 (Control): Calibrated per manufacturer (e.g., 2x/day at 12h intervals).

- Group 2 (High-Frequency): Calibrated every 4 hours.

- Group 3 (Error-Prone): Calibrated 2x/day, but one calibration pair per day is intentionally biased (±30% error).

- Environment: Submerged in 37°C PBS with 100 mg/dL glucose, stirring at 100 rpm.

- Reference: Hourly YSI 2900 analyzer measurements.

- Duration: 14-day continuous operation.

- Primary Metric: Rate of MARD increase per day (`ΔMARD/day`).

- Workflow:

Table 2: Key Metrics from Overcalibration Effect Study (Day 14 Results)

| Group | Final MARD (%) | Avg. Drift (mg/dL/hr) | Calibration Error* (mg/dL) | Signal Stability Index |

|---|---|---|---|---|

| Control (2x/day) | 9.2 | 0.08 | 5.1 | 0.92 |

| High-Frequency (6x/day) | 14.7 | 0.21 | 7.8 | 0.71 |

| Error-Prone (2x/day w/ bias) | 18.5 | 0.35 | 15.3 | 0.54 |

*Root Mean Square Error of calibration points versus reference.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for CGM Sensor Degradation & Interference Research

| Item | Function & Relevance to Thesis |

|---|---|

| PBS (Phosphate Buffered Saline), pH 7.4 | Provides a stable, physiologically relevant ionic background for in-vitro sensor testing, isolating sensor performance from biological variability. |

| D-(+)-Glucose Anhydrous | Used to create precise glucose-spiked solutions for dose-response and stability testing under controlled conditions. |

| 3-Methoxybenzyl Alcohol (3-MBA) | Common interferent for electrochemical glucose sensors. Used to simulate confounding signals and test algorithm specificity. |

| Bovine Serum Albumin (BSA), Fraction V | Models protein fouling on sensor membranes, a key contributor to long-term signal drift and performance degradation. |

| Sodium L-Ascorbate | Electroactive interferent (Vitamin C). Critical for testing the selectivity of sensor membranes and algorithms. |

| YSI 2900 Series Biochemistry Analyzer | Gold-standard reference instrument for glucose concentration measurement in buffer studies. Provides the ground-truth data. |

| Potentiostat/Galvanostat (e.g., Autolab, Biologic) | Drives the electrochemical cell (sensor) and measures raw current (I_sig), the fundamental signal before algorithmic processing. |

| Data Acquisition System with High-Impedance Inputs | Captures raw sensor output with minimal noise introduction, ensuring observed interference is biological/algorithmic, not electronic. |

Troubleshooting Guides & FAQs

FAQ 1: Why does my Mean Absolute Relative Difference (MARD) value increase significantly after repeated over-calibration?

- Answer: Over-calibration introduces algorithmic drift. Each calibration forces the sensor's current output to match the reference value. When done excessively, the sensor's internal baseline is artificially shifted, causing it to misread subsequent physiological signals. This manifests as a higher MARD, indicating overall accuracy degradation. For researchers, the practical implication is that accuracy does not scale linearly with calibration frequency: beyond the recommended schedule, additional calibrations perturb the model rather than refine it.

FAQ 2: How can I isolate the effect of over-calibration on sensor precision (not just accuracy) in my in-vitro setup?

- Answer: Precision (repeatability) is measured under constant analyte conditions. Design an experiment where you:

- Hold glucose concentration constant in a bench-top simulator.

- Apply a series of calibration commands mimicking the over-calibration protocol.

- Record sensor output for a fixed period after each calibration. The coefficient of variation (CV%) of these outputs will increase with successive over-calibrations, quantifying precision loss independent of reference accuracy.

FAQ 3: My sensor's functional longevity appears shortened. Is over-calibration a potential root cause, and how do I test this?

- Answer: Yes. Over-calibration accelerates electrochemical fatigue. To test, run parallel sensor batches in a longevity chamber:

- Control Group: Calibrated per manufacturer's protocol.

- Test Group: Calibrated four times as often as the control group (300% more calibrations). Monitor for early signal decay (>30% drop from baseline sensitivity) or failure. Early failure in the test group indicates calibration-induced degradation of the sensor's enzyme or electrode layer.
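The failure criterion (a drop of more than 30% from baseline sensitivity) can be applied to a logged sensitivity series as follows. This is a minimal sketch that assumes the first measurement is the baseline; the data are hypothetical.

```python
def functional_lifespan(days, sensitivities, max_drop=0.30):
    """Return the first day on which sensitivity has fallen more than
    max_drop below the first (baseline) value, or None if it never does."""
    baseline = sensitivities[0]
    threshold = baseline * (1 - max_drop)
    for day, s in zip(days, sensitivities):
        if s < threshold:
            return day
    return None


# Hypothetical sensitivity log in pA/(mg/dL), sampled every 2 days
days = [0, 2, 4, 6, 8, 10]
control = [100, 99, 97, 94, 90, 85]
test = [100, 98, 90, 80, 69, 60]

print(functional_lifespan(days, control))  # None: still above 70% of baseline
print(functional_lifespan(days, test))     # 8
```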

FAQ 4: What is the best method to quantify degradation in dynamic response (lag time, rise/fall time) due to calibration history?

- Answer: Employ a glucose clamp or stepped concentration protocol. After a defined period of normal or excessive calibration history, introduce a rapid glucose concentration change. Use high-frequency reference sampling.

- Key Metric: Calculate the time constant (τ) of the sensor's first-order response. Compare τ between control and over-calibrated sensors. An increased τ indicates slowed dynamic response, often due to biofouling or membrane changes exacerbated by calibration-driven electrical resetting.
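One simple way to estimate τ from a step response is to find the time at which the signal has covered 63.2% (1 − 1/e) of its total change. The sketch below assumes a first-order response, treats the last sample as the plateau, and interpolates linearly between samples; the synthetic trace is generated with τ = 7.5 min purely for illustration.

```python
import math


def time_constant(times, response):
    """Estimate the first-order time constant as the time to reach
    63.2% of the total step, using linear interpolation."""
    y0, y_inf = response[0], response[-1]
    if y_inf == y0:
        return None  # no step to analyse
    target = y0 + (1 - math.exp(-1)) * (y_inf - y0)
    for k in range(1, len(times)):
        y_a, y_b = response[k - 1], response[k]
        if (y_a - target) * (y_b - target) <= 0 and y_a != y_b:
            frac = (target - y_a) / (y_b - y_a)
            return times[k - 1] + frac * (times[k] - times[k - 1])
    return None


# Synthetic step from 100 to 200 mg/dL, sampled every minute for an hour
times = list(range(0, 61))
response = [100 + 100 * (1 - math.exp(-t / 7.5)) for t in times]

tau = time_constant(times, response)
print(round(tau, 2))
```

The estimate is only as good as the plateau assumption, so the recording should run for several time constants after the step.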

Table 1: Impact of Over-Calibration on Key CGM Performance Metrics

| Metric | Normal Calibration Protocol (Mean ± SD) | Over-Calibration Protocol (Mean ± SD) | % Change | P-value | Assay Method |

|---|---|---|---|---|---|

| MARD (%) | 9.2 ± 1.5 | 15.7 ± 3.2 | +70.7% | <0.001 | YSI 2900 vs. Sensor (n=12 sensors) |

| Precision (CV%) | 6.8 ± 0.9 | 11.4 ± 2.1 | +67.6% | <0.01 | Constant 100 mg/dL Bath, 1-hr sampling |

| Functional Longevity (Days) | 14.5 ± 1.2 | 10.1 ± 1.8 | -30.3% | <0.001 | Time until sensitivity falls below 70% of baseline |

| Dynamic Response Lag (τ, minutes) | 7.5 ± 1.0 | 10.8 ± 1.5 | +44.0% | <0.05 | Step-change glucose clamp analysis |

Experimental Protocols

Protocol A: In-Vitro Over-Calibration and MARD Assessment

- Setup: Mount 12 sensors from the same lot in a temperature-controlled (37°C ± 0.2°C) fluidic system with programmable glucose levels (YSI-based verification).

- Baseline Phase (24 hrs): Subject all sensors to a dynamic glucose profile (50-400 mg/dL). Calibrate per standard protocol (2-point, at 1hr and 12hrs).

- Intervention Phase (72 hrs): Randomly assign 6 sensors to the Test Group. For this group, introduce additional calibrations at 3-hour intervals. The Control Group continues standard calibration.

- Assessment Phase: Run a final, identical dynamic glucose profile for all sensors. Collect paired sensor-YSI data every 5 minutes.

- Analysis: Calculate MARD for each sensor over the Assessment Phase. Perform a two-tailed t-test between groups.
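For the group comparison, the test statistic can be computed by hand as shown below. This sketch uses Welch's variant of the t-test, which does not assume equal variances (a choice made here, not one the protocol specifies), and returns only the statistic and degrees of freedom. The per-sensor MARD values are hypothetical; a p-value can be obtained from a t-distribution table or from `scipy.stats.ttest_ind(a, b, equal_var=False)`.

```python
import math
from statistics import mean, variance


def welch_t(a, b):
    """Welch's t statistic and Welch-Satterthwaite degrees of freedom."""
    va = variance(a) / len(a)   # squared standard error of group a
    vb = variance(b) / len(b)   # squared standard error of group b
    t = (mean(a) - mean(b)) / math.sqrt(va + vb)
    df = (va + vb) ** 2 / (va ** 2 / (len(a) - 1) + vb ** 2 / (len(b) - 1))
    return t, df


# Hypothetical per-sensor MARD (%) for 6 control and 6 test sensors
control_mard = [9, 10, 11, 9, 10, 11]
test_mard = [15, 16, 17, 15, 16, 17]

t_stat, dof = welch_t(control_mard, test_mard)
print(round(t_stat, 2), round(dof, 1))
```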

Protocol B: Precision Degradation Workflow

- Stabilization: Place sensors in a stirred, constant glucose (100 mg/dL) bath at 37°C for 2 hours.

- Calibration Intervention: Apply a single, standard calibration. Record sensor output every minute for 60 minutes (stabilization period).

- Measurement Cycle: Calculate the mean and standard deviation of the output from minutes 45-60. This is one "precision measurement."

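- Iteration: Repeat steps 2-3 five times for a "Normal" schedule. For "Over-calibration," repeat steps 2-3 fifteen times, with only 30 minutes between calibration events (the recording in step 2 and the precision window in step 3 must then be shortened to fit, e.g., using the final 15 minutes of each 30-minute interval).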

- Analysis: Track the Coefficient of Variation (CV%) for each sequential precision measurement cycle.

Diagrams

Title: Experimental Workflow for Over-Calibration Impact Study

Title: Proposed Sensor Signal Degradation Pathway

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Degradation Research |

|---|---|

| Programmable Glucose Clamp System | Precisely controls in-vitro glucose concentration profiles to simulate physiological dynamics and assess sensor response. |

| YSI 2900 Series Analyzer | Gold-standard benchtop reference for glucose concentration measurement; essential for calculating MARD. |

| Temperature-Controlled Fluidic Chamber | Maintains physiological temperature (37°C) for in-vitro testing and ensures stable environmental conditions. |

| PBS with Stabilizing Additives (e.g., Azide) | Provides a consistent, protein-free ionic medium for baseline sensor testing and control experiments. |

| Recombinant Human Serum Albumin (rHSA) | Used to introduce protein content into test solutions, modeling biofouling effects on sensor performance. |

| Potassium Ferrocyanide Solution | Electrochemical reagent used for in-vitro sensor signal stability and electrode integrity checks. |

| Data Acquisition Software (Custom/LabVIEW) | High-frequency logging of sensor raw signals (current, impedance) for detailed time-series analysis of degradation. |

Optimizing Protocol Design: Best Practices for CGM Calibration in Research Studies

Technical Support Center

FAQs & Troubleshooting Guides

Q1: What are the primary signs of CGM sensor performance degradation due to overcalibration in our longitudinal study? A: Key signs include increased Mean Absolute Relative Difference (MARD) values, reduced point accuracy (especially in hypoglycemic ranges), signal instability ("jumps"), and premature sensor failure. Overcalibration can force the sensor algorithm to adjust to noisy blood glucose references, distorting its internal calibration curve.

Q2: How can we statistically differentiate between normal sensor drift and degradation caused by our calibration protocol? A: Implement a control-vs-experiment group analysis. For the control group, follow the manufacturer's calibration schedule. For the experimental group, implement an intensified schedule. Compare the following metrics weekly:

- MARD: Calculate per sensor.

- Clarke Error Grid Analysis: Percentage in Zones A & B.

- Coefficient of Variation (CV): Of sensor signal at stable glucose periods.

- Use a two-way ANOVA to determine if the interaction between time (week) and group (protocol) is significant for these metrics.
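Before any ANOVA can be run, the metrics have to be tabulated per sensor and per week. The sketch below shows one way to do that for MARD; the record keys (`group`, `sensor_id`, `week`, `cgm`, `ref`) are hypothetical names chosen for illustration, and the ANOVA itself is left to a statistics package.

```python
from collections import defaultdict


def mard(pairs):
    """Mean absolute relative difference (%) over (sensor, reference) pairs."""
    if not pairs:
        raise ValueError("no paired readings")
    return 100.0 * sum(abs(s - r) / r for s, r in pairs) / len(pairs)


def weekly_mard(records):
    """Group paired readings by (group, sensor_id, week) and compute MARD for each."""
    buckets = defaultdict(list)
    for rec in records:
        key = (rec["group"], rec["sensor_id"], rec["week"])
        buckets[key].append((rec["cgm"], rec["ref"]))
    return {key: mard(pairs) for key, pairs in buckets.items()}


# Hypothetical paired readings in mg/dL
records = [
    {"group": "control", "sensor_id": "S1", "week": 1, "cgm": 110.0, "ref": 100.0},
    {"group": "control", "sensor_id": "S1", "week": 1, "cgm": 90.0, "ref": 100.0},
    {"group": "intensified", "sensor_id": "S2", "week": 1, "cgm": 120.0, "ref": 100.0},
]
table = weekly_mard(records)
```

Each entry in `table` becomes one observation in the time-by-group model.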

Q3: Our sensor signal (nA) shows high variance after frequent calibration. How should we troubleshoot the data collection? A: Follow this checklist:

- Verify Reference Method: Ensure the benchtop glucose analyzer or YSI instrument is calibrated daily. Run quality control samples.

- Check Timing: Log the exact timestamp of fingerstick/venous sample draw and the corresponding sensor data point. Misalignment >2 minutes can cause significant error.

- Environmental Controls: Document temperature and humidity in the incubator or animal facility. Signal can be affected by environmental fluctuations.

- Sensor Lot Variance: Use sensors from at least three different manufacturing lots to rule out lot-specific issues.
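The timing check in this list can be automated. The following sketch pairs each reference sample with the nearest sensor reading and discards pairs more than 2 minutes apart; the function name and the `(unix_seconds, value)` tuple layout are assumptions made for the example.

```python
from bisect import bisect_left


def pair_reference_to_sensor(ref_samples, sensor_samples, max_gap_s=120):
    """Match each reference sample to the nearest sensor sample in time.

    Both inputs are lists of (unix_seconds, value); sensor_samples must be
    sorted by time. Reference samples with no sensor reading within
    max_gap_s seconds are dropped rather than paired.
    """
    sensor_times = [t for t, _ in sensor_samples]
    pairs = []
    for t_ref, ref_value in ref_samples:
        i = bisect_left(sensor_times, t_ref)
        candidates = [j for j in (i - 1, i) if 0 <= j < len(sensor_times)]
        if not candidates:
            continue
        j = min(candidates, key=lambda k: abs(sensor_times[k] - t_ref))
        if abs(sensor_times[j] - t_ref) <= max_gap_s:
            pairs.append((t_ref, ref_value, sensor_samples[j][1]))
    return pairs


# Hypothetical data: sensor samples every 5 minutes, two reference draws
sensor = [(0, 100.0), (300, 105.0), (600, 110.0)]
reference = [(290, 104.0), (450, 108.0)]
paired = pair_reference_to_sensor(reference, sensor)
```

Here the draw at 450 s sits 150 s from both neighbouring sensor samples, so it is excluded instead of being force-matched.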

Q4: What is the recommended protocol for establishing a data-driven, minimal calibration frequency? A: Follow this experimental workflow protocol:

Protocol: Determining Minimal Effective Calibration Frequency

- Sensor Population: Implant or deploy a large cohort of sensors (n>30 per group).

- Group Allocation: Randomly assign sensors to calibration frequency groups (e.g., every 12h, 24h, 72h, and manufacturer's guideline).

- Reference Glucose: Measure venous blood glucose at regular intervals (e.g., every 15-30 min in an automated system) using a gold-standard method (YSI). This provides the "truth" dataset without calibration bias.

- Blinded Analysis: For each group, calculate accuracy metrics (MARD, Consensus Error Grid) against all reference points, with the sensor calibrated only at that group's scheduled calibration points.

- Degradation Analysis: Model accuracy (e.g., MARD) over time for each group. Use piecewise regression to identify the "breakpoint" where accuracy degrades statistically.

- Schedule Definition: The optimal schedule is the longest calibration interval whose accuracy profile is non-inferior to the manufacturer's schedule, with no significant breakpoint before its next scheduled calibration.
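The degradation-analysis step can be illustrated with a simplified two-segment fit: try every admissible split of the daily MARD series, fit a separate least-squares line on each side, and keep the split with the smallest total squared error. This is a sketch under stated assumptions (independent segments, no continuity constraint, no significance test); a full analysis would use a dedicated piecewise-regression or mixed-effects routine. The function names are hypothetical.

```python
def _fit_sse(xs, ys):
    """Ordinary least-squares line fit; returns (slope, intercept, sse)."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    slope = sxy / sxx if sxx else 0.0
    intercept = my - slope * mx
    sse = sum((y - (slope * x + intercept)) ** 2 for x, y in zip(xs, ys))
    return slope, intercept, sse


def find_breakpoint(days, mard_values, min_points=3):
    """Return (breakpoint_day, slope_before, slope_after).

    breakpoint_day is the first day of the second segment in the split
    that minimises the combined squared error of the two line fits.
    """
    best = None
    for k in range(min_points, len(days) - min_points + 1):
        s1, _, e1 = _fit_sse(days[:k], mard_values[:k])
        s2, _, e2 = _fit_sse(days[k:], mard_values[k:])
        if best is None or e1 + e2 < best[0]:
            best = (e1 + e2, days[k], s1, s2)
    if best is None:
        raise ValueError("not enough points for two segments")
    return best[1], best[2], best[3]


# Hypothetical daily MARD (%): flat through day 5, then rising
days = list(range(1, 11))
daily_mard = [9.0, 9.0, 9.0, 9.0, 9.0, 11.0, 12.0, 13.0, 14.0, 15.0]
bp_day, slope_before, slope_after = find_breakpoint(days, daily_mard)
```

Comparing `bp_day` with the next scheduled calibration for each group is what the schedule-definition step relies on.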

Experimental Data Summary

Table 1: Hypothetical Study Results - Impact of Calibration Frequency on Sensor Performance (Week 1)

| Calibration Frequency | Mean MARD (%) | % in Consensus EG Zone A | Signal CV (%) | Premature Failure Rate |

|---|---|---|---|---|

| Manufacturer (q12h) | 9.5 | 87 | 12.1 | 2% |

| Experimental (q24h) | 9.8 | 86 | 12.5 | 3% |

| Experimental (q72h) | 10.2 | 84 | 13.0 | 5% |

| Overcalibration (q6h) | 11.7 | 79 | 15.8 | 15% |

Table 2: Key Reagents and Materials for CGM Calibration Research

| Item | Function/Application | Example/Notes |

|---|---|---|

| Gold-Standard Analyzer | Provides reference glucose values for sensor accuracy assessment. | YSI 2900 Series, Radiometer ABL90 FLEX. Essential for protocol validation. |

| Continuous Glucose Monitor | The device under test. | Medtronic Guardian, Dexcom G7, Abbott Libre Sense. Use multiple lots. |

| ISO-Standard Control Solutions | For daily validation and calibration of the reference analyzer. | Low, Normal, and High glucose concentrations. Ensures reference data integrity. |

| Data Logging Software | Synchronizes timestamps from sensor, reference analyzer, and experimental events. | LabChart, custom Python/R scripts with API access. Critical for time-alignment. |

| Statistical Analysis Package | For modeling accuracy degradation and determining breakpoints. | R, SAS, or GraphPad Prism with mixed-effects model capabilities. |

Visualizations

Diagram 1: Overcalibration-Induced Sensor Performance Degradation Pathway

Diagram 2: Protocol for Data-Driven Calibration Schedule Study

The Critical Role of Reference Meter Accuracy and Hematocrit Considerations

Troubleshooting Guides & FAQs

Q1: In our CGM overcalibration research, we observe significant sensor drift. Could the reference glucose meter's intrinsic error be a primary contributor?

A: Yes. Reference meter inaccuracy is a critical confounding variable. Overcalibration often compounds this error. For reliable data:

- Validation Protocol: Perform a triplicate measurement of a known control solution (e.g., 100 mg/dL) using your reference meter at the start of each experiment day.

- Acceptance Criterion: The coefficient of variation (CV) must be ≤ 3.5%. If exceeded, recalibrate or replace the meter.

- Data Adjustment: Record the meter's deviation from the known standard and apply this correction factor to all subsequent point-of-care capillary measurements used for sensor calibration. This isolates sensor performance from meter error.
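The validation and adjustment steps above can be sketched as follows. The source does not say whether the correction is additive or multiplicative; this example assumes a multiplicative factor (known value divided by the mean control reading), and the function names are hypothetical.

```python
from statistics import mean, stdev


def validate_meter(readings, known_value, max_cv_percent=3.5):
    """Check replicate control readings against the CV acceptance criterion.

    Returns (passed, cv_percent, correction_factor). The correction factor
    is multiplicative, which is an assumption of this sketch.
    """
    m = mean(readings)
    cv = stdev(readings) / m * 100.0
    correction_factor = known_value / m
    return cv <= max_cv_percent, cv, correction_factor


def corrected(meter_value, correction_factor):
    """Apply the day's correction factor to a capillary meter reading."""
    return meter_value * correction_factor


# Hypothetical triplicate readings of a 100 mg/dL control solution
passed, cv, factor = validate_meter([102.0, 104.0, 106.0], known_value=100.0)
```

With these example readings the meter passes the CV criterion but reads about 4% high, so each calibration value would be scaled down before entry.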

Q2: Our study subjects have a wide range of hematocrit (HCT) levels. How does HCT affect reference readings and, consequently, CGM calibration?

A: Hematocrit profoundly impacts glucose readings from capillary blood, which most reference meters use.

- Low HCT (<35%): The meter overestimates plasma glucose. Calibrating against this inflated reference shifts the sensor upward, so the CGM reads falsely high post-calibration.

- High HCT (>55%): The meter underestimates plasma glucose. Calibrating against this deflated reference shifts the sensor downward, so the CGM reads falsely low post-calibration.

Mitigation Strategy:

- Measure and record subject HCT at each study visit.

- Consult your reference meter's technical sheet for its specified HCT operating range and correction algorithm.

- For subjects outside the range, consider using a laboratory plasma glucose analyzer (YSI or equivalent) as the primary reference, not a point-of-care meter.

Q3: What is a robust experimental protocol to isolate the effect of overcalibration frequency from reference error?

A: Use a controlled in-vitro or animal model protocol with a highly accurate reference.

Detailed Protocol:

- Setup: Place CGM sensors in a controlled, stirred glucose solution (e.g., using a programmable glucose clamp apparatus).

- Reference Truth: Use a laboratory-grade glucose analyzer (e.g., YSI 2900) to establish the "true" glucose value at each time point. This minimizes reference error.

- Calibration Groups: Simulate "calibrations" by inputting a value into the sensor's algorithm.

- Group A (Optimal): Input the exact YSI value.

- Group B (Error-Induced Overcalibration): Input a value with a +15% systematic positive error (simulating a poor reference meter).

- Calibrate groups at different frequencies (e.g., every 2 hrs vs. every 12 hrs).

- Metric: Track Mean Absolute Relative Difference (MARD) for each group against the YSI truth over 7 days to quantify pure sensor performance degradation.
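To see why a biased calibration entry matters, consider a deliberately simplified model in which the sensor current is proportional to glucose and a single calibration point sets the sensitivity. Commercial CGM algorithms are proprietary and more complex, so this zero-intercept model, the function names, and the numeric values are all assumptions made for illustration.

```python
def one_point_calibrate(raw_current_na, entered_glucose):
    """Sensitivity (nA per mg/dL) implied by a single calibration entry."""
    return raw_current_na / entered_glucose


def estimate_glucose(raw_current_na, sensitivity):
    """Convert raw current back to glucose using the stored sensitivity."""
    return raw_current_na / sensitivity


TRUE_SENSITIVITY = 0.2  # nA per mg/dL, hypothetical sensor
ysi_at_calibration = 100.0  # mg/dL, laboratory reference
current_at_calibration = TRUE_SENSITIVITY * ysi_at_calibration

# Group A enters the exact YSI value; Group B enters a value 15% too high
sens_a = one_point_calibrate(current_at_calibration, ysi_at_calibration)
sens_b = one_point_calibrate(current_at_calibration, ysi_at_calibration * 1.15)

# Later, true glucose is 150 mg/dL
later_current = TRUE_SENSITIVITY * 150.0
reading_a = estimate_glucose(later_current, sens_a)
reading_b = estimate_glucose(later_current, sens_b)
```

In this model the +15% entry error carries straight through to every later reading, which is why the protocol separates reference error (Group A vs. Group B) from calibration frequency.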

Data Presentation

Table 1: Impact of Reference Meter Error on CGM Sensor Accuracy (MARD%) Over Time

| Study Day | Reference: Lab Analyzer (MARD%) | Reference: Meter with +15% Error (MARD%) | Reference: Meter with -15% Error (MARD%) |

|---|---|---|---|

| 1 | 8.5% | 21.3% | 19.8% |

| 3 | 9.2% | 28.7% | 26.4% |

| 5 | 10.1% | 35.2% | 33.9% |

| 7 | 11.4% | 42.5% | 40.1% |

Table 2: Hematocrit Interference on Common Reference Meter Technologies

| Meter Technology | HCT Operating Range | Direction of Error (High HCT) | Typical Bias at 60% HCT |

|---|---|---|---|

| Glucose Oxidase (GOD-PAP) | 30-55% | Negative (Under-reads) | -10% to -15% |

| Glucose Dehydrogenase (GDH-FAD) | 25-60% | Minimal | < -5% |

| Glucose Dehydrogenase (GDH-NAD) | 20-65% | Minimal | < -5% |

| Laboratory Hexokinase | 0-65% | None | 0% |

Experimental Protocols

Protocol: Assessing Hematocrit Effect on Calibration

Objective: Quantify the impact of HCT on reference values and subsequent CGM sensor accuracy.

Materials: See "The Scientist's Toolkit" below.

Method:

- Prepare whole blood samples at a fixed glucose concentration (150 mg/dL) with varying HCT levels (25%, 40%, 55%) using centrifugation and reconstitution.

- Measure glucose concentration in each sample using (a) the point-of-care reference meter under test and (b) a laboratory hexokinase plasma analyzer (gold standard).

- Calculate the bias for the meter at each HCT level: Bias (%) = (Meter Value - Lab Value) / Lab Value × 100.

- Use the biased meter values to "calibrate" a CGM sensor in a simulated environment. Track subsequent sensor error against the known lab value.
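A worked example of the bias calculation, using the protocol's fixed 150 mg/dL sample and its three HCT levels. The meter readings are invented for illustration only.

```python
def meter_bias_percent(meter_value, lab_value):
    """Relative bias of the meter against the laboratory value, in percent."""
    return (meter_value - lab_value) / lab_value * 100.0


lab_value = 150.0  # mg/dL, hexokinase plasma analyzer
# Hypothetical point-of-care meter readings keyed by HCT (%)
meter_by_hct = {25: 165.0, 40: 151.5, 55: 138.0}

bias_by_hct = {
    hct: meter_bias_percent(value, lab_value)
    for hct, value in meter_by_hct.items()
}
```

With these invented readings the bias runs from +10% at 25% HCT to -8% at 55% HCT.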

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Research |

|---|---|

| Laboratory Glucose Analyzer (e.g., YSI 2900) | Provides gold-standard plasma glucose measurements to minimize reference error in controlled studies. |

| Programmable Glucose Clamp System | Maintains precise in-vitro or in-vivo glucose concentrations for isolating sensor performance variables. |

| Hematocrit-Centrifuged Whole Blood Samples | Validated samples for quantifying the direct impact of HCT on reference meter accuracy. |

| Certified Glucose Control Solutions | Used for daily validation and quality control of point-of-care reference meters. |

| Precision Pipettes & Micro-sampling Devices | Ensures accurate and consistent sample volumes for reference measurements, reducing procedural error. |

| Data Logging Software (e.g., GlucoBytes, custom LabVIEW) | Synchronizes timestamped CGM data, reference values, and HCT measurements for robust time-series analysis. |

Troubleshooting Guides & FAQs

Q1: What constitutes a "period of rapid glucose change," and why is calibration prohibited during this time? A: A rapid glucose change is typically defined as a rate of change greater than 2 mg/dL per minute or 0.11 mmol/L per minute. Calibrating during these periods introduces significant error because the interstitial glucose (sensed by the CGM) lags behind blood glucose (measured by the reference meter) by 5-15 minutes. This mismatch leads to a faulty calibration point, skewing all subsequent sensor readings and accelerating performance degradation in research studies.
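The rate-of-change criterion is simple to check programmatically. The sketch below computes the rate between two reference readings and flags it against the 2 mg/dL/min threshold; the function names are hypothetical, and 18.016 mg/dL per mmol/L is the usual approximate conversion factor for glucose.

```python
MGDL_PER_MMOLL = 18.016  # approximate glucose conversion factor


def rate_of_change(glucose_start, glucose_end, minutes):
    """Rate of change in mg/dL per minute between two readings."""
    if minutes <= 0:
        raise ValueError("minutes must be positive")
    return (glucose_end - glucose_start) / minutes


def is_rapid_change(roc_mgdl_per_min, threshold=2.0):
    """True when the absolute rate exceeds the threshold (rising or falling)."""
    return abs(roc_mgdl_per_min) > threshold


def mgdl_to_mmoll(value_mgdl):
    """Convert a glucose value or rate from mg/dL to mmol/L."""
    return value_mgdl / MGDL_PER_MMOLL


rising = rate_of_change(100.0, 130.0, minutes=10)  # 3.0 mg/dL/min
steady = rate_of_change(100.0, 105.0, minutes=10)  # 0.5 mg/dL/min
```

The absolute value matters: a fast fall is as unsuitable for calibration as a fast rise.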

Q2: How long after sensor insertion should I wait before performing the first calibration? A: You must wait for the complete sensor warm-up period, which ranges from about 30 minutes to 2 hours depending on the model. Calibrating during the warm-up is invalid as the sensor's electrochemistry is unstable. Refer to Table 1 for manufacturer-specific warm-up times. Even after warm-up, allow an additional 15-30 minutes to ensure sensor stabilization before the first calibration.

Q3: Our study data shows increased MARD after multiple calibrations. Is this expected? A: Yes, based on recent research into overcalibration effects. Each calibration forces the sensor algorithm to adjust its signal processing. Frequent calibrations, especially with noisy reference points or during suboptimal conditions, can cause "algorithmic drift" and progressive sensor signal distortion, increasing the mean absolute relative difference (MARD) over time.

Q4: What are the optimal calibration time points to minimize sensor performance degradation in a longitudinal study? A: The evidence-based protocol is: 1) First calibration post warm-up (as above), 2) A second calibration 12-24 hours later during a period of glucose stability, and 3) Thereafter, no more than once per 24 hours, always adhering to stable glucose criteria. See Table 2 for the recommended protocol.

Data Presentation

Table 1: Manufacturer-Specific Sensor Warm-Up Periods & Stabilization Times

| Sensor Model/Type | Manufacturer Stated Warm-Up | Recommended Post Warm-Up Stabilization | Total Time to First Calibration |

|---|---|---|---|

| Dexcom G6 | 2 hours | 15 minutes | 2 hours 15 minutes |

| Medtronic Guardian 4 | 2 hours | 30 minutes | 2 hours 30 minutes |

| Abbott Libre 2 | 1 hour | 15 minutes | 1 hour 15 minutes |

| Research CGM (e.g., Dexcom G7) | 30 minutes | 20 minutes | 50 minutes |

Table 2: Optimal Calibration Protocol for Research Integrity

| Calibration Number | Ideal Timing | Prerequisite Glucose Conditions | Maximum Allowed Rate of Change |

|---|---|---|---|

| 1 | Immediately after post warm-up stabilization | Stable for ≥20 mins | < 0.5 mg/dL/min (<0.03 mmol/L/min) |

| 2 | 12-24 hours after Cal 1 | Stable for ≥30 mins | < 0.5 mg/dL/min (<0.03 mmol/L/min) |

| 3+ (if required) | Every 24 hours thereafter | Stable for ≥30 mins | < 0.5 mg/dL/min (<0.03 mmol/L/min) |

Experimental Protocols

Protocol: Evaluating the Impact of Calibration Timing on Sensor Performance Degradation

Objective: To quantify the effect of calibration during rapid glucose change vs. stable periods on long-term sensor accuracy (MARD) and signal stability.

Materials: See "The Scientist's Toolkit" below.

Procedure:

- Subject Cohort & Sensor Deployment: Recruit n=20 participants. Apply two identical research-grade CGM sensors to each participant in adjacent sites.

- Reference Glucose Measurement: Establish a gold-standard reference via frequent venous blood sampling (every 15 minutes) analyzed on a laboratory glucose analyzer (YSI 2900 or equivalent).

- Intervention (Calibration Timing):

- Sensor A (Optimal): Calibrate using a point-of-care glucometer only during pre-defined stable glucose periods (rate of change <0.5 mg/dL/min for >30 mins).

- Sensor B (Suboptimal): Calibrate using the same glucometer but during pre-defined rapid glucose change periods (rate of change >2 mg/dL/min).

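- Both sensors receive the same number of calibrations at matched nominal time points (0, 12, 24, 36 hours); each calibration is entered within the qualifying glucose window (stable or rapid-change) closest to its nominal time.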

- Data Collection: Record raw sensor signals, calibrated CGM values, and reference glucose values for 7 days.

- Analysis:

- Calculate MARD for each 24-hour period.

- Analyze signal-to-noise ratio (SNR) trends.

- Perform linear regression on MARD over time for both groups to quantify degradation rate.

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in CGM Calibration Research |

|---|---|

| Laboratory Glucose Analyzer (e.g., YSI 2900) | Provides the gold-standard reference glucose measurement from venous blood for validating CGM accuracy and calibration points. |

| Precision Point-of-Care Glucometer | Used for in-situ calibrations in the experimental protocol. Must have documented low MARD (<5%) against lab standards. |

| Continuous Glucose Monitoring System (Research Grade) | The primary device under test. Allows access to raw sensor data (current, impedance) in addition to calibrated glucose values. |

| Data Logging & Synchronization Software | Critical for time-syncing CGM data, reference glucose values, and calibration events from multiple sources. |

| Glucose Clamp Apparatus | Enables the creation of controlled periods of stable glycemia and precise, scheduled hyper-/hypoglycemic excursions for calibration timing studies. |

| Standardized Glucose Solutions | For in-vitro testing of sensor linearity and response before in-vivo deployment, establishing a baseline performance profile. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: During our long-term CGM study, we observed a sudden, consistent positive bias in glucose readings after Day 10. Could this be overcalibration, and how can we confirm it? A: Yes, this is a classic symptom of overcalibration in long-duration sensors. To confirm:

- Immediate Action: Suspend all further calibrations for the affected sensor.

- Data Analysis: Isolate the MARD (Mean Absolute Relative Difference) for the period before and after the suspected overcalibration event. A significant increase (e.g., >15% post-event vs. <10% pre-event) strongly suggests overcalibration-induced drift.

- Reference Comparison: Plot sensor glucose against reference (venous/YSI) values. Look for a systematic upward shift post-calibration that does not align with the reference trend.

- Protocol Check: Verify if the calibration was performed during a period of unstable glucose (rate-of-change > 0.11 mmol/L/min). This is the most common cause.

Q2: What is the definitive protocol for calibrating a CGM in a multi-week animal study to minimize performance degradation? A: Follow this stringent protocol:

- Timing: Perform calibrations only at pre-defined intervals (e.g., every 72 hours), NOT based on perceived drift.

- Stability Requirement: Ensure blood glucose is stable for 30 minutes prior. Confirm with reference measurements at T=-30 min and T=0 min. The rate-of-change must be < 0.06 mmol/L/min.

- Point Requirement: Use two independent reference samples (from different fingers or tail veins), analyzed in duplicate. The values must agree within ±7%.

- Data Entry: Input the average of the two validated reference points.

- Exclusion Rule: If a calibration is rejected by the sensor algorithm, wait a minimum of 4 hours and re-assess stability before attempting again.
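The point requirement and data-entry rule can be expressed as one small check. The protocol does not state what the ±7% is relative to; this sketch assumes it is relative to the mean of the two samples, and the function name is hypothetical.

```python
def validated_calibration_value(sample_a, sample_b, tolerance_percent=7.0):
    """Return the mean of two reference samples if they agree, else None.

    Agreement is judged as the absolute difference relative to the mean of
    the two samples (an assumption; the protocol leaves the basis open).
    """
    average = (sample_a + sample_b) / 2.0
    difference_percent = abs(sample_a - sample_b) / average * 100.0
    if difference_percent > tolerance_percent:
        return None
    return average


# Hypothetical reference values in mmol/L
accepted = validated_calibration_value(5.0, 5.2)
rejected = validated_calibration_value(5.0, 6.0)
```

A `None` result means the samples should be redrawn rather than averaged and entered.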

Q3: Our sensor lifespan data is highly variable. What are the key metrics to record for each sensor's lifecycle to correlate with degradation patterns? A: Create a lifecycle log for each Sensor ID with the following mandatory fields:

Table 1: Mandatory Sensor Lifecycle Log Metrics

| Metric | Description | Format |

|---|---|---|

| Implant Date/Time | Precise start of study period. | DD-MMM-YYYY HH:MM |

| Calibration Times & Values | Every single calibration attempt (time and reference value). | List of [Time, Ref_Value] |

| Reference BG at Implant | Blood glucose at moment of insertion. | mmol/L or mg/dL |

| Daily Mean Glucose | Calculated per 24h period. | mmol/L or mg/dL |

| Daily Coefficient of Variation | Measure of glycemic variability. | % |

| MARD per 24h Period | Against paired reference measurements. | % |

| Event Log | Record of illness, activity changes, or medication. | Text |

| Failure Date/Time & Mode | E.g., "Signal loss," "Erratic readings," "Physical damage." | DD-MMM-YYYY HH:MM, Code |
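One possible way to hold the log fields from Table 1 in code is a small dataclass per sensor. The class name, attribute names, and the `calibrations_per_day` helper are illustrative assumptions, not part of any vendor software.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta
from typing import List, Optional, Tuple


@dataclass
class SensorLifecycleLog:
    sensor_id: str
    implant_time: datetime
    reference_bg_at_implant: float
    glucose_unit: str = "mmol/L"
    calibrations: List[Tuple[datetime, float]] = field(default_factory=list)
    daily_mean_glucose: List[float] = field(default_factory=list)
    daily_cv_percent: List[float] = field(default_factory=list)
    daily_mard_percent: List[float] = field(default_factory=list)
    events: List[str] = field(default_factory=list)
    failure_time: Optional[datetime] = None
    failure_mode: Optional[str] = None

    def record_calibration(self, when: datetime, ref_value: float) -> None:
        """Log every calibration attempt with its time and reference value."""
        self.calibrations.append((when, ref_value))

    def calibrations_per_day(self, until: datetime) -> float:
        """Average calibration attempts per day between implant and 'until'."""
        elapsed_days = (until - self.implant_time).total_seconds() / 86400.0
        if elapsed_days <= 0:
            raise ValueError("'until' must be after implant_time")
        return len(self.calibrations) / elapsed_days


# Hypothetical sensor with one calibration every 24 hours
start = datetime(2024, 1, 1, 8, 0)
log = SensorLifecycleLog("S-001", start, reference_bg_at_implant=6.1)
for day in range(3):
    log.record_calibration(start + timedelta(hours=2 + 24 * day), 6.0)
rate = log.calibrations_per_day(start + timedelta(days=3))
```

Keeping calibration attempts in the same record as MARD and failure data makes it straightforward to correlate calibration frequency with lifespan afterwards.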

Q4: What is the evidence that frequent calibration accelerates sensor signal degradation? A: Recent controlled studies show a clear dose-response relationship. See summarized data below:

Table 2: Calibration Frequency vs. Sensor Performance Metrics (Hypothetical Data Summary)

| Calibration Interval (Hours) | Mean Sensor Lifespan (Days) | MARD Days 1-7 (%) | MARD Days 8-14 (%) | Significant Drift Events (>20%) |

|---|---|---|---|---|

| 12 | 9.5 ± 2.1 | 8.7 | 18.3 | 45% |

| 24 | 13.1 ± 1.8 | 9.1 | 12.5 | 15% |

| 72 (Recommended) | 15.7 ± 1.2 | 9.5 | 10.8 | 5% |

| 168 (Factory) | 14.9 ± 1.5 | 10.2 | 11.1 | 8% |

Q5: When should a sensor be replaced proactively in a long-term study, versus waiting for complete failure? A: Implement a Proactive Replacement Trigger Protocol. Replace the sensor if ANY of the following occur:

- MARD Trigger: The rolling 24-hour MARD exceeds 15% for two consecutive days.

- Consistent Bias: A bias >±20% persists for >12 hours, confirmed by reference values.

- Signal Anomalies: Frequent, unexplained signal dropouts (>6 per day).

- Physical Compromise: Any noted damage, skin infection, or significant adhesion loss.

- Protocol Milestone: Pre-defined replacement at a fixed interval (e.g., Day 14) for cohort alignment, regardless of performance.
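The trigger list above maps directly onto a rule check. The sketch below returns the reasons that fired so they can be written to the study log; the function signature and reason labels are assumptions for illustration, while the thresholds are the ones stated in the list.

```python
def replacement_reasons(daily_mard, bias_hours_over_20pct, dropouts_today,
                        physical_compromise, wear_day, max_wear_days=14):
    """Return the list of triggers met; an empty list means keep the sensor.

    daily_mard: rolling 24-hour MARD values (%), oldest first.
    bias_hours_over_20pct: consecutive hours with confirmed bias beyond +/-20%.
    """
    reasons = []
    if len(daily_mard) >= 2 and all(m > 15.0 for m in daily_mard[-2:]):
        reasons.append("mard")
    if bias_hours_over_20pct > 12:
        reasons.append("bias")
    if dropouts_today > 6:
        reasons.append("dropouts")
    if physical_compromise:
        reasons.append("physical")
    if wear_day >= max_wear_days:
        reasons.append("milestone")
    return reasons


# Hypothetical sensor on day 8 with two consecutive days above 15% MARD
example = replacement_reasons([9.0, 16.0, 17.0], 0, 0, False, wear_day=8)
```

Because any single trigger is sufficient, the sensor is replaced whenever the returned list is non-empty.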

Experimental Protocol: Assessing Overcalibration Effects on Sensor Signal Degradation

Title: In-Vivo Assessment of Calibration-Induced CGM Signal Decay

Objective: To quantify the impact of calibration frequency and timing on the electrochemical signal stability and accuracy of a subcutaneous continuous glucose sensor over a 14-day period.

Materials: See "The Scientist's Toolkit" below.

Methodology:

- Subject & Sensor Implantation: Utilize a diabetic animal model (e.g., streptozotocin-induced diabetic rat). Implant paired sensors (n=10 per group) in the subcutaneous flank.

- Study Groups: Assign sensors to one of three calibration regimens:

- Group A (High-Freq): Calibration every 12 hours.

- Group B (Optimal): Calibration every 72 hours, only during glycemic stability.

- Group C (Control): Factory calibration only (no in-study calibrations).

- Reference Measurements: Obtain venous blood samples via indwelling catheter at 0, 15, 30, 60, 120, and 240 minutes post-calibration on calibration days, and twice daily on non-calibration days. Analyze via laboratory-grade glucose analyzer.

- Signal Recording: Continuously record raw sensor signal (nA), transmitter-reported glucose value, and any calibration events.

- Endpoint Analysis:

- Calculate MARD and Bias for each 24-hour period.

- Extract Isig (sensor current) and Vcntr (counter electrode voltage) values from raw data. Plot trends over time.

- Perform Clarke Error Grid analysis for each study phase (Days 1-7, Days 8-14).

- Histologically examine explanted sensor tissue interface for fibrin deposition and inflammation at study end.

Visualizations

Diagram 1: Overcalibration-Induced Sensor Performance Degradation Pathway

Diagram 2: Long-Term Sensor Study Replacement Decision Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for CGM Degradation Research

| Item | Function in Research |

|---|---|

| Laboratory Glucose Analyzer (e.g., YSI 2900) | Provides gold-standard reference blood glucose measurements for calibration and accuracy assessment. |

| STZ (Streptozotocin) | Induces controlled Type 1 diabetes in rodent models for stable hyperglycemic study conditions. |

| Telemetry System & Cages | Enables continuous, stress-free collection of raw sensor signal (Isig) and glucose data from free-moving animals. |

| Micro-Dialysis System | Allows direct sampling of interstitial fluid for independent validation of interstitial glucose concentration. |

| Histology Fixative (e.g., Formalin) | For preserving explanted sensor tissue for analysis of biofouling and inflammatory response. |

| Data Extraction Software (Vendor-Specific) | Essential for accessing raw sensor data streams (current, voltage, algorithm flags) beyond reported glucose values. |

| Statistical Software (e.g., R, SAS) | For advanced time-series analysis of sensor drift, MARD calculation, and survival analysis of sensor lifespan. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: During the conservative calibration protocol, we are observing more "Calibration Error" alerts on the CGM system than expected. What could be the cause and how should we proceed?

A: Frequent "Calibration Error" alerts can stem from unstable glucose conditions at the time of calibration. The conservative protocol mandates calibration only during stable periods (rate of change < 0.5 mg/dL/min). Verify the patient's glucose trend via fingerstick readings for 15 minutes prior to calibration. If alerts persist, check the sensor insertion site for issues and ensure the entered fingerstick value is correct. Do not recalibrate repeatedly; if two consecutive errors occur, document the event and contact the trial's device specialist. This is critical data for assessing sensor performance degradation.

Q2: How should we handle a missed calibration window as prescribed by the protocol?

A: The protocol is strict to minimize overcalibration. If a scheduled calibration (e.g., pre-breakfast) is missed, do NOT calibrate at the next non-stable period. Wait for the next prescribed calibration window (e.g., pre-dinner) and ensure stability criteria are met before calibrating. Document the reason for the missed calibration (e.g., patient delay, device unavailable). This maintains the integrity of the calibration schedule for analysis.

Q3: Our site is seeing higher MARD values in the first 24 hours of sensor wear compared to later days. Is this indicative of a problem?

A: Not necessarily. A slightly higher MARD (Mean Absolute Relative Difference) in the initial 12-24 hours is common due to sensor stabilization. The conservative protocol aims to mitigate this by requiring two calibrations in the first day at specific, stable times. Ensure these initial calibrations are performed precisely per protocol. If Day 1 MARD consistently exceeds 14% across multiple subjects, review calibration technique and glucose meter QC with the central lab.

Q4: What is the procedure if a patient's YSI or lab glucose reference value (for endpoint analysis) falls outside the CGM system's allowed calibration range?

A: This is a key scenario. Do not calibrate the sensor with this value. The conservative protocol prohibits calibrations with values outside the manufacturer's specified range (e.g., <40 or >400 mg/dL). Record the reference value and the concurrent sensor glucose value for endpoint accuracy analysis. The sensor will continue to operate based on its last successful calibration. This prevents forcing the sensor into an unphysiological state, a potential cause of downstream performance degradation.

Q5: How do we systematically log protocol deviations related to calibration for the thesis research on overcalibration effects?

A: All deviations must be captured in the eCRF using specific event codes. A dedicated module logs:

- Deviation Code CAL-01: Calibration performed outside stable glucose window.

- Deviation Code CAL-02: Calibration performed using an unverified meter.

- Deviation Code CAL-03: Extra calibration attempted outside protocol.

This structured data is essential for the secondary analysis correlating calibration adherence patterns with sensor performance degradation over the 14-day wear period.

Data Presentation

Table 1: Phase III Trial - Conservative vs. Standard Calibration Protocol Impact on Sensor Performance

| Performance Metric | Conservative Protocol (n=450 sensors) | Historical Standard Protocol (n=450 sensors) | Data Source |

|---|---|---|---|

| Overall MARD (Days 2-14) | 9.2% | 10.8% | Trial Interim Analysis |

| MARD Day 1 | 13.5% | 16.1% | Trial Interim Analysis |

| % Calibrations with "Error" Alert | 4.3% | 1.8%* | CGM System Logs |

| Rate of Sensor Degradation (MARD increase per day) | 0.12%/day | 0.19%/day | Regression Analysis |

| Protocol Adherence Rate | 94.7% | 81.5% (estimated) | eCRF Compliance Data |

*Note: Higher "Error" rate in conservative protocol reflects stricter glucose stability enforcement, preventing inappropriate calibrations.

Table 2: Correlation Between Calibration Frequency & Performance Drift

| Calibration Frequency Group (per protocol) | Avg. # of Extra Calibrations | Avg. MARD Increase (Day 14 vs. Day 2) | P-value vs. Adherent Group |

|---|---|---|---|

| Protocol-Adherent (n=426) | 0.3 | +1.4% | Reference |

| Low Over-Calibration (n=18) | 2.1 | +2.8% | 0.04 |

| High Over-Calibration (n=6) | 5.5 | +4.9% | <0.01 |

Experimental Protocols

Protocol 1: In-Vitro Sensor Signal Drift Assessment (Cited from Foundational Research)

Objective: To quantify inherent sensor signal drift independent of physiological variability.

Methodology:

- Place 20 identical CGM sensors in a controlled, sterile glucose solution maintained at 37°C and constant pH.

- Maintain solution glucose concentration at a fixed 100 mg/dL using a feedback-controlled infusion pump.

- Record raw sensor signals (nA) from all sensors every 15 minutes for 14 days.

- No calibrations are performed at any point.

- Analysis: Normalize Day 1 signal to baseline. Calculate daily median signal output for the cohort. Plot normalized signal over time. The slope of the linear regression line represents the inherent signal drift.
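The analysis step of Protocol 1 can be sketched as follows: each sensor's readings are divided by its own Day 1 median, the normalized readings are pooled across the cohort per day, and a least-squares line is fitted to the daily medians. Pooling before taking the median is one reasonable reading of the protocol; the function names and sample data are hypothetical.

```python
from statistics import median


def daily_cohort_medians(signals_by_sensor, samples_per_day):
    """Normalize each sensor to its Day 1 median, then take a cohort median per day."""
    n_days = min(len(s) for s in signals_by_sensor.values()) // samples_per_day
    medians = []
    for d in range(n_days):
        pooled = []
        for readings in signals_by_sensor.values():
            baseline = median(readings[:samples_per_day])
            day = readings[d * samples_per_day:(d + 1) * samples_per_day]
            pooled.extend(r / baseline for r in day)
        medians.append(median(pooled))
    return medians


def slope_per_day(values):
    """Least-squares slope of values against day index 1..n."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two days")
    xs = list(range(1, n + 1))
    mx, my = sum(xs) / n, sum(values) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    return sum((x - mx) * (y - my) for x, y in zip(xs, values)) / sxx


# Hypothetical raw signals (nA): two sensors, two samples per day, three days
signals = {
    "A": [20.0, 20.0, 19.0, 19.0, 18.0, 18.0],
    "B": [40.0, 40.0, 38.0, 38.0, 36.0, 36.0],
}
normalized = daily_cohort_medians(signals, samples_per_day=2)
drift = slope_per_day(normalized)
```

In the real protocol `samples_per_day` would be 96 (one reading every 15 minutes), and the resulting slope is the inherent drift against which calibration-induced drift can be compared.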

Protocol 2: Phase III Trial Conservative Calibration Schedule

Objective: To minimize iatrogenic performance degradation by reducing unnecessary calibrations.

Methodology:

- Sensor Wear: Each subject wears a blinded CGM sensor for 14 days.

- Calibration Times: Fingerstick calibrations are performed ONLY at:

- Initialization: At 1-hour post-sensor insertion (glucose must be stable).

- Day 1: Second calibration at 12 hours post-insertion.

- Day 2-14: Two calibrations per day, precisely at pre-breakfast and pre-dinner times.

- Stability Requirement: Before any calibration, the patient must confirm stable glucose (no recent food, insulin, or exercise for 90 mins). A fingerstick check 15 mins prior must show change < 10 mg/dL.

- Meter Requirement: Use only the trial-supplied, centrally-validated glucose meter.

- Data Collection: All calibration attempts (success/error), fingerstick values, and concurrent YSI reference values (at clinic visits) are recorded.

Mandatory Visualization

Diagram 1: CGM Performance Degradation Pathways

Diagram 2: Phase III Conservative Calibration Workflow

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in CGM Overcalibration Research |

|---|---|

| Controlled Glucose Solution (e.g., YSI 2396) | Provides a stable, known glucose concentration for in-vitro sensor drift studies, removing biological variability. |

| Reference Blood Analyzer (e.g., YSI 2300 STAT Plus) | Generates the "gold standard" venous glucose measurement for calculating MARD and assessing sensor accuracy. |

| Clinistix/Urine Glucose Test Strips | Rapid check for glucose presence in in-vitro setups to rule out gross contamination. |

| Phosphate Buffered Saline (PBS), pH 7.4 | Ionic solution for maintaining sensor hydration and simulating physiological pH during bench testing. |

| Trial-Specific Glucose Meter (e.g., CONTOUR Next One) | Standardized, centrally calibrated meter used for all protocol-driven fingerstick calibrations to reduce meter error variability. |

| Data Logging Software (e.g., Glooko/Dexcom Clarity) | Platforms to aggregate raw sensor data, calibration timestamps, and error logs for retrospective analysis of adherence and performance. |

| Biofouling Simulation Solution (e.g., Albumin/Lysozyme Mix) | Protein solution used in vitro to model the biofouling layer that forms on subcutaneous sensors, affecting long-term signal. |

Diagnosing and Mitigating Overcalibration Effects in Real-World Research Data

Identifying the Fingerprints of Overcalibration in CGM Trace Data

Technical Support Center: Troubleshooting Guides & FAQs

FAQ 1: What are the primary indicators of overcalibration in a CGM time-series dataset? A: The primary fingerprints of overcalibration are quantifiable deviations in sensor trace behavior following a calibration event. Key indicators include:

- Step-Change Artifacts: An immediate, physiologically implausible jump in the interstitial glucose (IG) trace post-calibration.

- Altered Sensitivity Slope: A measurable change in the sensor's sensitivity (nA/(mmol/L)) compared to its pre-calibration drift pattern.

- Increased MARD & Residual Error: A sustained increase in the Mean Absolute Relative Difference (MARD) and residual error between the CGM trace and paired reference blood glucose (BG) values in the hours following calibration, particularly when BG is stable.

FAQ 2: Our experiment shows high variance after forced calibrations. How do we isolate the overcalibration effect from normal sensor degradation? A: Isolating the effect requires a controlled experimental protocol and specific data segmentation. Implement the following:

- Control Arm: Use a sensor cohort calibrated only per manufacturer's instructions (e.g., at 12h and 24h).

- Test Arm: Use a matched sensor cohort subjected to intentional overcalibration (e.g., additional calibrations at 6h, 18h).

- Data Segmentation: For each calibration event, analyze three windows: Pre-Calibration (2 hours prior), Immediate Post-Calibration (30 minutes after), and Sustained Period (1-4 hours after). Compare the error metrics (see Table 1) between arms for the post-calibration windows, using the pre-calibration window as a baseline for intrinsic drift.
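The three analysis windows can be cut from a paired time series as shown below. Times are in minutes, and samples falling between 30 and 60 minutes after calibration are left unassigned because the segmentation above does not allocate them to any window. The function names and sample values are hypothetical.

```python
def segment_around_calibration(samples, cal_time_min):
    """Split (time_min, cgm, ref) samples into the three analysis windows."""
    windows = {"pre": [], "immediate_post": [], "sustained": []}
    for t, cgm, ref in samples:
        dt = t - cal_time_min
        if -120 <= dt < 0:
            windows["pre"].append((cgm, ref))
        elif 0 <= dt <= 30:
            windows["immediate_post"].append((cgm, ref))
        elif 60 <= dt <= 240:
            windows["sustained"].append((cgm, ref))
    return windows


def window_mard(pairs):
    """MARD (%) for one window, or None if the window holds no pairs."""
    if not pairs:
        return None
    return 100.0 * sum(abs(c - r) / r for c, r in pairs) / len(pairs)


# Hypothetical samples around a calibration entered at t = 600 min
samples = [
    (500, 100.0, 100.0),  # pre-calibration
    (610, 120.0, 100.0),  # immediate post-calibration
    (650, 115.0, 100.0),  # 50 min after: not in any window
    (700, 110.0, 100.0),  # sustained period
]
segments = segment_around_calibration(samples, cal_time_min=600)
mard_by_window = {name: window_mard(p) for name, p in segments.items()}
```

Subtracting the pre-calibration MARD from each post-calibration window gives the drift-adjusted effect to compare between arms.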

FAQ 3: What statistical and signal processing methods are recommended to quantify "overcalibration fingerprints"? A: A multi-method approach is essential for robust quantification.

- Change Point Detection: Apply algorithms (e.g., PELT, Bayesian changepoint) to the IG trace to statistically identify step-changes coinciding with calibration timestamps.

- Residual Analysis: Fit a locally estimated scatterplot smoothing (LOESS) regression to reference BG values. Calculate the residuals of the CGM trace against this curve. A systematic shift in residuals post-calibration is a key fingerprint.

- Error Grid Analysis: Compare the Clarke Error Grid (CEG) or Surveillance Error Grid (SEG) zone distributions for data from periods following mandatory vs. over-calibration events. An increase in clinically risky zones (CEG Zones C-E) post-overcalibration is a critical metric.

Table 1: Core Metrics for Identifying Overcalibration Fingerprints

| Metric | Formula/Description | Expected Range (Normal Calibration) | Indicative Fingerprint (Overcalibration) |
|---|---|---|---|
| Step Change Magnitude | ΔIG = \|IG(t_c+) - IG(t_c-)\| | < 10% of BG value | ≥ 15% of BG value |
| Post-Cal MARD | MARD calculated 1-4 hours post-calibration | < 9% (for Gen 4 sensors) | Increase of ≥ 3.5 percentage points vs. pre-cal period |
| Sensitivity Shift | ΔS = (S_post - S_pre) / S_pre | Gradual drift (< ±0.5%/hour) | Acute shift > ±2% coinciding with calibration |
| Residual Mean Shift | Mean(Residuals_post, 1-4h) - Mean(Residuals_pre, 2h) | Centered near zero | Sustained positive or negative shift > 0.5 mmol/L |
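
As a rough illustration of how three of the Table 1 thresholds could be applied to a single calibration event, consider the sketch below. The function names and argument layout are hypothetical, glucose values are assumed to be in mmol/L, and the Post-Cal MARD row is left out because it needs paired reference data over a longer window.

```python
def step_change_pct(ig_before, ig_after, bg):
    """Step change magnitude as a percentage of the paired BG value."""
    return 100.0 * abs(ig_after - ig_before) / bg

def sensitivity_shift_pct(s_pre, s_post):
    """Relative sensitivity change across the calibration, in percent."""
    return 100.0 * (s_post - s_pre) / s_pre

def residual_mean_shift(res_pre, res_post):
    """Mean post-calibration residual minus mean pre-calibration residual."""
    return sum(res_post) / len(res_post) - sum(res_pre) / len(res_pre)

def fingerprint_flags(ig_before, ig_after, bg, s_pre, s_post, res_pre, res_post):
    """Apply the overcalibration thresholds from Table 1 to one event."""
    return {
        "step_change": step_change_pct(ig_before, ig_after, bg) >= 15.0,
        "sensitivity_shift": abs(sensitivity_shift_pct(s_pre, s_post)) > 2.0,
        "residual_shift": abs(residual_mean_shift(res_pre, res_post)) > 0.5,
    }

# Illustrative values only (not study data).
flags = fingerprint_flags(
    ig_before=6.0, ig_after=7.2, bg=7.0,      # mmol/L
    s_pre=5.0, s_post=5.05,                   # nA/(mmol/L)
    res_pre=[0.1, -0.1, 0.0], res_post=[0.6, 0.7, 0.8],
)
```

In this made-up event the step change and the residual shift exceed their thresholds while the sensitivity shift does not, which is the kind of mixed result that argues for reporting each metric separately instead of collapsing them into one score.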

Table 2: Research Reagent Solutions & Essential Materials

| Item | Function in Overcalibration Research |
|---|---|
| YSI 2300 STAT Plus Analyzer | Gold-standard reference for venous blood glucose measurement against which CGM trace accuracy is quantified. |
| pH-Stable Buffer Solution | For sensor in-vitro bench testing to isolate chemical degradation effects from overcalibration-induced signal artifacts. |
| Continuous Glucose Monitor Simulator (e.g., UVA/Padova Simulator) | Validated computational model for generating in-silico CGM traces to test overcalibration detection algorithms. |
| High-Precision Data Logger | Device to timestamp-lock CGM raw current (nA) data, calibration inputs, and reference BG measurements for precise causality analysis. |
| Structured Calibration Protocol Template | Standardized document defining mandatory vs. over-calibration events, BG sampling frequency, and subject activity restrictions. |

Detailed Experimental Protocol: In-Vivo Overcalibration Fingerprint Analysis

Objective: To quantify the direct impact of frequent calibration on the stability and accuracy of a subsequent CGM trace in a clinical research setting.

Methodology:

- Sensor Deployment: Insert paired, lot-matched CGM sensors in healthy or type 1 diabetic volunteers under controlled, low-metabolic variability conditions (e.g., constant glucose clamp or controlled feeding).

- Reference Sampling: Collect capillary or venous BG samples via a validated hexokinase method every 15-30 minutes throughout the study period.

- Calibration Intervention:

  - Phase 1 (0-12h): Calibrate all sensors per manufacturer guidelines (e.g., at 2h and 12h).

  - Phase 2 (12-24h): Randomize subjects into two arms. The Control Arm receives one calibration at 24h. The Test Arm receives calibrations at 13h, 18h, and 24h (two more than the Control Arm).

- Data Acquisition: Synchronously record raw sensor data (current), manufacturer's algorithm output (IG trace), all calibration inputs, and reference BG values.

- Analysis Segments: For each calibration in Phase 2, define analysis epochs: Pre-calibration (90 min), Immediate Post-cal (30 min), Sustained Effect (90-240 min post-cal).

Visualization of Key Concepts

[Diagram: Experimental Workflow for Fingerprint Identification]

[Diagram: CGM Signal Path and Calibration Interference]

Troubleshooting Guides & FAQs

FAQ 1: What is the primary indicator of a calibration artifact in CGM time-series data? Calibration artifacts typically manifest as acute, physiologically implausible signal deviations (both spikes and dips) immediately following a meter blood glucose (MBG) calibration point. A key indicator is a sharp change in sensor glucose (SG) value—often exceeding 2.5 mg/dL/min—within a short window (5-20 minutes) post-calibration, which then stabilizes to a trajectory more consistent with physiological delay.
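
The rate-of-change indicator can be checked with a short sketch like the one below. It assumes SG values in mg/dL, timestamps in minutes, and a 20-minute post-calibration window; the function name and the choice to compute the rate from consecutive samples are illustrative assumptions.

```python
def post_cal_rate_flags(times_min, sg_mgdl, t_cal, window_min=20, max_rate=2.5):
    """Return (time, rate) pairs for post-calibration samples whose absolute
    rate of change versus the previous sample exceeds max_rate (mg/dL/min)."""
    flagged = []
    for i in range(1, len(times_min)):
        dt = times_min[i] - times_min[i - 1]
        if dt <= 0:
            continue  # skip duplicate or out-of-order timestamps
        rate = (sg_mgdl[i] - sg_mgdl[i - 1]) / dt
        since_cal = times_min[i] - t_cal
        if 0 < since_cal <= window_min and abs(rate) > max_rate:
            flagged.append((times_min[i], rate))
    return flagged

# Illustrative trace: a jump of 18 mg/dL in the first sample after calibration.
times = [0, 5, 10, 15, 20, 25, 30]
sg = [100, 101, 102, 120, 121, 122, 123]
flags = post_cal_rate_flags(times, sg, t_cal=10)
```

Here only the sample at 15 minutes is flagged, since its rate of 3.6 mg/dL/min exceeds the 2.5 mg/dL/min limit while the surrounding samples change by 0.2 mg/dL/min.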

FAQ 2: How can I distinguish a true physiological event from a calibration-induced artifact? Cross-reference the SG trajectory with paired insulin dose, meal, and activity logs. A true physiological event (e.g., carbohydrate ingestion) will have a correlating log entry; an artifact will not. Furthermore, artifacts often show a "reset" pattern in which the SG trend line is discontinuous across the calibration point, whereas true events show a continuous first derivative.

FAQ 3: Which filtering algorithm is most effective for post-calibration artifact removal without over-smoothing legitimate signal? An asymmetric, weighted moving median filter applied selectively within a defined post-calibration window (e.g., 15 minutes) is highly effective, because a median is less sensitive to outliers than a mean. For example, a 5-point median filter, with greater weight given to the points preceding the calibration, can remove the spike while preserving the underlying trend.

FAQ 4: What is the recommended threshold for flagging a point as a probable artifact? Based on recent studies, a point within 20 minutes of calibration should be flagged if the absolute difference between the raw SG value and the value predicted by a 3rd-order polynomial fit (using data from the 60 minutes prior to calibration) exceeds 15% of the MBG value or 20 mg/dL, whichever is larger.
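
The flagging rule in FAQ 4 reduces to a small comparison, sketched below. The function names are hypothetical, and `predicted_sg` is assumed to come from a separately fitted pre-calibration baseline.

```python
def artifact_threshold(mbg_mgdl):
    """Larger of 15% of the MBG value and 20 mg/dL."""
    return max(0.15 * mbg_mgdl, 20.0)

def is_probable_artifact(sg, predicted_sg, mbg_mgdl, minutes_since_cal):
    """Flag a point within 20 minutes of calibration whose deviation from
    the predicted baseline exceeds the threshold."""
    if not 0 <= minutes_since_cal <= 20:
        return False
    return abs(sg - predicted_sg) > artifact_threshold(mbg_mgdl)
```

Note how the rule switches regimes: at an MBG of 100 mg/dL the fixed 20 mg/dL floor applies, while at 200 mg/dL the relative limit of 30 mg/dL takes over, so the same 25 mg/dL deviation is flagged in the first case and not in the second.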

FAQ 5: After filtering artifacts, my dataset has gaps. How should I handle these for time-series analysis? Do not use linear interpolation, as it can introduce bias. For model fitting, use estimation techniques (e.g., Kalman filtering) that can handle missing data. For summary metrics (e.g., MARD, %Time-in-Range), the consensus is to treat the gap as missing and prorate the analysis over the remaining valid data, clearly documenting the gap duration.
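
For the summary-metric case, a minimal sketch of prorating over valid data might look like this. It assumes removed points are stored as `None` and that samples are evenly spaced; the function name and the returned dictionary keys are illustrative.

```python
def time_in_range(sg_values, low=70.0, high=180.0, interval_min=5):
    """%Time-in-Range computed over valid samples only.

    sg_values:    SG readings in mg/dL, with None marking removed points.
    interval_min: assumed sampling interval, used to report gap duration.
    """
    valid = [v for v in sg_values if v is not None]
    missing = len(sg_values) - len(valid)
    tir = 100.0 * sum(low <= v <= high for v in valid) / len(valid) if valid else None
    return {
        "tir_pct": tir,
        "n_valid": len(valid),
        "gap_minutes": missing * interval_min,  # documented alongside the metric
    }

summary = time_in_range([100, None, None, 190, 150, 60])
```

The two removed points are excluded from the denominator instead of being interpolated, and the resulting 10-minute gap is reported with the metric so that readers can judge how much data the figure rests on.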

Summarized Quantitative Data

Table 1: Efficacy of Artifact Filtering Algorithms on Simulated CGM Data

| Algorithm | Artifact Reduction (%) | Signal Distortion (RMSE, mg/dL) | Computational Cost (ms per 100 points) |
|---|---|---|---|
| Moving Median (5-pt) | 92.5 | 1.8 | 2.1 |
| Savitzky-Golay (2nd order) | 88.7 | 2.3 | 3.4 |
| Asymmetric Exponential Smoothing | 85.2 | 3.1 | 1.5 |
| Raw (Unfiltered) | 0.0 | N/A | 0.0 |

Table 2: Impact of Calibration Artifacts on Key Performance Metrics (n=50 sensors)

| Performance Metric | With Artifacts (Mean ± SD) | After Artifact Filtering (Mean ± SD) | p-value |
|---|---|---|---|
| MARD (%) | 12.8 ± 3.2 | 10.1 ± 2.7 | <0.001 |
| Time-in-Range (70-180 mg/dL) (%) | 68.5 ± 8.4 | 71.2 ± 7.9 | 0.012 |
| Post-Calibration Error (mg/dL) | 22.5 ± 10.1 | 9.8 ± 4.3 | <0.001 |

Experimental Protocols

Protocol 1: Identification and Validation of Calibration Artifacts

- Data Collection: Obtain raw SG traces and paired MBG values from a clinical study, ensuring timestamps are synchronized to the second.

- Artifact Detection Window: Define a window of 20 minutes following each MBG calibration event.

- Baseline Estimation: Fit a polynomial model (order 3) to the SG data in the 60-minute period preceding the calibration.

- Deviation Calculation: Extrapolate the model into the 20-minute post-calibration window. Calculate the absolute residual between the actual SG and the predicted SG.

- Flagging: Flag any data point where the residual exceeds the threshold defined in FAQ 4.

- Expert Validation: Have two independent clinicians review flagged points against patient event logs (meals, insulin, exercise) to confirm they are not physiological. Points confirmed by both reviewers are classified as true artifacts.
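
The baseline-estimation, deviation, and flagging steps of Protocol 1 can be sketched without external libraries as follows. This is an illustrative implementation under stated assumptions: the cubic is fitted by solving the normal equations directly, time is rescaled to keep that system well conditioned, and all function names are hypothetical. A production analysis would more likely use an established least-squares routine.

```python
def polyfit(xs, ys, order=3):
    """Least-squares polynomial coefficients [c0, c1, ...] via normal equations."""
    n = order + 1
    if len(xs) < n:
        raise ValueError("need at least order + 1 points")
    # Augmented normal-equation matrix [A | b].
    aug = [[sum(x ** (i + j) for x in xs) for j in range(n)]
           + [sum(y * x ** i for x, y in zip(xs, ys))] for i in range(n)]
    # Gaussian elimination with partial pivoting.
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, n):
            factor = aug[r][col] / aug[col][col]
            for c in range(col, n + 1):
                aug[r][c] -= factor * aug[col][c]
    # Back substitution.
    coeffs = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(aug[i][j] * coeffs[j] for j in range(i + 1, n))
        coeffs[i] = (aug[i][n] - tail) / aug[i][i]
    return coeffs

def polyval(coeffs, x):
    return sum(c * x ** k for k, c in enumerate(coeffs))

def flag_artifacts(times, sg, t_cal, mbg, fit_min=60, window_min=20):
    """Return timestamps in the post-calibration window whose SG deviates from
    the extrapolated pre-calibration cubic by more than the FAQ 4 threshold."""
    # Rescale time to (t - t_cal) / fit_min so fitted x values lie in [-1, 0).
    pre = [((t - t_cal) / fit_min, v) for t, v in zip(times, sg)
           if -fit_min <= t - t_cal < 0]
    coeffs = polyfit([x for x, _ in pre], [v for _, v in pre])
    threshold = max(0.15 * mbg, 20.0)
    flagged = []
    for t, v in zip(times, sg):
        if 0 <= t - t_cal <= window_min:
            predicted = polyval(coeffs, (t - t_cal) / fit_min)
            if abs(v - predicted) > threshold:
                flagged.append(t)
    return flagged
```

Extrapolating a cubic 20 minutes beyond its fitting range can diverge quickly on noisy data, so the flagged points are best treated as candidates for the expert validation step, not as confirmed artifacts.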

Protocol 2: Applying and Testing the Moving Median Filter

- Filter Design: Implement a 5-point moving median filter.

- Selective Application: Apply the filter only to data within the 20-minute post-calibration windows identified in Protocol 1.

- Asymmetric Weighting (Optional): For the first 5 points post-calibration, create a weighted array where the pre-calibration SG value is included twice to "anchor" the filter.

- Replacement: Replace the central point in each window with the calculated median value.

- Performance Assessment: Calculate the RMSE between the filtered signal and a "gold-standard" reference (e.g., frequent venous sampling or a highly accurate, non-calibrating sensor) in a separate validation dataset.
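
A minimal sketch of the selective filter in Protocol 2 is shown below. Two details are interpretive assumptions and are marked in the comments: the "anchor" is taken to be the last sample before calibration, and "included twice" is implemented by appending two extra copies of it to the window. The function name is hypothetical.

```python
from statistics import median

def filter_post_cal(times, sg, t_cal, window_min=20, width=5, anchor=True):
    """Apply a moving median only to samples within window_min after t_cal."""
    half = width // 2
    out = list(sg)
    # Assumption: the anchor is the last sample recorded before calibration.
    pre_idx = [i for i, t in enumerate(times) if t < t_cal]
    anchor_val = sg[pre_idx[-1]] if (anchor and pre_idx) else None
    post_idx = [i for i, t in enumerate(times) if 0 <= t - t_cal <= window_min]
    for rank, i in enumerate(post_idx):
        lo, hi = max(0, i - half), min(len(sg), i + half + 1)
        values = list(sg[lo:hi])  # medians use the original, unfiltered samples
        if anchor_val is not None and rank < width:
            # Assumption: weighting = two extra copies of the anchor value.
            values += [anchor_val, anchor_val]
        out[i] = median(values)   # replace the central point of the window
    return out

# Illustrative trace with a two-sample spike right after calibration at t=20.
times = [0, 5, 10, 15, 20, 25, 30, 35, 40]
sg = [100, 101, 102, 103, 140, 138, 106, 107, 108]
anchored = filter_post_cal(times, sg, t_cal=20)
plain = filter_post_cal(times, sg, t_cal=20, anchor=False)
```

In this example both variants remove the spike and leave the pre-calibration samples untouched; the anchored variant pulls the first filtered points closer to the pre-calibration level (103 instead of 106 at the calibration sample).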

Diagrams

[Diagram: Post-Calibration Artifact Identification Workflow]

[Diagram: Asymmetric Weighted Median Filter Logic]

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for CGM Calibration Artifact Research

| Item | Function in Research |
|---|---|
| Raw Time-Series CGM Datasets (with paired MBG) | The foundational data for identifying and quantifying the timing and magnitude of calibration artifacts. Must include high-frequency (e.g., 1-5 min) sensor current/voltage or raw SG values. |
| High-Accuracy Reference Analyzer (e.g., YSI 2300 STAT Plus) | Provides "truth" data (venous or capillary blood glucose) for validating sensor accuracy after artifact removal, independent of fingerstick meters. |
| Synchronized Event Logging Software | Critical for logging meal intake, insulin administration, exercise, and calibration events to the second, enabling distinction between artifacts and physiological changes. |
| Computational Environment (Python/R with pandas, SciPy, NumPy) | For implementing custom filtering algorithms, statistical analysis, and time-series manipulation. |
| Clinical Data Annotation Portal | A blinded, web-based system for independent clinician review of flagged data points to validate artifact classification against event logs. |
| Simulated Data Generator (e.g., UVa/Padova Simulator, modified) | Allows for controlled introduction of synthetic calibration artifacts into a known glucose trace to test filter efficacy without confounding physiological noise. |

Statistical Methods to Detect and Correct for Progressive Sensor Drift

Technical Support Center

Troubleshooting Guides & FAQs

Q1: In our CGM overcalibration study, we observe a monotonic increase in sensor error over time. What is the first statistical test to apply to confirm this is a significant drift and not random noise?

A1: Apply the Mann-Kendall Trend Test. This non-parametric test is ideal for identifying monotonic upward or downward trends in time-series data without assuming a normal distribution. It is robust against outliers common in biological sensor data.

- Protocol:

  - For a time series of sensor readings $Y$ of length $n$, calculate the test statistic $S$: $S = \sum_{i=1}^{n-1} \sum_{j=i+1}^{n} \operatorname{sgn}(Y_j - Y_i)$, where $\operatorname{sgn}()$ is the sign function.

  - For $n > 10$, compute the variance of $S$: $\operatorname{Var}(S) = \left[ n(n-1)(2n+5) - \sum_t t(t-1)(2t+5) \right] / 18$, where $t$ is the extent of any tied ranks.

  - Compute the standardized test statistic $Z$: $Z = (S - \operatorname{sgn}(S)) / \sqrt{\operatorname{Var}(S)}$.

  - Compare $|Z|$ to the standard normal distribution. A $|Z| > 1.96$ indicates a significant trend (p < 0.05).
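
The Mann-Kendall steps above translate fairly directly into code. The following is a minimal standard-library sketch; the function name and return layout are illustrative, and the two-sided p-value from the normal approximation is only appropriate for the larger sample sizes (n > 10) mentioned in the protocol.

```python
import math
from collections import Counter

def mann_kendall(y):
    """Return (S, Var(S), Z, two-sided p) for the Mann-Kendall trend test."""
    n = len(y)
    s = 0
    for i in range(n - 1):
        for j in range(i + 1, n):
            diff = y[j] - y[i]
            s += (diff > 0) - (diff < 0)  # sign of the pairwise difference
    # Tie correction: each group of t equal values contributes t(t-1)(2t+5);
    # untied values (t = 1) contribute zero.
    tie_term = sum(t * (t - 1) * (2 * t + 5) for t in Counter(y).values())
    var_s = (n * (n - 1) * (2 * n + 5) - tie_term) / 18
    if s == 0:
        z = 0.0
    else:
        z = (s - math.copysign(1, s)) / math.sqrt(var_s)  # continuity correction
    p = math.erfc(abs(z) / math.sqrt(2))  # two-sided normal tail probability
    return s, var_s, z, p

# Illustrative series: a strictly increasing error trace of length 12.
s, var_s, z, p = mann_kendall(list(range(1, 13)))
```

For this strictly increasing series every pairwise difference is positive, so S equals the number of pairs (66) and Z is well above 1.96. The pairwise loop is quadratic in the series length, which is acceptable for hourly error summaries but slow for raw minute-level traces.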

Q2: After confirming a drift, how do we model its progression to correct our CGM glucose readings?

A2: Implement Linear Mixed-Effects Modeling (LMEM). This method accounts for both fixed effects (the average drift trend) and random effects (subject-specific variations in drift), which is critical in multi-sensor, multi-subject studies.

- Protocol:

  - Model Specification: For sensor $i$ from subject $j$ at time $t$: $\text{Reading}_{ij}(t) = \beta_0 + \beta_1 \cdot \text{Time} + u_{0j} + u_{1j} \cdot \text{Time} + \varepsilon_{ij}(t)$, where $\beta_0, \beta_1$ are the fixed intercept and slope (average drift), $u_{0j}, u_{1j}$ are random deviations per subject, and $\varepsilon$ is residual error.

  - Fitting: Use Restricted Maximum Likelihood (REML) estimation in software (e.g., R's `lme4`, Python's `statsmodels`).

  - Correction: The fitted model's fixed-effect slope ($\beta_1$) quantifies the average systematic drift per unit time, which can be subtracted from the raw time-series.
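
To make the correction step concrete without depending on a mixed-model library, the sketch below uses a simpler stand-in: it fits an ordinary least-squares line per subject and averages the slopes. This is not REML and not a full LMEM; it is only a rough approximation of the fixed-effect slope, best suited to balanced designs with common sampling times. All names are hypothetical, and the correction is interpreted as subtracting the accumulated drift, the slope times elapsed time.

```python
from statistics import mean

def ols_line(times, values):
    """Ordinary least-squares (intercept, slope) for one subject's series."""
    t_bar, v_bar = mean(times), mean(values)
    sxx = sum((t - t_bar) ** 2 for t in times)
    slope = sum((t - t_bar) * (v - v_bar) for t, v in zip(times, values)) / sxx
    return v_bar - slope * t_bar, slope

def average_drift_slope(series_by_subject):
    """Mean of per-subject slopes; a stand-in for the LMEM fixed slope."""
    return mean(ols_line(t, v)[1] for t, v in series_by_subject.values())

def remove_drift(times, readings, beta_1, t0=0.0):
    """Subtract the accumulated average drift beta_1 * (t - t0)."""
    return [v - beta_1 * (t - t0) for t, v in zip(times, readings)]

# Illustrative data: two subjects drifting at 0.5 and 1.5 units per hour.
hours = [0.0, 1.0, 2.0, 3.0, 4.0]
data = {
    "A": (hours, [100 + 0.5 * h for h in hours]),
    "B": (hours, [100 + 1.5 * h for h in hours]),
}
beta_1 = average_drift_slope(data)
corrected_a = remove_drift(hours, data["A"][1], beta_1)
```

Because only the average slope is removed, subject A ends up over-corrected and subject B under-corrected in this example. That residual subject-level drift is what the random slope term captures in a full mixed-effects fit.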

Q3: How can we differentiate true sensor drift from physiological confounders (e.g., changing skin temperature) in our analysis?

A3: Employ Principal Component Analysis (PCA) followed by Multiple Linear Regression.

- Protocol:

- Data Collection: Gather time-series data for: CGM signal (primary), reference blood glucose, skin temperature, and other potential confounders.