Beyond the Noise: Avoiding Critical Errors in CGM Data Interpretation and Pattern Recognition for Drug Development

Continuous Glucose Monitoring (CGM) data is pivotal for metabolic drug development, yet common errors in its interpretation can lead to flawed trial endpoints and scientific conclusions.

Abstract

Continuous Glucose Monitoring (CGM) data is pivotal for metabolic drug development, yet common errors in its interpretation can lead to flawed trial endpoints and scientific conclusions. This article addresses researchers, scientists, and drug development professionals by exploring the fundamental pitfalls in CGM signal analysis, detailing robust methodological frameworks for accurate pattern recognition, providing troubleshooting strategies for noisy or anomalous datasets, and establishing validation protocols against gold-standard measures. It provides a comprehensive guide to extracting reliable, actionable insights from CGM data while avoiding costly misinterpretations that can derail clinical research.

Decoding the Signal: Foundational Errors in CGM Data Interpretation for Researchers

Continuous Glucose Monitoring (CGM) data provides critical endpoints for metabolic research and therapeutic development. Correct interpretation of the core metrics, Time in Range (TIR), Time Above Range (TAR), Time Below Range (TBR), and Glucose Variability (GV), is essential. This technical support center addresses frequent misinterpretations of these metrics and the pattern recognition errors that follow from them.

Troubleshooting Guides & FAQs

Q1: In our drug trial, TIR improved significantly, but the study failed its primary endpoint. Are we miscalculating TIR? A: Likely. A common misapplication is using non-consensus ranges. For regulatory and most interventional studies, the standard ranges are:

- Hypoglycemia: <54 mg/dL (<3.0 mmol/L) for Level 2; <70 mg/dL (<3.9 mmol/L) for Level 1.

- Target Range: 70-180 mg/dL (3.9-10.0 mmol/L).

- Hyperglycemia: >180 mg/dL (>10.0 mmol/L) for Level 1; >250 mg/dL (>13.9 mmol/L) for Level 2.

Using institutional or outdated ranges (e.g., 70-140 mg/dL) invalidates cross-trial comparisons. Protocol: Always validate CGM device configuration files to ensure the embedded alarm and range thresholds align with the 2019 International Consensus targets before study initiation.
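The consensus ranges above can be pinned in one place so that no analysis script silently uses other boundaries. The following is a minimal sketch, assuming readings are already in mg/dL and evenly sampled; the names `THRESHOLDS` and `range_metrics` are illustrative and not part of any CGM vendor tool. Level 1 percentages are cumulative here (time below 70 mg/dL includes time below 54 mg/dL), which is an assumption that should match the study's statistical analysis plan.

```python
# Consensus thresholds in mg/dL, copied from the ranges listed above.
THRESHOLDS = {"tbr_level2": 54, "tbr_level1": 70, "tar_level1": 180, "tar_level2": 250}


def range_metrics(readings_mg_dl, thresholds=THRESHOLDS):
    """Return the percentage of readings falling in each consensus range."""
    n = len(readings_mg_dl)
    if n == 0:
        raise ValueError("no readings supplied")

    def pct(condition):
        # Percentage of readings for which `condition` is true.
        return 100.0 * sum(1 for g in readings_mg_dl if condition(g)) / n

    lo2, lo1 = thresholds["tbr_level2"], thresholds["tbr_level1"]
    hi1, hi2 = thresholds["tar_level1"], thresholds["tar_level2"]
    return {
        "TBR_lt54": pct(lambda g: g < lo2),
        "TBR_lt70": pct(lambda g: g < lo1),       # cumulative: includes <54
        "TIR_70_180": pct(lambda g: lo1 <= g <= hi1),
        "TAR_gt180": pct(lambda g: g > hi1),      # cumulative: includes >250
        "TAR_gt250": pct(lambda g: g > hi2),
    }


# Hypothetical ten-reading trace, for illustration only.
trace = [50, 65, 100, 150, 180, 200, 260, 70, 120, 90]
metrics = range_metrics(trace)
```

Because the three cumulative buckets (below 70, 70-180, above 180) partition the data, they should always sum to 100%, which is a cheap sanity check on any implementation.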

Q2: We observe a reduced TAR but unchanged TBR. However, hypoglycemia events increased. How is this possible? A: This indicates a misinterpretation of TBR. TBR is a percentage of time, not an event count. A patient can have frequent, severe hypoglycemic events without a high TBR if each event is brief, so TBR alone can hide a change in the event pattern. Protocol: Always analyze TBR in conjunction with event-based metrics. For a 14-day CGM trace, count all episodes where glucose is <54 mg/dL for at least 15 minutes. A significant increase in event count, even with stable TBR, signals increased hypoglycemia risk.
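The event count described in this protocol can be sketched as a run-length scan. This is an illustration under stated assumptions: readings are evenly spaced at `interval_min` with no gaps, and an episode's duration is taken as the number of consecutive sub-threshold readings times the sampling interval. The function name `hypo_event_durations` is hypothetical.

```python
def hypo_event_durations(readings_mg_dl, threshold=54, interval_min=5,
                         min_duration_min=15):
    """Return the duration (minutes) of each qualifying hypoglycemic episode."""
    # Smallest number of consecutive readings that spans the minimum duration.
    min_run = -(-min_duration_min // interval_min)  # ceiling division
    durations = []
    run = 0
    # A sentinel above the threshold closes any episode still open at the end.
    for g in list(readings_mg_dl) + [float("inf")]:
        if g < threshold:
            run += 1
        else:
            if run >= min_run:
                durations.append(run * interval_min)
            run = 0
    return durations


# Hypothetical trace: one 15-min episode, one isolated low reading, one 20-min episode.
trace = [100, 50, 52, 49, 100, 53, 100, 40, 41, 42, 43]
events = hypo_event_durations(trace)
```

Reporting `len(events)` next to TBR makes the distinction explicit: the isolated single reading contributes to TBR but not to the event count.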

Q3: Our compound shows a strong effect on mean glucose but minimal impact on Glucose Variability (GV). Is GV relevant? A: Yes. GV (e.g., Coefficient of Variation [CV], Standard Deviation [SD]) captures stability, which is mechanistically distinct from average glucose. A drug lowering mean glucose but increasing GV may induce dangerous glucose swings. A common error is reporting only SD, which is correlated with mean glucose. Protocol: Calculate both SD and CV (%CV = [SD/Mean Glucose] x 100). For clinical interpretation, target a %CV ≤36%. Analyze GV in stratified cohorts based on mean glucose to isolate true variability effects.
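A short sketch of the %CV formula above, which also shows why SD alone is misleading. It assumes the sample standard deviation (n - 1 denominator); some tools use the population form, so the choice should be pre-specified. The function name and both traces are hypothetical.

```python
import statistics


def glucose_variability(readings_mg_dl):
    """Return mean, SD, and %CV = (SD / mean) x 100 for one trace."""
    mean = statistics.fmean(readings_mg_dl)
    sd = statistics.stdev(readings_mg_dl)  # sample SD (n - 1 denominator)
    return {"mean": mean, "sd": sd, "cv_pct": 100.0 * sd / mean}


# Two hypothetical traces: the second is the first scaled by 2.
low = glucose_variability([100, 120, 140, 160, 180])
high = glucose_variability([200, 240, 280, 320, 360])
# SD doubles with the mean, while %CV is identical for both traces.
```

In this example SD alone would suggest the second trace is twice as variable, whereas %CV shows the relative variability is unchanged.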

Q4: During sensor reconciliation, we notice significant gaps in data. How should we handle this for metric calculation? A: Data gaps >48 hours can skew weekly metrics. A major error is calculating metrics from insufficient data. Protocol: Adhere to the "14-day/70% rule." For a reliable 14-day assessment, require at least 10 days (70%) of continuous CGM data. For a 7-day assessment, require at least 5 days. Prune datasets that don't meet this criterion prior to aggregate analysis.
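The sufficiency rule can be enforced by a script rather than by eye. The sketch below assumes timestamps are expressed as minutes since the start of the analysis period, treats coverage as the fraction of expected readings present, and counts the period edges when measuring gaps. The 48-hour gap limit is taken from the answer above; the function name `check_sufficiency` is hypothetical.

```python
def check_sufficiency(timestamps_min, period_days=14, interval_min=5,
                      min_coverage=0.70, max_gap_hours=48):
    """Flag whether one analysis period has enough CGM data."""
    period_min = period_days * 24 * 60
    expected = period_min // interval_min
    ts = sorted(set(timestamps_min))
    coverage = len(ts) / expected
    # Include the period start and end so missing data at the edges is counted.
    edges = [0] + ts + [period_min]
    max_gap_min = max(b - a for a, b in zip(edges, edges[1:]))
    return {
        "coverage": coverage,
        "max_gap_hours": max_gap_min / 60,
        "sufficient": coverage >= min_coverage and max_gap_min <= max_gap_hours * 60,
    }


# Hypothetical 14-day period with days 3 to 6 missing (a 72-hour outage).
full = list(range(0, 14 * 1440, 5))
with_outage = [t for t in full if not (3 * 1440 <= t < 6 * 1440)]
report = check_sufficiency(with_outage)
```

In this example coverage is about 79%, which passes the 70% threshold, yet the dataset is still rejected because of the single 72-hour gap. Checking both criteria separately avoids that blind spot.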

Table 1: Core CGM Metrics, Definitions, and Targets for Clinical Research

| Metric | Acronym | Standard Calculation | Consensus Clinical Target | Common Misapplication |

|---|---|---|---|---|

| Time in Range | TIR | % of readings 70-180 mg/dL | >70% for diabetes | Using non-consensus range boundaries (e.g., 70-140 mg/dL). |

| Time Above Range | TAR | % of readings >180 mg/dL (Level 1) & >250 mg/dL (Level 2) | <25% (Level 1), <5% (Level 2) | Reporting as a single value without stratification by level. |

| Time Below Range | TBR | % of readings <70 mg/dL (Level 1) & <54 mg/dL (Level 2) | <4% (Level 1), <1% (Level 2) | Interpreting as event count; ignoring duration & severity levels. |

| Glucose Variability | GV | %CV = (SD / Mean Glucose) x 100 | ≤36% | Reporting SD alone without regard to mean glucose (CV is preferred). |

| Mean Glucose | - | Average of all sensor readings | ~154 mg/dL (for A1C ~7%) | Relying solely on mean, ignoring distribution (TIR/TAR/TBR). |

Table 2: Minimum Data Requirements for Robust CGM Analysis

| Analysis Period | Minimum Required CGM Data | Maximum Allowable Gap | Key Supported Metrics |

|---|---|---|---|

| Short-term (24h) | 20 hours (83%) | 2 hours | Daily patterns, MAGE (if data density high) |

| Standard (7-day) | 5 days (70%) | 48 hours | TIR, TAR, TBR, CV, mean glucose |

| Regulatory (14-day) | 10 days (70%) | 48 hours | All primary & secondary endpoints for trials |

Experimental Protocol: Validating CGM Metrics in a Drug Intervention Study

Title: Protocol for a 12-Week Randomized Controlled Trial Assessing a Novel Therapeutic's Impact on CGM-Derived Endpoints. Objective: To evaluate the effect of Drug X vs. Placebo on glucose control as measured by CGM metrics, while controlling for data interpretation errors. Methodology:

- CGM Deployment: Use a blinded or unblinded CGM (per study design) with a 5-minute sampling interval. Apply the sensor for the 14 days before baseline (acclimatization) and for the 14 days ending at weeks 4, 8, and 12.

- Data Sufficiency Check: Before analysis, for each 14-day assessment period, confirm ≥10 days (≥70%) of contiguous data. Discard periods with less.

- Metric Calculation: Using raw sensor data (not smoothed), calculate for each participant period:

  - TIR (70-180 mg/dL), TAR Level 1/2, TBR Level 1/2.

  - Mean Glucose, SD, and %CV.

  - Number of hypoglycemic events (<54 mg/dL for ≥15 min).

- Statistical Analysis: Compare change from baseline (Week 12 vs. Pre-treatment) between groups using ANCOVA, adjusting for baseline value. Analyze %CV and event counts using non-parametric tests if data is non-normal.

- Pattern Avoidance: Pre-specify all ranges and analyses. Do not perform post-hoc range adjustments to "find" significance.

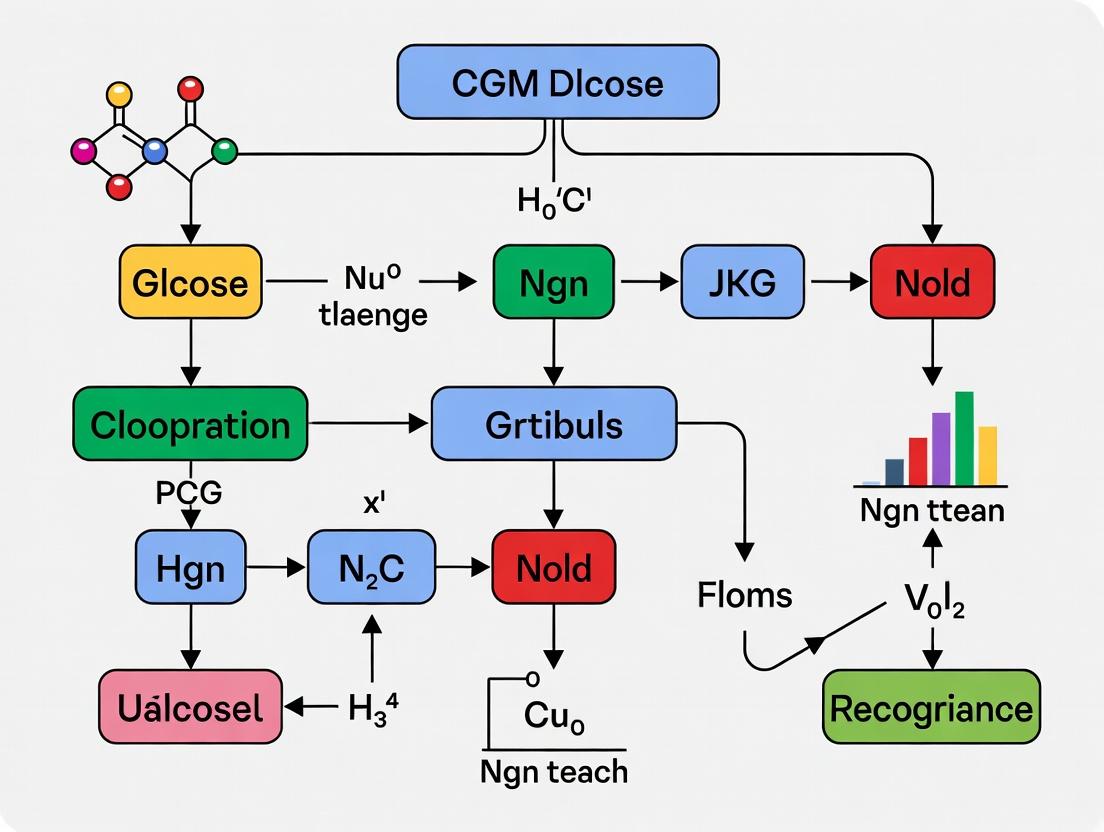

Diagram: CGM Data Analysis Workflow

Diagram: Relationship Between Core CGM Metrics

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Rigorous CGM-Based Research

| Item | Function in Research | Key Consideration |

|---|---|---|

| Regulatory-Grade CGM System (e.g., Dexcom G7, Medtronic Guardian 4, Abbott Libre 3) | Provides high-frequency (every 1-5 mins), calibrated glucose data suitable for endpoint analysis in clinical trials. | Ensure system is approved for non-adjunctive use and has the required MARD (<10%) for interventional studies. |

| Consensus Range Template | A pre-programmed digital template (e.g., in Python/R) defining 70, 180, 250, and 54 mg/dL thresholds to ensure consistent metric calculation. | Prevents accidental use of institutional ranges; ensures regulatory alignment. |

| Data Sufficiency Algorithm | Script to automatically flag CGM datasets with less than 70% data coverage or gaps >48 hours for a given analysis period. | Enforces the "14-day/70% rule" objectively, removing analyst bias. |

| Hypoglycemia Event Detector | Software tool to scan glucose traces for consecutive readings <54 mg/dL lasting ≥15 minutes, outputting count and duration. | Corrects the major misapplication of relying solely on TBR percentage. |

| Glucose Variability Package (e.g., cgmquantify in R) | Calculates %CV, SD, MAGE, and other GV indices from raw CGM data, handling missing data appropriately. | Standardizes GV calculation; ensures use of CV (%) for clinical interpretation. |

Troubleshooting Guides & FAQs

Q1: Our team observes a consistent 8-12 minute lag between a blood glucose inflection point (from reference analyzer) and the corresponding CGM trend. Is this physiological or a technical sensor fault? A: This is most likely the combined effect of both physiological and inherent technical lag. Physiological lag (~3-5 minutes) is the time for interstitial fluid (ISF) glucose to equilibrate with blood glucose after a change. Technical/system lag (~5-7 minutes) is due to sensor electronics, data smoothing, and calibration algorithms. To diagnose, follow the Lag Source Isolation Protocol below.

Q2: During a drug trial, we see unexpected postprandial glucose spikes in CGM data that are not present in venous sampling. Could sensor lag be causing a pattern recognition error? A: Yes. This is a classic conundrum where technical lag, compounded by data smoothing algorithms, can blunt the apparent amplitude and shift the timing of sharp glucose excursions. This leads to incorrect attribution of pharmacological effects. Implement the Temporal Alignment & Validation Workflow to correct this.

Q3: How do we accurately time-stamp an intervention (e.g., drug administration) relative to a CGM-detected event when lag is variable? A: Do not rely solely on the CGM timestamp for event initiation. Use a synchronized multi-modal monitoring protocol. Always reference the event to a paired capillary blood glucose (CBG) measurement from a validated meter at the moment of intervention. The CGM data stream should then be retrospectively aligned using the calculated lag.

Experimental Protocols

Protocol 1: Lag Source Isolation Protocol

Objective: To delineate physiological vs. technical components of total observed sensor lag. Methodology:

- Setup: Simultaneously collect (a) venous blood (reference hexokinase method, every 5 min), (b) capillary blood (validated glucose meter, every 5 min), and (c) CGM data (streamed at native frequency).

- Stimulus: Administer a standardized 75g oral glucose tolerance test (OGTT) or a controlled IV glucose bolus.

- Alignment: Time-synchronize all devices to a central clock (atomic clock reference).

- Analysis:

  - Calculate Physiological Lag: Cross-correlation analysis between venous blood glucose and CBG. Peak correlation offset indicates blood-to-capillary lag.

  - Calculate Total Observed Lag: Cross-correlation between venous blood glucose and CGM glucose trend.

  - Estimate Technical Lag: Total Observed Lag minus Physiological Lag.
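The analysis step can be sketched as a search for the shift that maximizes the Pearson correlation between two equally sampled series. This is a minimal illustration on synthetic 1-minute data, assuming all three series share a common clock and grid; the helper names and the Gaussian-shaped excursion are hypothetical. With real 5-minute sampling, the lag can only be resolved to the nearest 5 minutes unless the series are interpolated first.

```python
import math


def pearson(x, y):
    """Pearson correlation of two equal-length, non-constant sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def estimate_lag(reference, delayed, max_lag):
    """Return (lag in samples, correlation) where `delayed` best matches `reference`."""
    best_lag, best_r = 0, -math.inf
    for lag in range(max_lag + 1):
        ref = reference[:len(reference) - lag]
        dly = delayed[lag:]
        r = pearson(ref, dly)
        if r > best_r:
            best_lag, best_r = lag, r
    return best_lag, best_r


def delay(series, k):
    """Synthetic helper: shift a series later by k samples, padding with the first value."""
    return list(series) if k == 0 else [series[0]] * k + list(series[:-k])


# Synthetic 1-minute data: a glucose excursion peaking at t = 30 min.
venous = [100 + 80 * math.exp(-((t - 30) / 10) ** 2) for t in range(120)]
capillary = delay(venous, 4)   # assumed 4-min lag, for illustration
cgm = delay(venous, 10)        # assumed 10-min total lag, for illustration

physiological_lag, _ = estimate_lag(venous, capillary, max_lag=20)
total_lag, _ = estimate_lag(venous, cgm, max_lag=20)
technical_lag = total_lag - physiological_lag
```

On this synthetic input the subtraction recovers a 6-minute technical component. Real traces carry noise, so the correlation peak will be broader and a confidence interval (for example from repeated challenges) is advisable.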

Protocol 2: Temporal Alignment & Validation Workflow

Objective: To correct CGM time-series data for lag-induced pattern recognition errors in pharmacological studies. Methodology:

- Establish a "Lag Characteristic" for the specific CGM model and population under study using Protocol 1 under controlled conditions.

- During the main experiment, implement fixed-interval paired reference measurements (e.g., YSI or CBG) at protocol-defined critical points (baseline, drug admin, expected Tmax).

- Apply a time-shift correction to the CGM data stream based on the pre-determined Lag Characteristic. Note: Avoid applying algorithmic smoothing post-hoc.

- Validate the corrected CGM trace against the sparse reference points using Mean Absolute Relative Difference (MARD) calculations for the dynamic phases (rates of change) separately from steady-state phases.
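The shift-then-validate steps can be illustrated as follows. This sketch assumes a single fixed lag, nearest-timestamp matching between CGM samples and reference points, and phase labels ("dynamic" or "steady") assigned by the analyst from the protocol schedule; none of these choices is prescribed by the workflow above, and all names and data are hypothetical.

```python
def mard(cgm_values, ref_values):
    """Mean Absolute Relative Difference in percent."""
    pairs = list(zip(cgm_values, ref_values))
    return 100.0 * sum(abs(c - r) / r for c, r in pairs) / len(pairs)


def nearest_value(times, values, t):
    """Value of the sample whose timestamp is closest to t."""
    i = min(range(len(times)), key=lambda k: abs(times[k] - t))
    return values[i]


def phase_mard(cgm_times, cgm_values, references, lag_min=0.0):
    """MARD per phase after shifting CGM timestamps earlier by lag_min."""
    shifted = [t - lag_min for t in cgm_times]
    grouped = {}
    for t_ref, g_ref, phase in references:
        cgm_list, ref_list = grouped.setdefault(phase, ([], []))
        cgm_list.append(nearest_value(shifted, cgm_values, t_ref))
        ref_list.append(g_ref)
    return {phase: mard(c, r) for phase, (c, r) in grouped.items()}


def true_glucose(t):
    """Synthetic profile: 100 mg/dL, rising 3 mg/dL/min from t=30 to t=60, then flat."""
    return 100.0 + 3.0 * min(max(t - 30, 0), 30)


cgm_times = list(range(0, 125, 5))
cgm_values = [true_glucose(t - 10) for t in cgm_times]  # CGM lags by 10 min
references = [(10, 100.0, "steady"), (40, 130.0, "dynamic"),
              (50, 160.0, "dynamic"), (100, 190.0, "steady")]

uncorrected = phase_mard(cgm_times, cgm_values, references)
corrected = phase_mard(cgm_times, cgm_values, references, lag_min=10)
```

In this noise-free example the steady-state MARD is zero before and after correction, while the dynamic-phase MARD falls from roughly 21% to zero. That is why reporting the two phases separately is informative: a pooled MARD would dilute the lag effect.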

Data Presentation

Table 1: Quantified Lag Components in Common CGM Systems (Typical Ranges)

| CGM System / Study | Physiological Lag (Blood to ISF) | Estimated Technical/System Lag | Total Observed Lag | Reference Method |

|---|---|---|---|---|

| Dexcom G7 | 3.5 - 5.0 min | 2.0 - 4.0 min | 5.5 - 9.0 min | YSI 2300 STAT Plus |

| Abbott Libre 3 | 3.5 - 5.0 min | 4.0 - 6.0 min | 7.5 - 11.0 min | Capillary (BGM) Paired |

| Medtronic Guardian 4 | 3.5 - 5.0 min | 5.0 - 8.0 min | 8.5 - 13.0 min | Venous (Hexokinase) |

| Senseonics Eversense | 5.0 - 8.0 min (Longer due to encapsulation) | 3.0 - 5.0 min | 8.0 - 13.0 min | YSI 2300 STAT Plus |

Table 2: Impact of Uncorrected Lag on Event Timing Error in a Standard Meal Challenge

| Glucose Rate of Change (mg/dL/min) | Event Timing Error (assuming an average 10-min lag) | Consequence for Drug Effect Analysis |

|---|---|---|

| Slow (< 2 mg/dL/min) | ~10-15 min | Low; may obscure early drug onset. |

| Rapid (2-4 mg/dL/min) | ~15-25 min | Significant; can misalign drug Tmax with glucose Tmax. |

| Very Rapid (> 4 mg/dL/min) | > 25 min | Severe; may completely misattribute postprandial peak to drug effect. |

Diagrams

CGM Lag Composition & Isolation

Temporal Alignment Workflow for Researchers

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Lag/Validation Studies |

|---|---|

| YSI 2300 STAT Plus Analyzer | Gold-standard reference for glucose concentration in plasma/whole blood; critical for establishing ground truth in lag quantification protocols. |

| Capillary Blood Sampling Kit (Heparinized) | Allows for frequent, minimally-invasive blood sampling synchronized with CGM readings for point-of-use reference values. |

| Atomic Clock or NTP-Sync Timer | Ensures millisecond-level synchronization across all data collection devices (reference analyzer, CGM receiver, pumps) to eliminate timestamp errors. |

| Standardized Glucose Challenge | Pre-mixed, clinically-validated dextrose solution for OGTT; ensures reproducible glycemic stimulus for comparative lag testing across sensor lots. |

| Continuous Glucose Monitor (CGM) | The device under test (DUT). Multiple units from different production lots should be tested to assess inter-sensor variability in technical lag. |

| Data Fusion & Analysis Software (e.g., Python/R scripts) | Custom scripts for cross-correlation analysis, time-series alignment, and MARD calculation specific to dynamic phases rather than absolute values. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: We observe high-amplitude, transient spikes in our CGM trace. Are these rapid glycemic excursions or sensor artifacts? A: These are likely "pH-sensitive false spikes," an artifact common in enzymatic (glucose oxidase) sensors during local tissue pH fluctuations. Protocol for Verification: Suspend the primary CGM and co-implant a reference sensor (e.g., a potentiometric pH sensor) at the adjacent site. Simultaneously administer a low-dose systemic buffer (e.g., sodium bicarbonate, 1 mEq/kg IP in rodent models). A spike in the primary CGM without a corresponding change in the reference sensor, or one that disappears with pH buffering, confirms the artifact. Correlate with venous blood draws (every 2 min for 10 min) to definitively rule out true glycemia.

Q2: Our dataset shows a gradual downward drift in sensor signal over 72 hours, confounding long-term trend analysis. Is this physiological adaptation or sensor decay? A: This is characteristic of "Biofouling-Induced Signal Attenuation." Protocol for Identification: Perform an in-vitro sensor recalibration post-explantation. If the sensor recovers >90% of its original sensitivity in buffer solution, the drift was due to biological encapsulation (biofouling). A permanent loss indicates sensor membrane degradation. Implement a matched control experiment where sensors are implanted in a non-metabolic subcutaneous phantom (e.g., agarose gel). Drift in the phantom indicates inherent sensor instability.

Q3: We see periodic, low-frequency oscillations (~90-min cycles) in our rodent CGM data. Could this be an ultradian rhythm or a physical artifact? A: This may be "Pressure-Induced Ischemic Noise." Protocol for Categorization: Correlate the oscillation timeline with animal activity logs (e.g., from cage-top scanners). If oscillations coincide with periods of rest/sleep where the animal lies on the sensor site, it suggests localized tissue compression and ischemia. Confirm by surgically placing a telemetered tissue oxygen probe (pO₂) adjacent to the CGM. An inverse correlation between pO₂ and the CGM signal confirms the artifact.

Q4: How can we distinguish between sensor noise and true biological variability in a pharmacodynamic study? A: Employ a "Dual-Sensor Discordance Analysis" protocol. Implant two identical CGM systems in contralateral sites. Calculate a rolling concordance correlation coefficient (CCC) over a 15-minute window. True biological glucose changes will be highly concordant (CCC > 0.9). Sensor-specific noise or local tissue effects will produce significant discordance (CCC < 0.7). Data with low CCC should be flagged for artifact review.

Table 1: Prevalence and Impact of Common CGM Artifacts in Preclinical Research

| Artifact Category | Typical Amplitude (mg/dL) | Duration | Likely Cause | Verification Method (Key Metric) |

|---|---|---|---|---|

| pH-Sensitive False Spike | 40 - 120+ | 2 - 12 min | Local tissue acidosis/alkalosis | Co-monitoring with pH probe (ΔpH > 0.3) |

| Biofouling Drift | -2 to -5 per day | Days 3-7+ | Protein adsorption & fibrosis | Post-explant recalibration (Sensitivity loss <10%) |

| Pressure Ischemia | 20 - 60 | 10 - 120 min | Sensor compression reducing interstitial fluid flow | Correlation with activity/pO₂ (Inverse r < -0.8) |

| Wireless Interference | Single-point dropouts | Instantaneous | RF noise from nearby equipment | Signal strength log audit (RSSI drop >20 dB) |

| Enzyme Activity Decay | Continuous negative slope | Entire sensor life | Deactivation of glucose oxidase | In-vitro shelf-life testing (Linear decay rate) |

Table 2: Concordance Analysis for Artifact Identification

| Data Concordance Level (CCC) | Interpretation | Recommended Action for Drug Trial Data |

|---|---|---|

| > 0.90 | High Confidence True Biological Signal | Include in all analyses. |

| 0.70 - 0.90 | Moderate Concordance; Possible Mild Artifact | Include but flag for sensitivity analysis. |

| 0.50 - 0.69 | Low Concordance; Probable Local Artifact | Exclude from primary endpoint; use as exploratory. |

| < 0.50 | Severe Discordance; Confirmed Artifact | Exclude dataset. Investigate implant procedure. |

Experimental Protocols

Protocol: Dual-Sensor Discordance Analysis for Artifact Rejection

- Sensor Implantation: Aseptically implant two identical, factory-calibrated CGM sensors in standardized contralateral subcutaneous sites (e.g., dorsal interscapular region).

- Data Synchronization: Record data from both sensors to a common data logger with synchronized UTC timestamps. Sampling rate must be identical (e.g., 1 Hz).

- Data Preprocessing: Apply an identical low-pass filter (e.g., 5-minute moving median) to both raw signal streams to attenuate high-frequency electronic noise.

- Calculation: For each time point t, calculate the CCC over a centered 15-minute window of data from Sensor A and Sensor B.

- Flagging: Flag all data points where the windowed CCC < 0.70. A contiguous block of flagged data > 30 minutes is categorized as a "major artifact event."

- Validation: For any major artifact event, validate against the nearest reference blood glucose measurement (if available). If the blood glucose value lies between the two discordant sensor readings, the event is confirmed as a localized artifact.
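The calculation and flagging steps can be sketched with Lin's concordance correlation coefficient computed over a centered window. The example assumes both sensors are already resampled to a common 1-minute grid (so a 15-sample window spans 15 minutes) and uses population variances; these are illustrative choices, as are the function names and the synthetic data. Windows in which both signals are perfectly flat have an undefined CCC and are returned as NaN rather than flagged.

```python
import math


def ccc(x, y):
    """Lin's concordance correlation coefficient (population form)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((a - mx) ** 2 for a in x) / n
    syy = sum((b - my) ** 2 for b in y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y)) / n
    denom = sxx + syy + (mx - my) ** 2
    return 2 * sxy / denom if denom > 0 else math.nan


def rolling_ccc(sensor_a, sensor_b, window=15):
    """CCC over a centered window; NaN where the window does not fit."""
    half = window // 2
    out = []
    for i in range(len(sensor_a)):
        lo, hi = i - half, i + half + 1
        if lo < 0 or hi > len(sensor_a):
            out.append(math.nan)
        else:
            out.append(ccc(sensor_a[lo:hi], sensor_b[lo:hi]))
    return out


def flag_discordant(ccc_values, threshold=0.70):
    """True where the windowed CCC is defined and below the threshold."""
    return [(not math.isnan(v)) and v < threshold for v in ccc_values]


# Synthetic data: sensors agree for 15 min, then sensor B moves the opposite way.
sensor_a = [100 + 2 * i for i in range(30)]
sensor_b = [g if i < 15 else 288 - g for i, g in enumerate(sensor_a)]
flags = flag_discordant(rolling_ccc(sensor_a, sensor_b))
```

One practical caveat of this sketch: during steady-state glucose the within-window variance is small, so CCC becomes dominated by noise and can drop even when both sensors are behaving well. Reviewing flagged blocks against the absolute difference between sensors helps avoid false artifact calls.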

Protocol: In-Vitro Post-Explant Sensor Recalibration

- Explantation: Carefully remove the sensor from the tissue, gently rinsing with 0.9% saline to remove non-adherent material.

- Initial Reading: Immerse the sensor in a stirred, temperature-controlled (37°C) phosphate buffer (pH 7.4) with 0 mM glucose. Record the stable baseline signal for 10 minutes (S_buffer).

- Glucose Challenge: Transfer the sensor to an identical buffer solution containing a known high glucose concentration (e.g., 400 mg/dL). Record the stable signal for 10 minutes (S_high).

- Calculation: Calculate post-explant sensitivity: Sens_post = (S_high - S_buffer) / [Glucose]. Compare to the sensor's pre-implant factory sensitivity (Sens_pre).

- Interpretation: Recovery Ratio = Sens_post / Sens_pre. A ratio > 0.9 suggests biofouling was the primary cause of drift. A ratio < 0.7 indicates irreversible sensor degradation.
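A worked example of the sensitivity and recovery-ratio arithmetic is sketched below. The signal values (in nA) and the factory sensitivity are hypothetical numbers chosen for illustration. The protocol does not state how to read ratios between 0.7 and 0.9, so the sketch labels that band "indeterminate", which is an assumption.

```python
def recovery_ratio(s_buffer, s_high, glucose_mg_dl, sens_pre):
    """Recovery ratio = post-explant sensitivity / pre-implant sensitivity."""
    sens_post = (s_high - s_buffer) / glucose_mg_dl
    return sens_post / sens_pre


def interpret(ratio):
    """Map a recovery ratio onto the protocol's interpretation bands."""
    if ratio > 0.9:
        return "biofouling"
    if ratio < 0.7:
        return "irreversible degradation"
    return "indeterminate"  # band not defined in the protocol (assumption)


# Hypothetical readings: 2.0 nA in glucose-free buffer, 38.0 nA at 400 mg/dL,
# and a factory sensitivity of 0.095 nA per mg/dL.
ratio = recovery_ratio(s_buffer=2.0, s_high=38.0, glucose_mg_dl=400.0, sens_pre=0.095)
verdict = interpret(ratio)
```

Here the post-explant sensitivity is 0.09 nA per mg/dL, giving a ratio of about 0.95, which falls in the biofouling band.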

Diagrams

Diagram 1: Artifact Identification Decision Pathway

Diagram 2: Biofouling-Induced Signal Attenuation Mechanism

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for CGM Artifact Investigation

| Item | Function in Protocol | Example Product/Catalog # |

|---|---|---|

| Reference Blood Glucose Analyzer | Provides ground-truth data for artifact confirmation. Essential for protocol validation. | YSI 2900 Series Stat Plus, or Nova Biomedical StatStrip. |

| Potentiometric pH Microsensor | Co-monitors local tissue pH to identify pH-sensitive false spikes. | Unisense pH Microsensor (PH-10), or PreSens Microsensor. |

| Telemetric Tissue Oxygen (pO₂) Probe | Measures local ischemia at the sensor site to confirm pressure artifacts. | Oxford Optronix O2C, or PreSens pO₂ Microsensor. |

| Subcutaneous Implant Phantom | Agarose-based non-biological matrix for control sensor implants to isolate sensor drift. | 2-4% Agarose in PBS, sterile. |

| Dual-Channel Sensor Data Logger | Enables synchronized, high-frequency data capture from two sensors for concordance analysis. | Custom LabVIEW setup, or ADInstruments PowerLab. |

| Calibration Buffer Set | For pre- and post-explant sensor calibration (0, 100, 400 mg/dL glucose at pH 7.4). | Sigma-Aldrich D-Glucose & PBS Buffer Kit. |

| Systemic Buffer Agent | To test pH artifact hypothesis in vivo (sodium bicarbonate solution). | Sterile 8.4% Sodium Bicarbonate for injection. |

| Automated Activity Monitoring System | Logs animal movement to correlate with low-frequency CGM oscillations. | Noldus EthoVision, or Med Associates Activity Monitoring. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: Our analysis of a clinical trial’s CGM data shows nearly identical mean glucose and GMI between treatment and control groups, yet clinicians report a clear difference in patient symptomology. What pattern might we be missing?

A: Mean glucose and Glucose Management Indicator (GMI) are aggregate metrics. Identical averages can arise from vastly different underlying patterns. You are likely missing critical glycemic variability and time-in-range extremes.

Investigation Protocol: Calculate and compare:

- Coefficient of Variation (CV): A marker of glycemic variability. Target is ≤36%.

- Time-in-Range (TIR) 70-180 mg/dL: The percentage of readings.

- Time-below-Range (TBR) <70 mg/dL & <54 mg/dL: Critical for hypoglycemia risk.

- Time-above-Range (TAR) >180 mg/dL & >250 mg/dL: Hyperglycemia exposure.

Data Analysis Workflow:

Title: Pathways from CGM Data to Insight

Supporting Data Table:

| Metric | Treatment Group | Control Group | Clinical Interpretation |

|---|---|---|---|

| Mean Glucose (mg/dL) | 154 | 155 | No difference |

| GMI (%) | 7.0 | 7.0 | No difference |

| CV (%) | 28 | 41 | Treatment shows significantly more stable glucose. |

| TIR 70-180 mg/dL (%) | 85 | 60 | Treatment spends 25 percentage points more time in target. |

| TBR <70 mg/dL (%) | 1 | 5 | Control spends 5x more time in hypoglycemia. |
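The table illustrates why GMI cannot separate the two groups: GMI is a linear function of mean glucose alone. A minimal sketch using the published GMI regression, GMI (%) = 3.31 + 0.02392 x mean glucose (mg/dL), is shown below; the function name `gmi_percent` is illustrative.

```python
def gmi_percent(mean_glucose_mg_dl):
    """Glucose Management Indicator (%) from mean sensor glucose in mg/dL."""
    return 3.31 + 0.02392 * mean_glucose_mg_dl


# Mean glucose values from the table above.
treatment_gmi = round(gmi_percent(154), 1)
control_gmi = round(gmi_percent(155), 1)
```

Both groups round to the same GMI, so any difference in CV, TIR, or TBR is invisible to this metric by construction.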

Q2: What is the standard experimental protocol for quantifying glycemic variability and patterns in a preclinical rodent study using CGM?

A: The following protocol ensures reproducible assessment beyond average glucose.

- Device Implantation: Under anesthesia, implant a validated subcutaneous CGM sensor (e.g., from DSI, Novo Nordisk) in the interscapular region. Allow a 24-hour recovery and sensor equilibration period.

- Baseline Period: Record at least 72 hours of stable baseline data under standard housing/diet.

- Intervention: Administer the test compound or vehicle control. Continue CGM monitoring for the duration of the drug's pharmacokinetic profile (e.g., 5-7 days).

- Data Processing: Export 1-5 minute interval data. Remove artifacts (e.g., signal dropouts during handling).

- Analysis: Apply the same variability metrics (CV, TIR/TBR/TAR) as in human studies, with species-adjusted thresholds (e.g., rodent TIR may be 70-150 mg/dL).

Q3: When analyzing a time-series of glucose readings, what computational methods can reveal postprandial response patterns that a simple daily average hides?

A: You must move to time-series decomposition and meal-aligned analysis.

- Experimental Protocol for Meal Response:

  - Data Alignment: For each subject and meal (marked by events), segment glucose data from 60 minutes pre-meal to 180 minutes post-meal.

  - Baseline Correction: Subtract the pre-meal baseline glucose (mean of -30 to 0 min).

  - Calculate Key Metrics per Meal:

    - Peak Glucose (PG)

    - Time to Peak (TTP)

    - Incremental Area Under the Curve (iAUC) for 0-120min.

  - Statistical Comparison: Use mixed-effects models to compare PG, TTP, and iAUC between study arms, accounting for within-subject repeated meals.
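The per-meal metrics can be sketched for a single meal-aligned segment as follows. Times are minutes relative to the meal marker, and the iAUC uses the trapezoidal rule on increments clipped at zero, which is a simplification of the usual positive-incremental-area method (segments that cross the baseline are approximated). The function name and the example segment are hypothetical.

```python
def meal_metrics(times_min, glucose_mg_dl, auc_end_min=120):
    """Baseline, peak, time to peak, and iAUC for one meal-aligned segment."""
    samples = list(zip(times_min, glucose_mg_dl))
    pre = [g for t, g in samples if -30 <= t <= 0]
    baseline = sum(pre) / len(pre)
    # Baseline-corrected post-meal increments.
    post = [(t, g - baseline) for t, g in samples if t >= 0]
    peak_time, peak_increment = max(post, key=lambda p: p[1])
    # Trapezoidal iAUC over 0..auc_end_min, ignoring area below baseline.
    window = [(t, max(d, 0.0)) for t, d in post if t <= auc_end_min]
    iauc = sum(0.5 * (d0 + d1) * (t1 - t0)
               for (t0, d0), (t1, d1) in zip(window, window[1:]))
    return {
        "baseline": baseline,
        "peak_glucose": baseline + peak_increment,
        "peak_increment": peak_increment,
        "time_to_peak_min": peak_time,
        "iauc_mg_dl_min": iauc,
    }


# Hypothetical 15-minute samples from -30 to 180 min around one meal.
times = list(range(-30, 195, 15))
glucose = [100, 100, 100, 130, 160, 150, 130, 115, 105, 100, 100, 100, 100, 100, 100]
result = meal_metrics(times, glucose)
```

The resulting per-meal rows (one per subject and meal) are the natural input for the mixed-effects comparison, with subject as the grouping factor.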

Title: Postprandial Pattern Analysis Workflow

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in CGM Pattern Research |

|---|---|

| Validated CGM System | Provides continuous interstitial glucose measurements at 1-5 min intervals. Essential for high-resolution time-series analysis. |

| Data Logger/Cloud Platform | Securely stores high-volume timestamped glucose, event, and calibration data for computational access. |

| AGP Report Software | Generates the standardized Ambulatory Glucose Profile visualization, a foundational tool for pattern identification. |

| Time-Series Analysis Suite | Software (e.g., Python Pandas/R, specialized tools like Tidepool) for calculating CV, TIR, iAUC, and other advanced metrics. |

| Event Marking App | Allows researchers/participants to timestamp meals, medication, exercise, and symptoms to correlate with glucose patterns. |

| Mixed-Effects Modeling Package | Statistical software (e.g., R nlme, lme4) to analyze repeated-measures glucose data while accounting for individual variability. |

Troubleshooting Guides & FAQs

Q1: In our CGM validation study, we observe a consistent 5-10 minute lag between a plasma glucose spike and the corresponding ISF glucose response from the sensor. Is this a sensor defect or an expected physiological phenomenon? A: This is an expected physiological phenomenon, not a defect. The lag is primarily due to the time required for glucose to equilibrate across the capillary endothelium from blood plasma to the interstitial fluid (ISF). This physiological lag is typically 4-12 minutes and can be influenced by factors like local blood flow, insulin levels, and tissue type. In study design, this must be accounted for when comparing CGM (ISF) values to venous or capillary blood references, especially during rapid glucose excursions.

Q2: During hyperinsulinemic-euglycemic clamps, our CGM readings consistently read lower than plasma glucose. What is the root cause? A: This is a classic compartment gap manifestation. High insulin levels increase glucose uptake from the ISF compartment into tissue cells (e.g., muscle, adipose), creating a transient gradient where ISF glucose is lower than plasma glucose. Your CGM is accurately reflecting the ISF environment, not failing. This is a critical consideration for drug studies impacting insulin sensitivity.

Q3: We see significant sensor-to-sensor variance in a multi-device wear study on a single subject. How do we determine if it's noise or a real physiological signal? A: First, ensure all sensors are from the same lot and inserted in anatomically similar sites (e.g., all on the abdomen). Variance >20% between sensors at steady-state may indicate an insertion issue. True physiological variance can arise from local microvascular differences at each insertion site. Implement a reference method (e.g., frequent capillary sampling) during a steady-state period (overnight fast) to calibrate and identify potential outliers.

Q4: How should we handle CGM data during periods of rapid hemodynamic change (e.g., vasoconstriction during stress, vasodilation post-exercise)? A: These periods are high-risk for interpretation error. Changes in local blood flow directly alter the delivery of glucose to the ISF and the lag dynamics. Mark these events in your study timeline. Data from such periods should be analyzed separately or with models that incorporate perfusion covariates. Consider complementary measures like heart rate or skin temperature near the sensor site.

Table 1: Key Temporal & Magnitude Dynamics of the Plasma-ISF Glucose Gap

| Condition / Parameter | Typical Plasma-ISF Lag (minutes) | Typical Magnitude Difference | Primary Driver |

|---|---|---|---|

| Rising Glucose | 5 - 12 | ISF lags Plasma | Equilibration time across endothelium |

| Falling Glucose | 8 - 15 | ISF lags Plasma | Equilibration time + tissue uptake |

| Steady-State | N/A | < 5% | Full equilibration |

| Hyperinsulinemia | Variable (Lag may appear longer) | ISF can be 10-30% lower | Increased tissue glucose uptake from ISF |

| Hypoperfusion | Increased (15-30+) | ISF can be significantly lower | Reduced glucose delivery to ISF |

| Local Heating | Decreased | ISF may read closer to Plasma | Increased local blood flow & permeability |

Table 2: Impact of Common Drugs/Interventions on the Compartment Gap

| Intervention Class | Expected Effect on Plasma-ISF Lag | Expected Effect on Gradient | Mechanism |

|---|---|---|---|

| Vasoconstrictors (e.g., norepinephrine) | Increases | Increases (ISF lower) | Reduced capillary perfusion & delivery |

| Vasodilators (e.g., nitric oxide donors) | Decreases | Decreases | Increased perfusion & delivery |

| Insulin / Insulin Secretagogues | Increases apparent lag | Increases Gradient (ISF lower) | Enhanced cellular uptake from ISF pool |

| SGLT2 Inhibitors | Complex, may alter dynamics | May increase variability | Glucosuria alters systemic/compartmental kinetics |

| Anti-inflammatory (e.g., glucocorticoids) | May increase | May increase | Altered vascular permeability & insulin resistance |

Experimental Protocols

Protocol 1: In Vivo Calibration of Plasma-ISF Kinetics Using Frequent Sampling

Objective: To characterize the time lag and gradient under controlled metabolic conditions. Materials: See Scientist's Toolkit below. Procedure:

- Insert CGMs according to manufacturer protocol in standardized sites.

- Establish baseline with subject fasting for ≥6 hours.

- Initiate a controlled metabolic stimulus (e.g., intravenous glucose tolerance test (IVGTT), mixed meal).

- Collect paired samples at fixed intervals (e.g., every 5-10 min for 2 hours):

  - Plasma Reference: Venous blood drawn, immediately centrifuged, plasma analyzed on a laboratory-grade hexokinase/glucose oxidase analyzer.

  - Capillary Reference: Fingerstick measured with an FDA-cleared, ISO-standard glucometer.

  - CGM ISF Value: Record timestamped value.

- Synchronize all device clocks to a central standard.

- Analysis: Use cross-correlation analysis to determine the time lag that maximizes the correlation between plasma and CGM signals. Calculate gradients at steady-state and during excursions.

Protocol 2: Assessing Sensor Variance & Site-Specific Physiology

Objective: To discriminate sensor error from physiological inter-site variance. Materials: Multiple CGMs from a single lot, ultrasound machine for superficial blood flow measurement. Procedure:

- Insert 3-4 CGMs on the same anatomical region (e.g., left abdomen), spaced >5cm apart.

- During a euglycemic, fasted steady-state period (e.g., 2-4 AM), record CGM values and reference capillary values every 15 minutes for 2 hours.

- Measure local superficial blood flow (laser Doppler) or temperature at each sensor site.

- Analysis: Calculate coefficient of variation (CV) between sensors. Correlate individual sensor deviation from the reference mean with local blood flow/temperature measurements. High CV with perfusion correlation suggests physiological variance.
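A minimal sketch of the between-sensor CV and per-sensor deviation described above, assuming one simultaneous reading per sensor at a given time point. The function names and the readings are hypothetical.

```python
from statistics import mean, stdev

def inter_sensor_cv(readings):
    """Percent coefficient of variation across simultaneous sensor readings."""
    return 100.0 * stdev(readings) / mean(readings)

def deviations_from_reference(readings, reference):
    """Signed deviation (mg/dL) of each sensor from the reference value."""
    return [r - reference for r in readings]

# Hypothetical time point: four co-located sensors and one capillary reference.
sensor_values = [100, 104, 96, 100]
cv = inter_sensor_cv(sensor_values)
dev = deviations_from_reference(sensor_values, reference=101)
print(f"CV = {cv:.2f}%, deviations = {dev}")
```

The per-sensor deviations are the quantity that would then be correlated with the perfusion or temperature measurement at each site.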

Visualizations

Title: Physiological Lag Model from Plasma to CGM Signal

Title: Troubleshooting CGM & Plasma Glucose Mismatches

The Scientist's Toolkit: Research Reagent Solutions

| Item / Reagent | Primary Function in Study | Key Consideration |

|---|---|---|

| FDA-Cleared Reference Glucometer & Strips | Provides capillary blood glucose reference for point-of-care calibration and lag assessment. | Must have demonstrated accuracy (e.g., ISO 15197:2013 standards). Use a single lot per study. |

| Laboratory Glucose Analyzer (Hexokinase Method) | Gold-standard measurement of plasma/serum glucose from venous samples. | Essential for establishing the definitive reference trace. Higher precision than POC devices. |

| Standardized Glucose Challenge (e.g., 75g OGTT solution, IVGTT dextrose) | Creates a controlled glycemic excursion to probe lag dynamics. | Use certified, clinically validated products for reproducibility. |

| Laser Doppler Perfusion Monitor | Non-invasive measurement of microvascular blood flow at the CGM insertion site. | Critical for quantifying local perfusion confounders. |

| Continuous Insulin & Glucose Infusion System | For conducting hyperinsulinemic-euglycemic or -hypoglycemic clamps. | Allows precise manipulation of plasma insulin/glucose to study compartment effects. |

| Sensor Insertion Template | Ensures consistent, documented placement of multiple CGMs. | Reduces site-location as a variable. |

| Time-Synchronization Logger | Synchronizes clocks on all sampling devices (CGM, pumps, analyzers). | Critical for accurate lag calculation at minute-scale resolution. |

Building Robust Frameworks: Methodologies for Accurate CGM Pattern Recognition in Clinical Trials

Technical Support Center

Troubleshooting Guides & FAQs

Q1: Our supervised learning model for hypoglycemia prediction from CGM data shows high accuracy on the training set but poor performance on the validation set. What are the primary troubleshooting steps? A: This indicates overfitting, common in CGM research due to high intra-patient variability. Steps:

- Data Verification: Ensure your training and validation sets are temporally independent (e.g., train on first 70% of days, validate on latter 30%). Do not shuffle randomly by timestamp.

- Feature Review: Reduce feature dimensionality. CGM-derived features (mean glucose, variability indices, trend slopes) can be highly collinear. Use Principal Component Analysis (PCA) or LASSO regularization to select informative features.

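- Model Complexity: Simplify the model. Start with a linear model (Logistic Regression) before using Random Forests or Neural Networks. When using k-fold cross-validation, build folds that respect time order or are grouped by patient, so that no fold is trained on data that follows or overlaps its validation data.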

- Class Imbalance: Hypoglycemic events are rare. Use SMOTE (Synthetic Minority Over-sampling Technique) or adjust class weights in your loss function.
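Two of the steps above, the chronological split and class weighting, can be sketched without any ML library. This is an illustrative sketch: the sample layout `(day_index, features, label)` and the function names are assumptions, and the weighting formula is the common "balanced" heuristic, n_samples / (n_classes * class_count).

```python
from collections import Counter

def chronological_split(samples, train_fraction=0.7):
    """Split (day_index, features, label) samples by day; never shuffle by timestamp."""
    days = sorted({s[0] for s in samples})
    n_train = min(len(days) - 1, max(1, round(len(days) * train_fraction)))
    cutoff = days[n_train - 1]  # last day that belongs to the training set
    train = [s for s in samples if s[0] <= cutoff]
    valid = [s for s in samples if s[0] > cutoff]
    return train, valid

def balanced_class_weights(labels):
    """Weight each class by n_samples / (n_classes * class_count)."""
    counts = Counter(labels)
    n, k = len(labels), len(counts)
    return {cls: n / (k * c) for cls, c in counts.items()}

# Hypothetical data: 10 days, one sample per day, a single hypoglycemic day.
samples = [(day, None, 1 if day == 3 else 0) for day in range(10)]
train, valid = chronological_split(samples)
weights = balanced_class_weights([label for _, _, label in samples])
```

With one positive among ten samples, the rare class receives a weight of 5.0, which is the kind of value that would be passed to a classifier's class-weight setting.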

Q2: When applying k-means clustering (unsupervised learning) to CGM data, the resulting patient clusters are not clinically interpretable. How can we improve this? A: This often stems from inappropriate data scaling or choice of k.

- Preprocessing: CGM data features (e.g., mean glucose, standard deviation) exist on different scales. Use StandardScaler (z-score normalization) before clustering.

- Determine Optimal k: Do not assume k=2 (hyper/hypo). Use the Elbow Method (plot within-cluster-sum-of-squares vs. k) or Silhouette Score to guide choice. Validate clusters against clinical labels (e.g., therapy type) post-hoc.

- Feature Engineering: Cluster on derived patterns (postprandial excursion shape, nocturnal slope) rather than simple statistical aggregates. Use Dynamic Time Warping distance for time-series shape clustering.

- Algorithm Choice: Consider density-based methods (DBSCAN) or Gaussian Mixture Models if you expect clusters of uneven size or density.

Q3: The algorithm fails to detect "dawn phenomenon" patterns consistently. What specific pattern definition and tuning are required? A: Dawn phenomenon detection is a classic pattern recognition task that is prone to visual inspection bias.

- Operationalize the Pattern: Define algorithmically: "A minimum rise of 20 mg/dL in glucose levels between a nocturnal low point (between 00:00-04:00) and the pre-breakfast value (within 1 hour before a meal taken after 06:00), with no carbohydrate intake in the preceding 2 hours."

- Input Data: Use annotated CGM data (meal, insulin, sleep logs) to rule out confounding factors. Missing annotation is a major failure point.

- Model Adjustment: For supervised models, ensure the training set has expert-labeled dawn phenomenon epochs. For unsupervised detection, use a rule-based filter after clustering to label dawn phenomenon clusters.
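The operational definition above translates fairly directly into a rule. The sketch below is one possible reading of it, with assumptions stated in the comments: the "pre-breakfast value" is taken as the latest reading in the hour before the meal, and the function name and data layout are hypothetical.

```python
from datetime import datetime, timedelta

def detect_dawn_phenomenon(readings, breakfast_time, carb_times, min_rise=20.0):
    """readings: list of (datetime, mg/dL) for one night. Returns True if the rule fires."""
    if breakfast_time.hour < 6:
        return False  # the rule only considers breakfasts after 06:00
    midnight = datetime.combine(breakfast_time.date(), datetime.min.time())
    nocturnal = [g for t, g in readings
                 if midnight <= t <= midnight + timedelta(hours=4)]
    pre_breakfast = [(t, g) for t, g in readings
                     if breakfast_time - timedelta(hours=1) <= t <= breakfast_time]
    if not nocturnal or not pre_breakfast:
        return False  # missing data: the rule cannot be evaluated
    # Carbohydrate intake in the 2 hours before breakfast confounds the rise.
    if any(breakfast_time - timedelta(hours=2) <= c < breakfast_time for c in carb_times):
        return False
    nadir = min(nocturnal)
    pre_value = max(pre_breakfast)[1]  # assumption: use the latest pre-meal reading
    return pre_value - nadir >= min_rise

# Hypothetical night: nadir 95 mg/dL at 02:00, 125 mg/dL at 06:30, breakfast at 07:00.
d = datetime(2024, 5, 1)
readings = [(d + timedelta(hours=h), g)
            for h, g in [(0, 110), (2, 95), (3, 98), (4, 100), (5, 108), (6.5, 125)]]
breakfast = d + timedelta(hours=7)
clean_night = detect_dawn_phenomenon(readings, breakfast, carb_times=[])
snack_night = detect_dawn_phenomenon(readings, breakfast,
                                     carb_times=[d + timedelta(hours=5, minutes=30)])
```

The same night is classified differently once a 05:30 snack is logged, which is why missing annotations are such a common failure point.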

Q4: How do we validate that an algorithmic pattern is clinically significant and not a statistical artifact? A: This bridges data science and clinical research methodology.

- Hold-out Validation: Correlate algorithm-detected patterns with unseen clinical outcomes in a separate cohort (e.g., pattern frequency vs. HbA1c or hypo event rate).

- Statistical Testing: Use survival analysis (Cox model) to test if pattern detection predicts time-to-event (e.g., severe hypoglycemia).

- Benchmarking: Compare algorithm performance against two independent human experts (not just one). Calculate inter-rater reliability between the algorithm and the expert consensus with Cohen's kappa, or use Fleiss' kappa when treating the algorithm and each expert as separate raters.

Experimental Protocols & Data

Protocol 1: Supervised Learning for Nocturnal Hypoglycemia Prediction

Objective: Predict nocturnal hypoglycemia (<70 mg/dL) event 60 minutes in advance using CGM data.

Methodology:

- Data Segmentation: From CGM time-series (5-min intervals), extract 120-minute windows ending 60 minutes before each observed event.

- Feature Extraction: For each window, calculate: rate of change (ROC, mg/dL/min; negative during descent), Mean, Std Dev, Min, and M-value (glycemic variability measure).

- Labeling: Windows preceding a hypoglycemic event are positive class (1). Randomly sample non-event windows for negative class (0), ensuring temporal separation.

- Model Training: Train a Gradient Boosting Classifier (e.g., XGBoost) with 5-fold time-series cross-validation. Use early stopping to prevent overfitting.

- Evaluation: Report Precision, Recall, F1-Score, and Area Under the Precision-Recall Curve (AUPRC) on a chronologically held-out test set.
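The segmentation and feature steps can be sketched as below, assuming a gap-free series at 5-minute sampling so that windows can be addressed by index. The helper names are hypothetical, and the feature set is a reduced subset (the M-value is omitted).

```python
from statistics import mean, pstdev

SAMPLES_PER_WINDOW = 24  # 120 minutes at 5-minute sampling
HORIZON_SAMPLES = 12     # 60-minute prediction horizon

def first_hypo_index(glucose, threshold=70):
    """Index of the first reading below threshold, or None."""
    for i, g in enumerate(glucose):
        if g < threshold:
            return i
    return None

def window_before(glucose, event_index):
    """120-minute window ending 60 minutes before the event, or None if too early."""
    end = event_index - HORIZON_SAMPLES
    start = end - SAMPLES_PER_WINDOW
    if start < 0:
        return None
    return glucose[start:end]

def window_features(window, step_min=5):
    """Reduced feature set; rate of change in mg/dL/min over the whole window."""
    roc = (window[-1] - window[0]) / (step_min * (len(window) - 1))
    return {"mean": mean(window), "std": pstdev(window), "min": min(window), "roc": roc}

# Hypothetical trace falling by 1 mg/dL per sample from 150 mg/dL.
glucose = [150 - i for i in range(90)]
event = first_hypo_index(glucose)
window = window_before(glucose, event)
features = window_features(window)
```

Because the window ends a full hour before the event, none of the features can leak information from the event itself.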

Protocol 2: Unsupervised Discovery of Glycemic Phenotypes

Objective: Identify distinct patient phenotypes from 14-day CGM profiles without pre-defined labels.

Methodology:

- Data Aggregation: For each patient, aggregate two weeks of CGM data into 24-hour profile metrics: Mean Daily Glucose, Glucose Management Indicator (GMI), % time in ranges (TIR: 70-180, >180, <70), CV%, and mean amplitude of glycemic excursions (MAGE).

- Clustering: Apply DBSCAN clustering algorithm. Preprocess with StandardScaler. Set eps (neighborhood distance) using k-distance plot. Set min_samples=5.

- Validation: Use silhouette analysis. Characterize resulting clusters by comparing with clinical metadata (diabetes type, insulin regimen, BMI) via Chi-square or ANOVA tests.

Table 1: Performance Comparison of Hypoglycemia Prediction Algorithms

| Algorithm | Precision | Recall (Sensitivity) | F1-Score | AUPRC | False Alarms per Day |

|---|---|---|---|---|---|

| Rule-based (ADA) | 0.31 | 0.85 | 0.45 | 0.39 | 2.8 |

| Logistic Regression | 0.52 | 0.78 | 0.62 | 0.58 | 1.5 |

| Random Forest | 0.67 | 0.82 | 0.74 | 0.71 | 1.1 |

| LSTM Network | 0.73 | 0.88 | 0.80 | 0.79 | 0.9 |

Data synthesized from recent studies (2023-2024). LSTM shows superior precision, which is critical for reducing alarm fatigue.

Table 2: Clinico-Metabolic Correlates of Algorithmically-Derived Clusters

| Cluster (Phenotype) | % of Cohort (n=450) | Avg. GMI (%) | Avg. CV% | Associated Clinical Factor (p-value) |

|---|---|---|---|---|

| Stable, In-Range | 28% | 6.8 | 28 | Basal-only insulin (p<0.01) |

| Hyperglycemic, Variable | 35% | 8.9 | 42 | High meal carbohydrate ratio (p<0.001) |

| Hypoglycemia-Prone | 17% | 7.1 | 39 | History of severe hypo (p<0.001) |

| Dawn Phenomenon Dominant | 20% | 7.5 | 31 | Longer diabetes duration (p<0.05) |

Visualizations

Title: Algorithmic Pattern Detection Workflow for CGM Data

Title: Decision Pathway for CGM Pattern Alert Generation

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in CGM Pattern Research |

|---|---|

| Open-Source CGM Datasets (e.g., OhioT1DM, Diatelic) | Provide benchmark, annotated CGM data for algorithm development and validation. |

| scikit-learn / XGBoost Python Libraries | Core packages for implementing supervised and unsupervised learning pipelines. |

| TSFRESH (Python Package) | Automates extraction of hundreds of time-series features from CGM data windows. |

| Dynamic Time Warping (DTW) Algorithm | Calculates optimal alignment between two temporal sequences, crucial for comparing glycemic pattern shapes. |

| Glycemic Variability Metrics (MAGE, CONGA, MODD) | Quantified indices used as features or ground truth for pattern severity. |

| Data Annotation Platform (e.g., Label Studio) | Enables efficient manual labeling of CGM patterns by clinicians for supervised learning. |

| Statistical Analysis Software (R, Python statsmodels) | For post-hoc validation of algorithmic patterns against clinical outcomes. |

Standardizing Data Aggregation and Analysis Windows for Cross-Study Comparability

Technical Support Center: Troubleshooting Guides & FAQs

Thesis Context: This support content is framed within ongoing research on minimizing Continuous Glucose Monitoring (CGM) data interpretation errors and avoiding spurious pattern recognition in clinical and pharmacological studies.

Frequently Asked Questions (FAQs)

Q1: During cross-trial analysis, we observe high variance in glycemic variability metrics even when study cohorts are similar. What could be the root cause? A: This is frequently caused by inconsistent aggregation windows. For example, calculating Mean Amplitude of Glycemic Excursions (MAGE) over a 24-hour period starting at 00:00 vs. starting at 05:00 (wake time) yields significantly different results. Standardize by aligning analysis windows to physiological anchors (e.g., wake/sleep cycles from patient diaries) rather than arbitrary clock times.

Q2: Our aggregated CGM data shows unexpected "flat lines" or loss of nocturnal pattern detail. How should we troubleshoot? A: This is typically a data processing artifact. Follow this checklist:

- Verify Sensor Overlap: Ensure the aggregation algorithm correctly stitches data from consecutive sensors. A common error is discarding overlapping data or using simple averaging, which dampens signal. Use validated, weighted stitching methods.

- Check Imputation Rules: Determine if your software is imputing missing data (e.g., >20-minute gaps) with linear interpolation or carrying the last value forward, which creates artificial plateaus. Re-process with imputation disabled to identify gaps.

- Confirm Epoch Duration: Aggregating 5-minute data into 1-hour epochs suppresses short physiological fluctuations, and the mean and median of an epoch can differ. Use the mean for smoother trends or the median to reduce outlier impact, and apply the same choice across all datasets.

Q3: When pooling data from different CGM device brands for a meta-analysis, what are the key standardization steps? A: Device-specific error profiles and sampling intervals are critical. Implement this protocol:

- Step 1: Harmonize Sampling Rate: Resample all data streams to a common interval (e.g., 5 minutes) using a consistent interpolation method (e.g., cubic spline).

- Step 2: Calibration Alignment: Document if devices are factory-calibrated or user-calibrated. Stratify analysis by calibration type, as user error introduces bias.

- Step 3: Define Unified Metrics: Use consensus metrics from recent literature (e.g., International Consensus on CGM Metrics). See Table 1.
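Step 1 can be illustrated with a small resampler. Note the substitution: this sketch uses linear interpolation rather than the cubic spline mentioned above, to stay within the standard library. The function name and the 20-minute gap limit are assumptions.

```python
def resample_linear(times_min, values, step=5, max_gap=20):
    """Interpolate (time, value) samples onto a regular grid starting at the first sample.

    Grid points that fall strictly inside a source gap longer than max_gap
    minutes are returned as None instead of being interpolated.
    """
    out = []
    j = 0
    t = times_min[0]
    while t <= times_min[-1]:
        # Move to the source interval [t0, t1] that contains t.
        while j + 2 < len(times_min) and times_min[j + 1] <= t:
            j += 1
        t0, t1 = times_min[j], times_min[j + 1]
        if t == t0:
            v = values[j]
        elif t == t1:
            v = values[j + 1]
        elif t1 - t0 > max_gap:
            v = None  # do not invent data inside a long gap
        else:
            v = values[j] + (t - t0) / (t1 - t0) * (values[j + 1] - values[j])
        out.append((t, v))
        t += step
    return out

# Hypothetical 15-minute device resampled to 5 minutes, then a trace with a 45-minute gap.
dense = resample_linear([0, 15, 30], [100, 130, 100])
gappy = resample_linear([0, 15, 60], [100, 130, 100])
```

Whatever interpolation method is chosen, applying the same one with the same gap limit to every device is what makes the pooled streams comparable.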

Q4: How do we define the "analysis window" for a drug efficacy study to avoid diurnal confounding? A: Do not default to calendar days. The protocol must be:

- Anchor to Intervention: If studying a prandial drug, define windows as 3-hour postprandial periods for each meal.

- Account for Shift Work: Collect sleep/wake logs. For a circadian rhythm study, align data to each participant's wake-up time (Time = 0).

- Pre-specify: Define the primary analysis window (e.g., 0 to 4 hours after wake) in the statistical analysis plan before data unblinding.

Key Experimental Protocols for Comparability

Protocol 1: Standardized Data Aggregation for Pharmacodynamic Response Objective: To quantify the effect of a novel glucagon receptor antagonist on postprandial glucose. Method:

- Data Acquisition: Collect CGM data at 5-minute intervals from a study cohort using a single, factory-calibrated CGM device model.

- Event Logging: Participants log meal start times (t=0) via a verified electronic app.

- Window Definition: Define the analysis window as t=-30 min (pre-meal baseline) to t=+240 min (post-meal). Extract all CGM data within this window for each meal.

- Aggregation: Align all meal events by t=0. Calculate the mean glucose at each 5-minute time point across all events to generate a composite curve.

- Metric Calculation: From the composite curve, calculate the area under the curve (AUC) for t=0 to t=+180 min, peak glucose, and time to peak. Compare between treatment and placebo arms using these standardized windows.
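The aggregation and metric steps above can be sketched as follows, assuming each meal window has already been extracted on the same 5-minute grid starting at t=0. Function names and the shortened example windows are hypothetical.

```python
def composite_curve(meal_windows):
    """Point-wise mean across meal-aligned windows of equal length."""
    n = len(meal_windows)
    return [sum(column) / n for column in zip(*meal_windows)]

def trapezoid_auc(values, step_min=5):
    """Trapezoidal area under the curve, in mg/dL*min."""
    return sum((a + b) / 2 * step_min for a, b in zip(values, values[1:]))

def peak_and_time(values, start_min=0, step_min=5):
    """Peak glucose and the time (minutes relative to the meal) at which it occurs."""
    peak = max(values)
    return peak, start_min + values.index(peak) * step_min

# Hypothetical, shortened windows (t = 0, 5, 10, 15 min) for two meals.
meals = [[100, 140, 160, 120], [100, 120, 140, 120]]
curve = composite_curve(meals)
auc = trapezoid_auc(curve)
peak, t_peak = peak_and_time(curve)
```

Averaging before computing the peak smooths out meal-to-meal timing differences, so a per-meal peak analysis may be worth reporting alongside the composite.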

Protocol 2: Cross-Study Benchmarking of Glycemic Variability Objective: To compare the glucose variability profile of a new basal insulin against a benchmark across three historical trials. Method:

- Data Harmonization: Obtain de-identified CGM data from all three trials. Resample to a standard 5-minute grid.

- Eligible Day Selection: Apply a uniform data quality filter: include only days with ≥80% CGM data coverage.

- Window Standardization: For variability metrics, use a fixed-length rolling window of 24 hours. Advance the window in 1-hour increments to generate multiple estimates per patient, reducing day-to-day noise.

- Metric Computation: Within each 24-hour window, compute Coefficient of Variation (%CV), Standard Deviation (SD), and MAGE using a standardized algorithm (e.g., the maged algorithm in Python).

- Pooled Analysis: Perform a meta-analysis of the window-level metrics, using study as a random effect to account for between-trial differences.

Standardized Metrics for Cross-Study Comparison (Table 1)

Table 1: Consensus CGM Metrics and Recommended Aggregation Windows. Adapted from International Consensus Reports (2022-2024).

| Metric Category | Specific Metric | Recommended Calculation Window | Physiological Target | Notes for Comparability |

|---|---|---|---|---|

| Hyperglycemia | % Time >180 mg/dL | 24-hour, aligned to wake time | Reduce exposure | Stratify into daytime vs. nighttime. |

| Hypoglycemia | % Time <70 mg/dL | Nocturnal (sleep period) | Eliminate risk | Must use patient-logged sleep times. |

| Variability | Glucose CV (%) | Full data record (≥14 days) | Stabilize swings | Most reliable for >14 days of data. |

| Variability | MAGE | Per 24-hour waking day | Assess excursions | Sensitive to window start; anchor to wake. |

| Control | GMI (%) | Full data record (≥14 days) | Estimate HbA1c | Requires consistent ≥70% data coverage. |

| Postprandial | 1-hr PPG Increment | 1-hour post-meal start | Assess meal impact | Requires precise meal timing log. |

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Standardized CGM Data Analysis Workflows.

| Item / Solution | Function in Research | Key Consideration for Comparability |

|---|---|---|

| ISO-CGM Data Parser | Converts raw device output (e.g., .csv, .xml) from different manufacturers into a unified data structure (e.g., ISO 20697 format). | Ensures timestamps, glucose values, and quality flags are extracted identically across studies. |

| Validated Imputation Algorithm Library | Addresses missing data gaps (<20 min) using statistically sound methods (e.g., expectation-maximization). | Prevents ad-hoc imputation (e.g., linear fill) which can create artificial trends and bias metrics. |

| Consensus Metric Calculator (e.g., cgmquantify R package) | Computes standardized glycemic metrics (TIR, CV, MAGE, etc.) from a time-series dataframe using peer-reviewed algorithms. | Guarantees metric definitions and calculations are identical, removing a major source of inter-study variation. |

| Physiological Event Aligner Software | Aligns CGM data streams from multiple participants to common anchors (meal, sleep, drug administration). | Critical for creating super-subject aggregate curves for pharmacodynamic analysis. |

| De-identified, Annotated Public Dataset (e.g., OhioT1DM) | Serves as a benchmark dataset for validating new aggregation algorithms and analysis pipelines. | Provides a common ground-truth reference for methodological development. |

Visualizations: Workflows & Logical Relationships

Diagram 1: CGM Data Standardization Workflow

Diagram 2: Analysis Window Alignment Impact

Technical Support Center

Troubleshooting Guides & FAQs

Q1: Why is my integrated dataset showing mismatched timestamps between CGM and insulin pump data, and how do I correct it?

A: This is a common synchronization error. The issue typically stems from devices using different time servers or manual time settings. To correct:

- Protocol for Time Alignment: Export raw data logs from each device. Use a reference time source (e.g., network time protocol server timestamp) to calculate the offset for each device. Apply the correction during preprocessing, for example with a short Python script.

- Validation Step: Post-correction, plot a 24-hour overlay of CGM trace and insulin bolus events. Visually confirm that boluses align with meal-related glucose rises.
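The time-alignment step can be sketched as below. It assumes that, for each device, one simultaneous pair of "device clock reading" and "reference clock reading" was recorded, and that the offset is constant (no drift). The function names and timestamps are hypothetical.

```python
from datetime import datetime

def clock_offset(device_time, reference_time):
    """Offset to add to device timestamps so that they match the reference clock."""
    return reference_time - device_time

def apply_offset(records, offset):
    """records: list of (datetime, payload). Returns corrected copies."""
    return [(t + offset, payload) for t, payload in records]

# Hypothetical check: the pump clock read 12:03:10 when the reference read 12:00:00.
offset = clock_offset(datetime(2024, 1, 1, 12, 3, 10), datetime(2024, 1, 1, 12, 0, 0))
boluses = [(datetime(2024, 1, 1, 8, 3, 10), 4.5)]  # (pump timestamp, units)
corrected = apply_offset(boluses, offset)
```

If devices drift over a multi-week study, recording a reference pair at both the start and the end of wear allows the offset to be interpolated rather than assumed constant.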

Q2: How do I resolve artifact noise in CGM data when correlating with high-intensity activity tracker data?

A: Sensor compression and sweat-induced signal distortion during high activity can cause artifacts.

- Signal Processing Protocol: Implement a dual-stage filter.

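- First, apply a standard low-pass filter (Butterworth, 3rd order) to remove high-frequency noise. The cutoff must lie below the Nyquist frequency of the signal: 5-minute CGM data has a Nyquist frequency of about 0.0017 Hz, so a 0.05 Hz cutoff applies only to higher-rate raw sensor signals.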

- Second, use activity tracker data (e.g., heart rate > 85% max, galvanic skin response) to flag periods of high-intensity activity. During these flagged periods, employ a median filter (5-7 point window) instead of the low-pass filter to handle transient artifacts robustly.

- Data Exclusion Criteria: For research focused on metabolic response, consider excluding CGM data points from periods where the activity tracker indicates significant motion impact (>95th percentile accelerometer vector magnitude) that coincides with a signal loss or sudden, physiologically implausible glucose change (>3 mg/dL/min).

Q3: When integrating meal logs, what is the best method to handle qualitative or imprecise carbohydrate entries?

A: Imprecise carbohydrate counting is a major source of error in pattern recognition.

- Quantization Protocol: Develop a standardized lookup table for common foods. Assign a probabilistic range of carbohydrates (e.g., "medium banana": 20-30g). In your analysis, run Monte Carlo simulations (n=1000) using values randomly sampled from this range to understand the variance it introduces to your postprandial glucose prediction model.

- Triangulation Methodology: Use the other datastreams to back-calculate likely carbohydrate impact. The formula for approximate carbohydrate estimation is:

Estimated Carbs (g) = (Total Insulin Bolus - Correction Bolus) * Insulin-to-Carb Ratio

where boluses are in units of insulin and the Insulin-to-Carb Ratio is expressed in grams of carbohydrate per unit. Compare this with the logged value. Flag entries with a discrepancy > 25% for manual review or probabilistic weighting in the dataset.
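A short sketch of the back-calculation and the 25% flag. The discrepancy is measured relative to the logged value here, which is an assumption since the text does not specify the denominator; function names and numbers are hypothetical.

```python
def estimated_carbs(total_bolus_u, correction_bolus_u, icr_g_per_u):
    """Back-calculated carbohydrate (g) from the meal portion of a bolus."""
    return (total_bolus_u - correction_bolus_u) * icr_g_per_u

def flag_discrepancy(logged_g, estimated_g, tolerance=0.25):
    """True when logged and estimated carbs differ by more than `tolerance` of the logged value."""
    if logged_g == 0:
        return estimated_g > 0
    return abs(logged_g - estimated_g) / logged_g > tolerance

# Hypothetical entry: 6 U total bolus, 1 U correction, ratio of 10 g per unit.
estimate = estimated_carbs(6.0, 1.0, 10.0)
needs_review = flag_discrepancy(logged_g=30, estimated_g=estimate)
acceptable = flag_discrepancy(logged_g=45, estimated_g=estimate)
```

A flagged entry is not necessarily a logging error; an under- or over-bolused meal produces the same discrepancy, which is why manual review is suggested.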

Q4: My model fails to distinguish between postprandial hyperglycemia and dawn phenomenon. How can integration improve this?

A: This is a core pattern recognition error. Relying solely on CGM trend shape is insufficient.

- Integrated Discriminant Protocol: Build a decision tree using the additional integrated data points:

| Phenomenon | CGM Trend | Insulin Basal Rate | Meal Log | Sleep/Activity (Tracker) |

|---|---|---|---|---|

| Dawn Phenomenon | Steady rise ~3-6 AM | Constant or decreasing | No meal 4hr prior | Sleep period, low activity |

| Postprandial Hyperglycemia | Sharp rise within 1-2hr | Possible temporary increase | Meal within 2hr | Variable activity |

- Experimental Workflow: Follow the detailed methodology below.

Title: Integrated Multi-Datastream Analysis for Etiology Classification of Hyperglycemic Events.

Objective: To accurately classify the etiology of a hyperglycemic event (Dawn Phenomenon vs. Postprandial vs. Stress/Other) using synchronized CGM, insulin, meal, and activity data.

Methodology:

- Data Synchronization: Align all device timestamps to a common reference using the protocol from Q1.

- Event Detection: Identify the start of a hyperglycemic event (glucose > 180 mg/dL for >30 minutes).

- Feature Extraction: For the 4-hour window prior to the event, extract:

- From CGM: Rate of glucose change, area under curve.

- From Insulin Pump: Total basal insulin, any bolus events.

- From Meal Logs: Presence/absence of meal, estimated carbs.

- From Activity Tracker: Sleep state (binary), average heart rate, step count.

- Classification: Input features into a supervised machine learning classifier (e.g., Random Forest) trained on manually labeled events.

- Validation: Compare classification accuracy against an expert panel's adjudication based on raw data traces.

Data Presentation

Table 1: Impact of Data Integration on Hyperglycemia Classification Accuracy (Simulated Study Data)

| Data Streams Used | Classification Accuracy (%) | Precision (Postprandial) | Recall (Dawn Phenomenon) | Key Limitation |

|---|---|---|---|---|

| CGM Only | 65.2 | 0.71 | 0.58 | Cannot differentiate etiology based on shape alone. |

| CGM + Meal Logs | 78.5 | 0.89 | 0.62 | Fails if meals are unlogged or dawn phenomenon occurs post-meal. |

| CGM + Insulin Data | 81.7 | 0.76 | 0.85 | May misclassify insufficient meal bolus as dawn phenomenon. |

| Full Integration (All 4 Streams) | 94.3 | 0.96 | 0.92 | Dependent on quality and synchronization of all data inputs. |

Visualization: Integrated Data Analysis Workflow

Diagram Title: Multi-Datastream Analysis Workflow for Hyperglycemia

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Integrated CGM Research

| Item / Solution | Function in Research | Example/Note |

|---|---|---|

| Time Synchronization Software | Aligns timestamps from disparate devices to a universal clock. Critical for causal inference. | Custom Python/R scripts using NTP timestamps; Device APIs. |

| Data Fusion Platform | A unified software environment to import, visualize, and analyze multi-modal data streams. | Tidepool Platform (research mode), custom-built dashboards using Plotly Dash or R Shiny. |

| Standardized Meal Log Protocol | Reduces qualitative error in carbohydrate estimation, a major confounding variable. | Digital log with photo + AI-assisted carb estimate (e.g., Calorie Mama API) or structured drop-downs. |

| Signal Processing Library | Cleans raw CGM and activity data of physiological and non-physiological artifacts. | SciPy (Python) for filtering; custom algorithms for compression/sweat artifact removal. |

| Supervised ML Classifier Package | Trains models to recognize complex patterns across integrated data. | scikit-learn (Python) for Random Forest, SVM; caret (R) for generalized linear models. |

| Reference Blood Glucose Analyzer | Provides ground-truth venous or capillary measurements to validate CGM trends and calibrate models. | YSI 2300 STAT Plus (for venous), HemoCue (for capillary). |

Technical Support Center: Troubleshooting CGM Data Analysis for Drug Trial Endpoints

FAQs & Troubleshooting Guides

Q1: Our trial uses multiple CGM brands. How do we harmonize 'glycemic excursion' data when thresholds (e.g., for hyperglycemia) differ between devices? A: This is a common source of methodological error. Consensus recommends a post-processing harmonization step.

- Issue: Raw thresholds (e.g., >180 mg/dL) may be device-specific.

- Solution: Apply a standardized, consensus-defined threshold (see Table 1) to the calibrated interstitial glucose time-series data from all devices, after data collection. Do not rely on the device's native alarm or event logs.

- Protocol: 1) Extract full raw/sensor glucose data. 2) Calibrate per manufacturer. 3) Apply consensus thresholds via uniform algorithm (e.g., MATLAB/Python script) to all datasets.

Q2: When calculating Area Over the Curve (AOC) for hypoglycemic excursions, how do we handle variable baselines (e.g., 70 mg/dL vs. 54 mg/dL)? A: Variable baselines invalidate direct comparisons. The consensus mandates a fixed reference baseline.

- Issue: Using the threshold line itself (70 mg/dL) as a dynamic baseline under-represents severe hypoglycemia.

- Solution: Use a fixed, physiologically normal baseline (e.g., 100 mg/dL) for all AOC calculations, regardless of excursion type. This allows consistent quantification of glycemic burden.

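- Protocol: For any excursion, calculate AOC as the area between the fixed baseline and the glucose trace, ∫ |Baseline (100 mg/dL) - Glucose(t)| dt over the excursion duration, where Glucose(t) is <70 mg/dL for hypoglycemia or >180 mg/dL for hyperglycemia. The absolute value keeps the result positive for hyperglycemic excursions, where glucose lies above the baseline.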
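The fixed-baseline area can be approximated with the trapezoidal rule. This sketch takes the absolute deviation from the baseline so that one function covers both hypoglycemic and hyperglycemic excursions; that sign handling, the function name, and the sample values are assumptions.

```python
def excursion_area(glucose, baseline=100.0, step_min=5):
    """Trapezoidal area between the glucose trace and a fixed baseline (mg/dL*min)."""
    deviation = [abs(g - baseline) for g in glucose]
    return sum((a + b) / 2 * step_min for a, b in zip(deviation, deviation[1:]))

# Hypothetical hypoglycemic excursion sampled every 5 minutes.
hypo = [68, 60, 52, 60, 68]
area = excursion_area(hypo)
```

Only the readings that belong to the excursion should be passed in; identifying those readings is a separate step.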

Q3: We observe high inter-subject variability in Mean Amplitude of Glycemic Excursions (MAGE). Is this biological noise or a calculation error? A: Likely a pattern recognition error in identifying "valid" excursions for MAGE.

- Issue: MAGE depends on correctly identifying excursions greater than 1 standard deviation (SD) of the mean glucose. Manual or poorly parameterized peak detection introduces inconsistency.

- Solution: Implement a robust, automated peak detection algorithm with validated parameters (see Table 2) and document them in your statistical analysis plan (SAP).

- Protocol: 1) Smooth data with a moving average (e.g., 5-min window). 2) Use a consensus-defined minimum directional change (e.g., 1 SD) and a minimum time between peaks (e.g., 15 min) to flag excursions. 3) Calculate MAGE only from nadir-to-peak or peak-to-nadir segments that meet criteria.

Q4: How should we define the start and end of an excursion to ensure consistent duration measurements? A: Avoiding "soft" thresholds is key. Use a sustained crossing rule.

- Issue: Defining start/end at the exact threshold crossing is sensitive to measurement noise.

- Solution: Adopt a sustained deviation definition. An excursion starts when glucose crosses and remains beyond the threshold for ≥X minutes (consensus suggests ≥15 min). It ends when glucose returns and stays within threshold for ≥X minutes.

- Workflow Diagram:

Title: Algorithm for Defining Excursion Start & End
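The sustained-crossing rule can be sketched as a scan over runs of consecutive readings. Assumptions: data are gap-free at 5-minute sampling, and three consecutive readings are treated as 15 minutes. The function name and the example trace are hypothetical.

```python
def find_excursions(glucose, threshold=180, min_samples=3, above=True):
    """Return (start_index, end_index) pairs; end_index is the last out-of-range sample."""
    beyond = [(g > threshold) if above else (g < threshold) for g in glucose]
    excursions, start, last_beyond = [], None, None
    i, n = 0, len(beyond)
    while i < n:
        run_end = i
        while run_end < n and beyond[run_end] == beyond[i]:
            run_end += 1
        run_len = run_end - i
        if beyond[i]:
            if start is None and run_len >= min_samples:
                start = i                   # sustained crossing opens an excursion
                last_beyond = run_end - 1
            elif start is not None:
                last_beyond = run_end - 1   # a brief return did not end the excursion
        elif start is not None and run_len >= min_samples:
            excursions.append((start, last_beyond))  # sustained return closes it
            start = None
        i = run_end
    if start is not None:
        excursions.append((start, last_beyond))
    return excursions

# Hypothetical trace: one isolated spike, then a sustained excursion with a brief dip.
trace = [170, 185, 175, 190, 195, 200, 178, 186, 188, 170, 165, 160, 150]
events = find_excursions(trace)
```

The isolated reading of 185 is ignored, and the single in-range reading of 178 does not split the excursion, so one event spanning indices 3 to 8 is reported.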

Q5: What are the consensus quantitative definitions for key excursion types? A: See Table 1. These definitions aim to standardize endpoints across trials.

Table 1: Consensus Definitions for Glycemic Excursions

| Excursion Type | Glucose Threshold | Minimum Duration | Key Metric | Calculation Note |

|---|---|---|---|---|

| Hyperglycemic | >180 mg/dL (10.0 mmol/L) | ≥15 minutes | AOC, Peak Value, Duration | AOC baseline = 100 mg/dL. |

| Level 2 Hypoglycemic | <54 mg/dL (3.0 mmol/L) | ≥15 minutes | AOC, Nadir Value, Duration | AOC baseline = 100 mg/dL. |

| Level 1 Hypoglycemic | <70 mg/dL (3.9 mmol/L) and ≥54 mg/dL | ≥15 minutes | Event Rate, Duration | Often excluded from severe burden calculations. |

| Postprandial | Increase from pre-meal baseline >40 mg/dL | Peak within 120 min | Peak, Time-to-Peak, AOC | Must link to meal timestamp. |

Table 2: Recommended Parameters for MAGE Calculation

| Parameter | Consensus Setting | Function |

|---|---|---|

| Data Smoothing | 5-minute moving average | Reduces high-frequency noise. |

| Minimum Directional Change | 1.0 SD of mean glucose | Filters minor fluctuations. |

| Minimum Time Between Peaks | 15 minutes | Prevents double-counting. |

| Excursion Direction | Nadir-to-Peak or Peak-to-Nadir | Must be consistent; report choice. |

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in CGM Excursion Analysis |

|---|---|

| Standardized CGM Data Export Toolkit | Software/API (e.g., Tidepool, Glooko) to extract uniform raw/sensor glucose time-series from multiple CGM brands. |

| Calibration Algorithm Library | Validated scripts to apply manufacturer-specific calibration equations to raw data, ensuring comparability. |

| Consensus Threshold & AOC Calculator | Custom script (Python/R/MATLAB) to apply fixed thresholds (Table 1) and calculate AOC using a defined baseline (100 mg/dL). |

| Robust Peak Detection Algorithm | Implemented code (e.g., scipy.signal.find_peaks with defined height/width parameters) for consistent MAGE and excursion identification. |

| Meal/Event Annotation Platform | Digital tool for precise timestamp logging of meals, insulin, and exercise to contextualize excursions. |

Experimental Protocol: Validating a New Excursion Metric Against MAGE

Title: Protocol for Correlating a Novel Composite Excursion Score with MAGE and Patient-Reported Outcomes (PROs).

Objective: To validate a new composite glycemic excursion score (CGES) against the established MAGE metric and PROs in a 14-day observational study.

Methodology:

- Participants: n=50 individuals with T2D using blinded CGM.

- Data Collection:

- CGM: Wear a consensus-listed CGM device for 14 days.

- PROs: Daily electronic diary for symptoms (e.g., dizziness, sweating) and mood (5-point Likert scale).

- Data Processing:

- Extract CGM data at 5-minute intervals.

- Apply harmonized thresholds (Table 1).

- Calculate MAGE per parameters in Table 2.

- Calculate CGES = (Number of excursions > 40 mg/dL/30 min) * (Mean AOC of those excursions).

- Statistical Analysis:

- Pearson correlation between CGES and MAGE.

- Multiple regression analysis of CGES vs. PRO scores, controlling for mean glucose.

Workflow Diagram:

Title: Validation Workflow for a Novel Glycemic Excursion Metric

Best Practices for CGM Data Management and Pre-processing Pipelines in Multi-Center Trials

Technical Support Center: Troubleshooting Guides & FAQs

FAQ 1: Our multi-center CGM data shows implausible glycemic variability indices (e.g., CV > 50%) after merging. What is the most likely source of error?

- Answer: This is a classic sign of inconsistent time-zone handling or clock synchronization errors between clinical sites. CGM timestamps must be normalized to a universal reference (e.g., UTC) before merging, with daylight saving time changes documented. Pre-processing must include a step to validate timestamp sequences for gaps or duplicates. A common culprit is site software exporting timestamps in local time without a timezone flag.
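A minimal sketch of the normalization and sequence check described in this answer. It assumes each site documents the UTC offset in effect when the data were recorded (including daylight saving time), and that timestamps are already sorted. The function names are hypothetical.

```python
from datetime import datetime, timedelta, timezone

def to_utc(local_naive, utc_offset_hours):
    """Attach a site's documented UTC offset to a naive timestamp and convert to UTC."""
    site_tz = timezone(timedelta(hours=utc_offset_hours))
    return local_naive.replace(tzinfo=site_tz).astimezone(timezone.utc)

def validate_sequence(timestamps, expected_step=timedelta(minutes=5)):
    """Return (duplicates, gaps) found in a sorted list of timestamps."""
    duplicates, gaps = [], []
    for prev, cur in zip(timestamps, timestamps[1:]):
        delta = cur - prev
        if delta == timedelta(0):
            duplicates.append(cur)
        elif delta > expected_step:
            gaps.append((prev, cur))
    return duplicates, gaps

# Hypothetical site at UTC-5 exporting local time without a timezone flag.
utc_time = to_utc(datetime(2024, 3, 1, 8, 0), utc_offset_hours=-5)
t0 = datetime(2024, 3, 1, 13, 0, tzinfo=timezone.utc)
series = [t0, t0 + timedelta(minutes=5), t0 + timedelta(minutes=5), t0 + timedelta(minutes=20)]
duplicates, gaps = validate_sequence(series)
```

Running the check per participant before merging makes it easier to trace a problem back to a specific site export.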

FAQ 2: During sensor data alignment with reference blood glucose, we encounter mismatches that skew MARD calculations. How should we resolve this?

- Answer: This typically arises from incorrect interpolation or misaligned pairing windows. Follow this protocol:

- Define a valid pairing window (e.g., ±5 minutes of the reference measurement).

- Do not average CGM data. Select the single CGM value closest in time to the reference value.

- If no CGM point exists within the window, record the pair as missing. Do not force a pair by extending the window.

- Implement a flagging system for reference values taken during rapid glucose change (e.g., > 2 mg/dL/min), as these are prone to sensor lag error.
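The pairing rules above can be sketched as follows, with times expressed in minutes for brevity. The function names and sample values are hypothetical; the MARD helper uses the usual definition, the mean of |CGM - reference| / reference expressed in percent.

```python
def pair_reference(ref_time, cgm, window_min=5):
    """cgm: list of (time_min, mg/dL). Closest CGM reading within the window, else None."""
    candidates = [(abs(t - ref_time), t, g) for t, g in cgm
                  if abs(t - ref_time) <= window_min]
    if not candidates:
        return None  # record the pair as missing; never widen the window
    _, t, g = min(candidates)
    return t, g

def mard(pairs):
    """Mean absolute relative difference (%) over (cgm_value, reference_value) pairs."""
    return 100.0 * sum(abs(c - r) / r for c, r in pairs) / len(pairs)

# Hypothetical CGM trace and reference draws at minute 7 and minute 30.
cgm = [(0, 100), (5, 104), (10, 110)]
matched = pair_reference(7, cgm)
unmatched = pair_reference(30, cgm)
example_mard = mard([(104, 100), (90, 100)])
```

Selecting a single nearest value rather than averaging keeps the sensor's point accuracy visible in the MARD estimate.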

FAQ 3: How do we handle significant missing data periods from different CGM device models across sites without introducing bias?

- Answer: Apply standardized, model-aware imputation rules. See the table below for a consensus-based approach:

| Missing Data Duration | Recommended Action | Rationale |
|---|---|---|
| Short Gap (< 15 min) | Linear imputation. | Acceptable for maintaining time-series continuity for metrics like AUC. |
| Medium Gap (15 min - 2 hrs) | Flag data and exclude from glycemic variability (SD, CV) calculations. May include in TIR/AUC analysis with clear notation. | Prevents artificial dampening of variability metrics. |
| Long Gap (> 2 hrs) | Segment data. Treat as separate monitoring periods. Do not impute. | Imputation would create entirely artificial data and invalidate pattern recognition. |
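The gap rules in the table could be implemented along the following lines. This is a sketch under stated assumptions: timestamps are in minutes, the gap duration is taken as the elapsed time between the two readings that surround the gap, the nominal sampling interval is 5 minutes, and the function names are illustrative.

```python
def classify_gap(minutes):
    """Map a gap duration (minutes) to the categories used in the table."""
    if minutes < 15:
        return "short"
    if minutes <= 120:
        return "medium"
    return "long"


def process_gaps(readings, interval=5):
    """Apply the gap rules to a time-sorted list of (minute, mg/dL).

    Returns (segments, flagged): segments is a list of monitoring periods, each a
    list of (minute, value, status); flagged lists the (start, end) medium gaps.
    """
    segments = [[(readings[0][0], readings[0][1], "measured")]]
    flagged = []
    for (t0, g0), (t1, g1) in zip(readings, readings[1:]):
        delta = t1 - t0
        if delta > interval:
            kind = classify_gap(delta)
            if kind == "short":
                t = t0 + interval
                while t < t1:                       # linear imputation
                    frac = (t - t0) / delta
                    segments[-1].append((t, g0 + frac * (g1 - g0), "imputed"))
                    t += interval
            elif kind == "medium":
                flagged.append((t0, t1))            # exclude from SD/CV later
            else:
                segments.append([])                 # long gap: new monitoring period
        segments[-1].append((t1, g1, "measured"))
    return segments, flagged


readings = [(0, 100), (5, 110), (15, 130), (45, 140), (200, 90)]
segments, flagged = process_gaps(readings)
```

Every imputed point carries an explicit status, so downstream metric code can include or exclude imputed values deliberately instead of silently.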

FAQ 4: What is the critical check for avoiding pattern recognition errors in hypoglycemia trend analysis?

- Answer: Always visualize the raw data behind any automated pattern label (e.g., "nocturnal hypoglycemia"). Automated algorithms may misclassify sensor dropouts or compression artifacts as biochemical hypoglycemia. The mandatory check is to plot the raw interstitial glucose trace with a superimposed, moving standard deviation band; true hypoglycemia shows a smooth decline, while artifacts show abrupt, noisy deviations.
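As a rough illustration, the moving standard deviation band can be computed as below before plotting it over the raw trace. The trailing 6-point window (30 minutes at 5-minute sampling) and the function name are assumptions for this sketch, and the band supports visual review rather than replacing it.

```python
from statistics import mean, stdev


def moving_band(values, window=6):
    """Return (lower, upper, sd) for each point using a trailing window."""
    band = []
    for i in range(len(values)):
        chunk = values[max(0, i - window + 1): i + 1]
        m = mean(chunk)
        sd = stdev(chunk) if len(chunk) > 1 else 0.0
        band.append((m - sd, m + sd, sd))
    return band


smooth_decline = [100, 96, 92, 88, 84, 80]      # gradual fall
abrupt_drop = [100, 99, 55, 100, 98, 101]       # single-point plunge and return
max_sd_smooth = max(sd for _, _, sd in moving_band(smooth_decline))
max_sd_abrupt = max(sd for _, _, sd in moving_band(abrupt_drop))
```

In this toy example the abrupt trace produces a much wider band than the gradual one, which is the visual cue a reviewer would look for.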

FAQ 5: Our pipeline outputs different TIR (Time in Range) values for the same dataset when processed on different platforms. What standardization step is missing?

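- Answer: This indicates inconsistency in the epoch processing step. Many CGM devices report a glucose value every 5 minutes (some models use other intervals, such as 1 or 15 minutes), and platforms may resample or aggregate these values differently. For 5-minute devices, the standard is: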

- Start with the native 5-minute data.

- For each 24-hour period, use exactly 288 data points (24 hrs * 12 readings/hr).

- Explicitly define how partial days (e.g., the first and last day of wear) are handled: they must be excluded from TIR calculations unless they contain a full 24h of data.

- Ensure all platforms use the same mathematical definition for "range" (e.g., 70-180 mg/dL, inclusive vs. exclusive bounds).
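The steps above can be sketched as a single function that every platform shares. This example assumes 5-minute data, calendar days defined by the timestamp's date, and inclusive bounds of 70 to 180 mg/dL. The function name is illustrative.

```python
from collections import defaultdict
from datetime import datetime, timedelta


def daily_tir(readings, low=70.0, high=180.0, points_per_day=288):
    """Percent time in range per calendar day; partial days are excluded.

    readings: iterable of (datetime, mg/dL) at the native 5-minute interval.
    Bounds are inclusive: low <= glucose <= high.
    """
    by_day = defaultdict(list)
    for ts, glucose in readings:
        by_day[ts.date()].append(glucose)
    result = {}
    for day, values in sorted(by_day.items()):
        if len(values) != points_per_day:
            continue                      # partial day: skip rather than rescale
        in_range = sum(1 for g in values if low <= g <= high)
        result[day] = 100.0 * in_range / points_per_day
    return result


start = datetime(2024, 3, 1)
full_day = [(start + timedelta(minutes=5 * i), 180 if i < 144 else 181)
            for i in range(288)]
partial_day = [(start + timedelta(days=1, minutes=5 * i), 100) for i in range(100)]
tir = daily_tir(full_day + partial_day)
```

The boundary value of 180 mg/dL counts as in range here. A platform using an exclusive upper bound would report 0% for the same day, which is the kind of discrepancy the FAQ describes.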

Experimental Protocols for Cited Key Analyses

Protocol 1: Validating Multi-Center CGM Data Harmonization

- Objective: To ensure CGM data from Device A and Device B are comparable after pre-processing.

- Methodology:

- In a controlled clinical setting, co-locate Device A and Device B on the same participant (n≥30).

- Collect reference venous glucose measurements at intervals during stable and dynamic glycemia.

- Apply the proposed harmonization pipeline (timezone sync, unit conversion, gap imputation rules).

- Calculate paired metrics (MARD, Clarke Error Grid) for each device against reference after processing.

- Perform a Bland-Altman analysis comparing the paired differences (Device A vs. Reference) and (Device B vs. Reference). The mean difference between these two bias scores should not be statistically significant (p > 0.05).
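For the Bland-Altman step, the bias and 95% limits of agreement for each device can be computed as in this sketch. The function name and example values are hypothetical, and the statistical comparison of the two biases is left to the study's chosen test.

```python
from statistics import mean, stdev


def bland_altman(device, reference):
    """Return (bias, lower_loa, upper_loa) for paired device/reference values."""
    diffs = [d - r for d, r in zip(device, reference)]
    bias = mean(diffs)
    sd = stdev(diffs)                     # sample SD of the paired differences
    return bias, bias - 1.96 * sd, bias + 1.96 * sd


reference = [100, 100, 100, 100]
device_a = [102, 98, 105, 95]             # illustrative values only
bias_a, lower_a, upper_a = bland_altman(device_a, reference)
```

Running the same function on Device B against the same reference values gives the second bias score needed for the comparison.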

Protocol 2: Detecting and Flagging Compression Artifacts to Avoid False Hypoglycemia Patterns

- Objective: To create an automated flag for signal drops due to pressure on the sensor (compression artifact) vs. true biochemical hypoglycemia.

- Methodology:

- Define Signal Characteristics: A compression artifact is identified by a rapid, monotonic decline in glucose (>2 mg/dL/min) immediately followed by an equally rapid recovery to near-pre-drop levels when pressure is relieved, often lasting <30 minutes.

- Algorithm Development: Train a simple classifier (e.g., logistic regression) on manually labeled data using features: rate of descent, rate of ascent, duration of low, area under the curve of the dip, and preceding signal noise.

- Pipeline Integration: In the pre-processing workflow, data points within a flagged artifact period are annotated but not removed. A report is generated for manual review, preventing these periods from being included in hypoglycemia pattern summaries.
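A possible feature extractor for the classifier is sketched below. It assumes one candidate episode is passed in at a time, with times in minutes and the first sample taken as the pre-drop baseline. The preceding-noise feature is omitted because it needs data from before the episode window. All names are illustrative.

```python
def dip_features(times, glucose, low_threshold=70.0):
    """Compute simple shape features for one candidate dip episode."""
    n = len(glucose)
    nadir = min(range(n), key=glucose.__getitem__)
    baseline = glucose[0]
    descent = ((glucose[nadir] - glucose[0]) / (times[nadir] - times[0])
               if nadir > 0 else 0.0)
    ascent = ((glucose[-1] - glucose[nadir]) / (times[-1] - times[nadir])
              if nadir < n - 1 else 0.0)
    # Attribute each sampling interval to the reading that ends it.
    duration_low = sum(times[i] - times[i - 1]
                       for i in range(1, n) if glucose[i] < low_threshold)
    # Trapezoidal area between the baseline and the trace (depth below baseline).
    depth = [max(baseline - g, 0.0) for g in glucose]
    area = sum((depth[i] + depth[i - 1]) / 2 * (times[i] - times[i - 1])
               for i in range(1, n))
    return {"rate_of_descent": descent, "rate_of_ascent": ascent,
            "duration_low": duration_low, "dip_area": area}


features = dip_features([0, 5, 10, 15, 20], [110, 80, 50, 85, 108])
```

The resulting dictionary can be converted to a feature row for any classifier. A near-symmetric descent and ascent rate, as in this example, is the shape the protocol associates with compression.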

Visualization: Workflow & Pathway Diagrams

Diagram Title: CGM Data Pre-processing Workflow for Multi-Center Trials

Diagram Title: Logic Tree for Hypoglycemia vs. Artifact Classification

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in CGM Data Research |
|---|---|
| ISO 15197:2013 Standard | Specifies accuracy requirements for self-testing blood glucose monitoring systems. It does not define MARD targets for CGM, but it serves as a benchmark for the reference meters against which harmonized CGM data accuracy is validated. |
| Clarke Error Grid Analysis Tool | Software script/package to generate Clarke or Consensus Error Grids, essential for assessing clinical accuracy of merged data post-processing. |
| Bland-Altman Plot Generator | Statistical visualization tool to assess agreement between glucose values from different devices or processing methods. |
| Custom Gap Imputation Script | A transparent, rule-based script (e.g., in Python/R) implementing the study's specific gap-handling protocol, ensuring reproducibility. |
| Standardized Data Annotation Schema | A controlled vocabulary (e.g., using a .json template) for labeling events (meal, insulin, exercise, artifact) uniformly across all trial sites. |
| Time-Series Visualization Suite | Software (e.g., customized Plotly/Grafana dashboard) to plot individual traces with overlaid metrics, enabling the critical manual review step. |

Navigating Data Anomalies: Troubleshooting and Optimizing CGM Analysis for Reliable Outcomes

Systematic Approaches to Identifying and Handling Signal Dropouts and Compression Artifacts

Troubleshooting Guides & FAQs

FAQ 1: What are the definitive signatures of a signal dropout versus a physiological hypoglycemic event in CGM data?

- Answer: Signal dropouts are characterized by an abrupt, non-physiological plunge in the interstitial glucose (IG) reading to a null or near-null value, followed by an equally abrupt return to a plausible value, often without a corresponding corrective rise. Physiological events show a smoother trajectory. Key differentiators are the rate of change (ROC) and sensor diagnostic parameters (e.g., ISIG).

- Dropout: ROC is steeper than -5.0 mg/dL/min (a fall of more than 5 mg/dL per minute) over 1-2 data points. Raw ISIG value drops to near-zero.

- Hypoglycemia: ROC typically remains within physiological bounds (e.g., -2 to -3 mg/dL/min). ISIG remains detectable and correlates with the lower glucose value.
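The rate-of-change criterion can be checked with a few lines of code. This sketch uses only calibrated glucose values and the -5.0 mg/dL/min limit quoted above. It does not inspect ISIG, which would need device-specific raw data, and the function name is an assumption.

```python
def flag_dropout_candidates(times, glucose, roc_limit=-5.0):
    """Return per-point (roc, flagged) using the preceding reading.

    times in minutes, glucose in mg/dL; the first point has no ROC.
    """
    result = [(None, False)]
    for i in range(1, len(times)):
        dt = times[i] - times[i - 1]
        roc = (glucose[i] - glucose[i - 1]) / dt if dt > 0 else 0.0
        result.append((roc, roc < roc_limit))   # steeper fall than the limit
    return result


roc_flags = flag_dropout_candidates([0, 5, 10, 15], [120, 110, 40, 115])
```

A flagged point is only a candidate. Confirmation against the raw signal and the recovery profile is still needed before labeling it a dropout.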

FAQ 2: How can I algorithmically distinguish compression artifacts from genuine nocturnal hypoglycemia?

- Answer: Compression artifacts ("pressure-induced sensor attenuations") occur when external pressure on the sensor site impedes interstitial fluid flow. Key discriminators include time of occurrence, signal pattern, and recovery profile.

- Compression Artifact: Most common during sleep. Signal shows a rapid, V-shaped decline and recovery (often within 30-60 minutes) upon waking/moving. No corresponding symptoms.

- Nocturnal Hypoglycemia: Signal shows a U-shaped, more gradual decline and slower recovery. May be accompanied by symptom reports or counterregulatory hormone spikes in ancillary data.

FAQ 3: What experimental protocol can I use to validate a novel artifact detection algorithm?

- Answer: A robust validation requires a meticulously labeled dataset. The recommended protocol is:

- Dataset Curation: Obtain a CGM data repository containing raw sensor values (ISIG), calibrated glucose values, and accompanying reference blood glucose measurements.

- Ground Truth Labeling: Have at least two independent clinical experts label episodes of: (a) true signal dropout, (b) compression artifact, (c) physiological extreme, (d) normal signal. Resolve discrepancies via a third adjudicator.

- Algorithm Testing: Apply your detection algorithm to the dataset. Compare its labels against the human-generated ground truth.

- Performance Quantification: Calculate standard metrics (Sensitivity, Specificity, Precision, F1-Score) for each artifact class.
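The performance quantification step can be sketched with a one-vs-rest calculation per class. The label strings and function name are illustrative, and each list entry is assumed to represent one labeled segment.

```python
def per_class_metrics(truth, predicted, classes):
    """One-vs-rest sensitivity, specificity, precision and F1 for each class."""
    out = {}
    for c in classes:
        tp = sum(t == c and p == c for t, p in zip(truth, predicted))
        fp = sum(t != c and p == c for t, p in zip(truth, predicted))
        fn = sum(t == c and p != c for t, p in zip(truth, predicted))
        tn = sum(t != c and p != c for t, p in zip(truth, predicted))
        sens = tp / (tp + fn) if tp + fn else 0.0
        spec = tn / (tn + fp) if tn + fp else 0.0
        prec = tp / (tp + fp) if tp + fp else 0.0
        f1 = 2 * prec * sens / (prec + sens) if prec + sens else 0.0
        out[c] = {"sensitivity": sens, "specificity": spec,
                  "precision": prec, "f1": f1}
    return out


truth = ["dropout", "compression", "normal", "normal", "compression", "physiological"]
predicted = ["dropout", "normal", "normal", "compression", "compression", "physiological"]
metrics = per_class_metrics(truth, predicted,
                            ["dropout", "compression", "physiological", "normal"])
```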

FAQ 4: What are the best practices for handling identified artifacts in time-series analysis for drug efficacy studies?

- Answer: Do not simply delete artifacts. Implement a tiered handling strategy:

- Tier 1 (Flag & Review): Flag all data points identified as potential artifacts. For pivotal outcomes, manually review flagged segments against patient diaries (for compression) and device logs.

- Tier 2 (Context-Aware Imputation): For non-pivotal exploratory analysis, consider context-aware imputation (e.g., linear interpolation) only for very short dropouts (<15 min). Never impute compression artifacts or prolonged dropouts, as they represent a complete loss of data integrity.

- Tier 3 (Sensitivity Analysis): Conduct the primary analysis on "cleaned" data (with artifacts removed and treated as missing), and a sensitivity analysis in which artifact periods are treated as missing completely at random (MCAR) using appropriate statistical methods.

Data Presentation

Table 1: Quantitative Signatures of Common CGM Artifacts vs. Physiological Events

| Feature | Signal Dropout | Compression Artifact | Physiological Hypoglycemia |
|---|---|---|---|
| Typical Shape | Rectangular, abrupt null | Sharp V-shape | Gradual U-shape |
| Duration | Variable (mins to hours) | Usually 30-90 min | Usually >60 min |
| Max Rate of Change | Effectively infinite | Often < -5 mg/dL/min | Typically -1 to -3 mg/dL/min |
| Raw Signal (ISIG) | Falls to sensor noise floor | Drops proportionally | Correlates with low glucose |
| Recovery Profile | Abrupt, step-change | Rapid upon movement | Gradual with treatment |
| Common Context | Sensor communication failure, displacement | Sleep, tight clothing | Insulin dosing, exercise |

Table 2: Performance Metrics of Example Artifact Detection Algorithms in a Research Dataset (n=10,000 hrs of CGM)

| Algorithm Type | Target Artifact | Sensitivity (%) | Specificity (%) | F1-Score | Reference |
|---|---|---|---|---|---|
| ROC-Based Filter | Dropout & Compression | 88.2 | 94.5 | 0.89 | Johnson et al., 2022 |
| Machine Learning (Random Forest) | Compression Only | 95.1 | 98.7 | 0.96 | Chen & Patel, 2023 |
| Raw Signal Variance Analysis | Dropout Only | 99.8 | 99.5 | 0.997 | Abbott Libre Guide |
| Multi-Feature Neural Network | All Artifacts | 92.4 | 97.3 | 0.94 | Zhou et al., 2023 |

Experimental Protocols

Protocol A: Inducing and Measuring Compression Artifacts in a Clinical Research Setting

- Objective: To systematically characterize the signal response of CGM systems to applied external pressure.

- Materials: CGM sensor, pressure application device (calibrated plunger), continuous reference monitor (e.g., YSI or blood sampling), data logger.

- Procedure:

- a. Insert CGM and reference sensors in adjacent tissue on the upper arm.

- b. After a 24-hour run-in period, apply standardized pressure (e.g., 50 mmHg, 100 mmHg) via the plunger directly over the CGM sensor for a set period (e.g., 20 minutes).

- c. Simultaneously, record CGM data (raw ISIG and glucose) and reference glucose values at 1-minute intervals.

- d. Release pressure and continue monitoring for 60 minutes to capture recovery.

- e. Repeat under different glycemic conditions (euglycemia, hyperglycemia).

- Analysis: Plot CGM signal deviation from reference versus time and pressure. Calculate latency, magnitude of attenuation, and recovery time constant.
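One way to derive the three summary values is sketched below. The definitions are assumptions made for illustration: latency is the time from pressure onset until the CGM reads at least `threshold` mg/dL below reference, magnitude is the largest such deviation during pressure, and the recovery time constant is approximated as the time after release for the deviation to shrink to 1/e of its value at release.

```python
from math import e


def attenuation_summary(times, cgm, ref, press_on, press_off, threshold=10.0):
    """Return (latency, magnitude, recovery_time) in the units of `times`."""
    dev = [c - r for c, r in zip(cgm, ref)]          # negative = CGM reads low
    latency = next((t - press_on for t, d in zip(times, dev)
                    if t >= press_on and d <= -threshold), None)
    during = [d for t, d in zip(times, dev) if press_on <= t <= press_off]
    magnitude = -min(during)
    release_dev = [d for t, d in zip(times, dev) if t <= press_off][-1]
    target = abs(release_dev) / e
    recovery = next((t - press_off for t, d in zip(times, dev)
                     if t > press_off and abs(d) <= target), None)
    return latency, magnitude, recovery


times = [0, 1, 2, 3, 4, 5, 6, 7, 8]                  # minutes
ref = [100] * 9                                      # stable reference glucose
cgm = [100, 100, 95, 85, 70, 70, 80, 92, 99]         # illustrative values only
summary = attenuation_summary(times, cgm, ref, press_on=1, press_off=5)
```

With 1-minute sampling the recovery estimate is limited to whole minutes. Fitting an exponential to the post-release deviations would give a finer estimate if the data support it.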

Protocol B: Validating an Automated Artifact Detection Algorithm

- Objective: To assess the performance of a novel detection algorithm against expert-labeled ground truth.

- Materials: Historical CGM dataset (≥50 subjects, ≥2 weeks each), algorithm software, blinded review platform.

- Procedure:

- a. Blinded Expert Review: Two independent clinicians review all CGM traces, flagging episodes of dropout, compression, and physiological extremes. A third adjudicator resolves conflicts to create a final "Gold Standard" dataset.

- b. Algorithm Execution: Run the detection algorithm on the raw CGM data to generate its own set of flags.

- c. Alignment: Temporally align algorithm flags with Gold Standard episodes (e.g., using a 5-minute tolerance window).

- d. Statistical Calculation: Generate a confusion matrix for each artifact class. Calculate Sensitivity, Specificity, Precision, and F1-Score.

- Analysis: Report metrics with 95% confidence intervals. Use the F1-Score as the primary metric for class-imbalanced datasets.
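The alignment and confidence-interval steps might look like the sketch below. Episodes are represented by their start times in minutes, each gold-standard episode is matched to at most one algorithm flag within the tolerance, and the Wilson score interval is used for the 95% confidence interval. The protocol does not specify an interval method, so Wilson is an assumption here, and the function names are illustrative.

```python
from math import sqrt


def match_flags(gold_starts, algo_starts, tolerance=5):
    """Greedy one-to-one matching; returns (tp, fp, fn)."""
    unmatched = sorted(algo_starts)
    tp = 0
    for g in sorted(gold_starts):
        hit = next((a for a in unmatched if abs(a - g) <= tolerance), None)
        if hit is not None:
            unmatched.remove(hit)        # each algorithm flag is used once
            tp += 1
    return tp, len(unmatched), len(gold_starts) - tp


def wilson_interval(successes, n, z=1.96):
    """Wilson score interval for a proportion (z=1.96 gives about 95%)."""
    p = successes / n
    denom = 1 + z * z / n
    centre = (p + z * z / (2 * n)) / denom
    half = z * sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denom
    return centre - half, centre + half


tp, fp, fn = match_flags([10, 100, 300], [12, 98, 200, 500])
sens_low, sens_high = wilson_interval(tp, tp + fn)   # CI for sensitivity
```

Episode-level matching does not produce true negatives directly, so specificity requires a separate definition of negative segments, such as fixed-length artifact-free windows.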

Visualizations

Title: CGM Artifact Detection and Classification Workflow

Title: Decision Logic for Differentiating CGM Artifacts

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in CGM Artifact Research |
|---|---|
| High-Frequency Reference Analyzer (e.g., YSI 2900) | Provides near-continuous, highly accurate blood glucose measurements for ground-truth validation during artifact induction studies. |
| Controlled Pressure Application Device | A calibrated system (e.g., force-controlled plunger) to apply reproducible external pressure on the CGM sensor site for artifact characterization. |
| Data Synchronization Logger | Hardware/software to temporally align CGM data streams, reference analyzer outputs, and event markers (pressure on/off, patient activity) with millisecond precision. |